AIDIT AI — The Trust Layer for secure AI-assisted software development in GitLab.

The three pillars of AIDIT AI: Deterministic detection, automated registry validation, and deep security review via Claude.

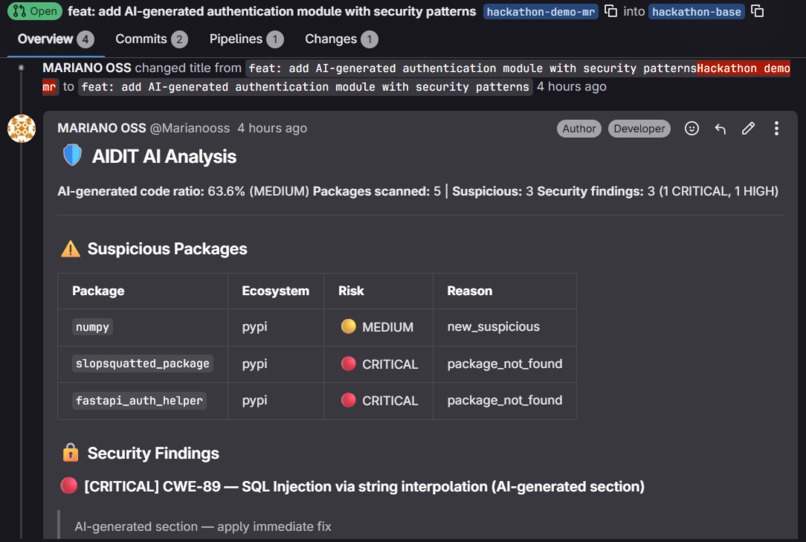

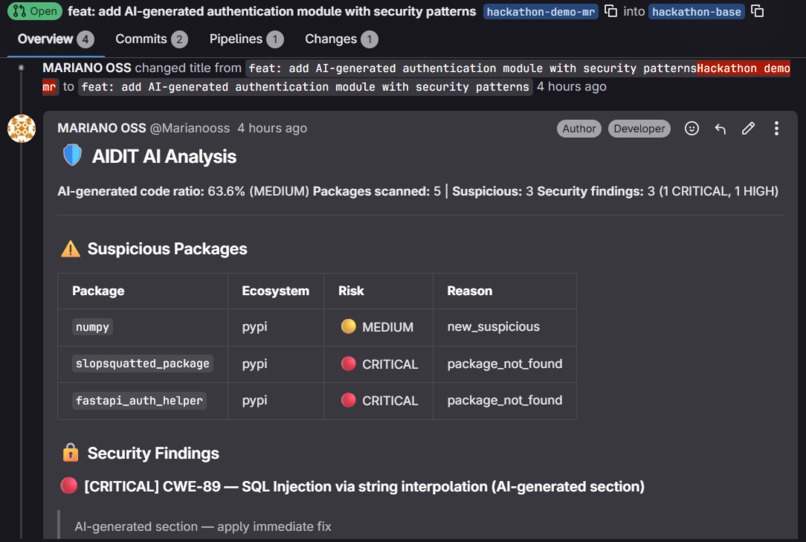

Real-time AIDIT AI audit on a GitLab Merge Request, detecting hallucinated packages and critical SQL injection in AI-generated code.

Inspiration: The "vibe coding" wave of 2025–2026 created a silent crisis: developers are shipping AI-generated code at unprecedented speed, but no tool in the SDLC treats it differently from human-written code. We discovered that 45% of AI-generated code contains security vulnerabilities that SAST tools miss — not because the scanners are broken, but because they look for known CVEs in known packages. AI-generated code has neither.

The attack vector that alarmed us most was slopsquatting: LLMs hallucinate package names consistently, and attackers register those exact names with malware. Current SCA tools only scan packages that exist — they are completely blind to packages that don't. We wanted to build the trust layer that GitLab Duo itself needs but doesn't have yet.

What it does: AIdit is a GitLab Duo Agent that activates on every Merge Request and answers one question: "Can we trust this AI-generated code to ship?"

In under 90 seconds, it runs a 6-step automated pipeline:

1. Detects AI-generated code per file using deterministic AST-based heuristics: no LLM call, no false positives, sub-3-second analysis.
2. Scans every imported package against PyPI, npm, Maven Central, and crates.io (Cargo) in parallel. Non-existent packages = immediate pipeline block.
3. Calls Claude (Anthropic API) with the full diff, annotated with AI ratios. Claude applies 3x stricter scrutiny to AI-generated sections and returns findings with CWE, severity, exact line range, and a concrete fix.
4. Generates an AI Provenance Report: a signed JSON artifact recording which code was AI-generated, what review it received, and the pipeline decision. Designed for 2026 AI software transparency compliance requirements.
5. Posts a structured MR comment with the full analysis plus inline diff notes on CRITICAL and HIGH findings.
6. Sets the pipeline status to ✅ PASSED, 🟡 REVIEW REQUIRED, or 🔴 BLOCKED based on configurable risk thresholds.

How we built it (The Vibe Coding Revolution): What makes AIdit unique is its own creation process: it was developed entirely through "Vibe Coding." I am not a professional developer; I am a builder who uses only AI tools. By orchestrating advanced AI agents like Google Antigravity and VS Code AI extensions, I was able to architect, code, and deploy this entire full-stack solution.
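To make the package-scan and gating steps of the pipeline more concrete, here is a minimal Python sketch of how registry lookup results might be turned into risk tiers and a pipeline status. It is not AIdit's actual code: the `PackageInfo` fields, the function names, the 1,000-download cutoff, and the mapping of HIGH to REVIEW REQUIRED are all assumptions made for illustration. Only the tiers themselves (missing package = CRITICAL, very new package with low downloads = HIGH) come from this writeup.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class PackageInfo:
    """Hypothetical result of a registry lookup for one imported package."""
    name: str
    exists: bool
    age_days: Optional[int] = None          # days since first publication
    weekly_downloads: Optional[int] = None


# Assumed thresholds; the writeup only says thresholds are configurable.
NEW_PACKAGE_DAYS = 7
LOW_DOWNLOADS = 1_000


def classify(pkg: PackageInfo) -> str:
    """Map a lookup result to a risk tier: CRITICAL, HIGH, or OK."""
    if not pkg.exists:
        # A name no registry knows is likely hallucinated and open to slopsquatting.
        return "CRITICAL"
    is_new = pkg.age_days is not None and pkg.age_days <= NEW_PACKAGE_DAYS
    is_rare = pkg.weekly_downloads is not None and pkg.weekly_downloads < LOW_DOWNLOADS
    if is_new and is_rare:
        return "HIGH"
    return "OK"


def pipeline_status(packages: Iterable[PackageInfo]) -> str:
    """Collapse per-package tiers into one merge request status."""
    tiers = {classify(p) for p in packages}
    if "CRITICAL" in tiers:
        return "BLOCKED"
    if "HIGH" in tiers:
        return "REVIEW REQUIRED"  # assumed mapping, not confirmed by the writeup
    return "PASSED"
```

Keeping the classification a pure function of the lookup result means it can be unit-tested without touching any registry, which fits the mock-only test approach described in this writeup.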

AIdit has two components:

- GitLab Duo Agent: pure YAML configuration. We write the logic; the GitLab platform executes and orchestrates it.
- AIdit Tools Server: a stateless FastAPI service (Python 3.12) on Railway that exposes the 6 tool endpoints.

Challenges we ran into:

- Defining "AI-generated" without an LLM. We needed detection to be fast, cheap, and deterministic. We identified 7 AST-based signals (uniform docstrings, variable naming patterns, etc.) that reliably distinguish AI code.
- Slopsquatting edge cases. Flagging risky packages required a nuanced risk model, ranging from CRITICAL (package missing from the registry) to HIGH (package only 7 days old with low downloads).
- Token budget management. We built a prompt construction algorithm that prioritizes high-AI-ratio files when the diff exceeds Claude's token limit.

Accomplishments we're proud of:

- Achieving a high-speed, AST-based AI detection heuristic that requires zero LLM tokens.
- Producing the first slopsquatting scanner integrated directly into a GitLab MR workflow.
- Ensuring end-to-end execution in under 90 seconds at a cost of only ~$0.012 per MR.
- 41 unit tests, all green, with zero external API calls required (full mock coverage).

What we learned: GitLab's "AI Paradox" framing is right: the same AI acceleration that makes teams productive is also the source of a new risk category. We also learned that the most valuable part of AIdit is the provenance tracking: keeping an auditable record of AI usage is what organizations actually need for 2026 compliance.
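As an illustration of the provenance tracking mentioned here, a signed JSON report could be produced roughly as follows. This is only a sketch under stated assumptions: the writeup does not say which signature scheme AIdit uses, so HMAC-SHA256 with a shared secret is swapped in as a simple stand-in, and every field name in the example report is hypothetical.

```python
import hashlib
import hmac
import json


def _canonical_bytes(data: dict) -> bytes:
    # Sorted keys and fixed separators so equal reports always serialize identically.
    return json.dumps(data, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_report(report: dict, secret: bytes) -> dict:
    """Return a copy of the report with an HMAC-SHA256 signature attached."""
    signature = hmac.new(secret, _canonical_bytes(report), hashlib.sha256).hexdigest()
    return {**report, "signature": signature}


def verify_report(signed: dict, secret: bytes) -> bool:
    """Recompute the signature over every field except the signature itself."""
    body = {k: v for k, v in signed.items() if k != "signature"}
    expected = hmac.new(secret, _canonical_bytes(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, str(signed.get("signature", "")))


# Hypothetical report contents, loosely following the fields the writeup lists.
report = {
    "merge_request": 42,
    "files": [{"path": "app/db.py", "ai_ratio": 0.8}],
    "review": "claude",
    "decision": "REVIEW REQUIRED",
}
signed = sign_report(report, b"demo-secret")
```

An auditor holding the same secret can call `verify_report(signed, secret)` to check that the recorded decision was not edited after the pipeline ran. A real deployment would more likely use an asymmetric signature so that verifiers never hold the signing key.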

What's next for AIdit:

- Policy UI: Set per-branch thresholds.
- Auto-fix PRs: Suggesting correct package names for slopsquatting hits.
- GitHub Actions port: Expanding reach beyond GitLab.
- SOC2 / ISO 27001 module: Exporting reports directly for audit frameworks.
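For the auto-fix idea, one plausible starting point is fuzzy matching a hallucinated name against a list of known packages. The sketch below uses Python's standard `difflib.get_close_matches`. The function name `suggest_packages`, the 0.8 similarity cutoff, and the tiny known-package list are assumptions for illustration, not part of AIdit today.

```python
from difflib import get_close_matches
from typing import List, Sequence


def suggest_packages(name: str, known: Sequence[str], limit: int = 3,
                     cutoff: float = 0.8) -> List[str]:
    """Return up to `limit` known package names that closely resemble `name`."""
    # get_close_matches ranks candidates by SequenceMatcher similarity,
    # dropping anything that scores below the cutoff.
    return get_close_matches(name.lower(), [k.lower() for k in known],
                             n=limit, cutoff=cutoff)


# Hypothetical allow-list; a real one would come from a registry index.
KNOWN_PACKAGES = ["requests", "numpy", "flask"]
suggestions = suggest_packages("reqeusts", KNOWN_PACKAGES)
```

A high cutoff keeps suggestions conservative, which matters here: proposing the wrong replacement package would swap one supply-chain risk for another.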
