Meet the AI Room Designer: your intelligent companion for the perfect space. A creative app for generating photorealistic rooms & 3D models.

This isn't just one AI; it's a creative team. A robust backend orchestrates a multi-modal pipeline for visuals, text, 3D, and voice.

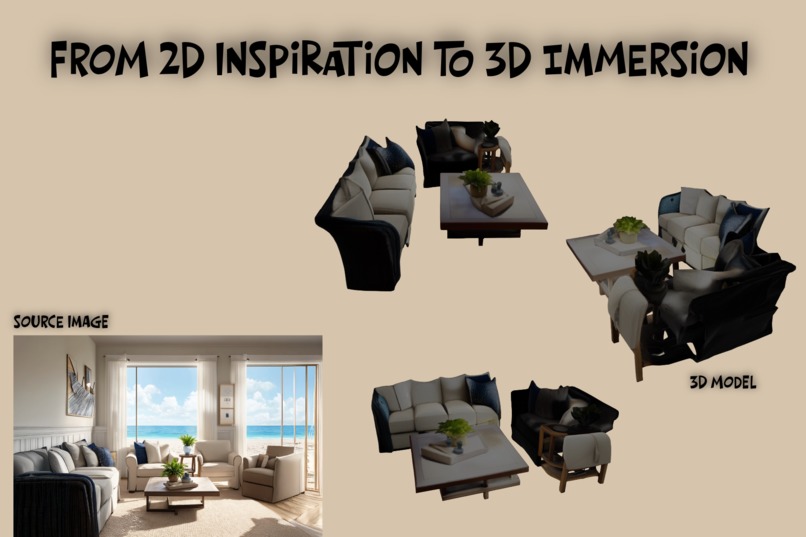

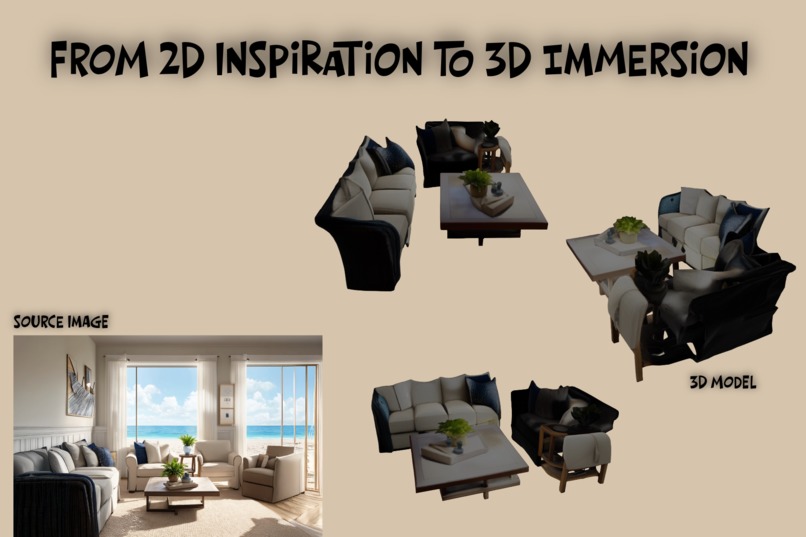

From 2D photo to 3D reality. This pipeline generates 36 AI camera views from a single photo, creating a detailed, interactive 3D model.

Inspiration

It all started with a simple, frustrating question: why is it so hard to visualize a new design for an empty room? That gap between a vague idea and a clear vision is a universal creative block. I was inspired to build a tool that could instantly bridge that "imagination gap," turning simple text into photorealistic images and empowering anyone to become their own interior designer.

What it does

AI Room Designer is an intelligent, multi-modal design companion. It's not just a tool; it's a creative partner that allows you to:

- Generate photorealistic rooms from a simple text prompt in over 36 distinct styles.
- Redesign your own space by taking a photo and applying a new aesthetic.
- Visualize in 3D with an "Ultimate Quality" pipeline that transforms a 2D image into a high-fidelity, interactive 3D model.
- Interact with an "AI Design Consultant", a gpt-oss powered local agent that provides dynamic, voice-driven descriptions and expert design advice.
- Edit with AI Vision, a feature that uses segmentation to identify and recolor any object in the scene.
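To make the AI Vision recolor step concrete, here is a minimal sketch of tinting the pixels selected by a segmentation mask. It is an illustration only: the `recolor` function, the nested-list image format, and the brightness-preserving tint are assumptions made for this example, not the app's actual code. In the app the mask would come from the segmentation model; here it is written by hand.

```python
Pixel = tuple[int, int, int]


def recolor(
    image: list[list[Pixel]],
    mask: list[list[bool]],
    target: Pixel,
) -> list[list[Pixel]]:
    """Tint masked pixels toward `target`, scaled by each pixel's brightness.

    Hypothetical helper: unmasked pixels are returned unchanged.
    """
    out = []
    for img_row, mask_row in zip(image, mask):
        new_row = []
        for (r, g, b), selected in zip(img_row, mask_row):
            if selected:
                # Perceptual luminance in [0, 1] (Rec. 601 weights).
                lum = (0.299 * r + 0.587 * g + 0.114 * b) / 255.0
                new_row.append(tuple(round(c * lum) for c in target))
            else:
                new_row.append((r, g, b))
        out.append(new_row)
    return out


# A 2x2 toy image: only the top-left and bottom-right pixels are selected.
image = [[(255, 255, 255), (0, 0, 0)], [(10, 20, 30), (128, 128, 128)]]
mask = [[True, False], [False, True]]
result = recolor(image, mask, target=(200, 100, 50))
```

Scaling the target color by the original luminance keeps shading and highlights visible, so a recolored sofa still looks lit by the room rather than painted flat.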

How we built it

The application is built on a modern, decoupled architecture designed for robustness, scalability, and a unique local-first philosophy.

- Frontend: A sleek and responsive UI built with React, Vite, and TypeScript, styled with Tailwind CSS. It's hosted on Vercel.
- Backend: A powerful Python/FastAPI server that acts as a secure AI Orchestration Engine. It manages all API keys and orchestrates the complex pipeline of AI models. It's hosted on Railway.

This resilient, local-first architecture is the core of the project. As you can see in our demo, even with the Wi-Fi turned off, our gpt-oss powered "AI Design Consultant" continues to provide smart, contextual advice. When we reconnect to the cloud, the application seamlessly enhances the experience with high-fidelity visuals from Fal.ai and realistic voice narration from ElevenLabs.
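The local-first behavior described above can be sketched as a simple "answer locally, enhance when online" pattern. This is a minimal sketch under stated assumptions: `ConsultantReply`, `consult`, and the injected `local_chat` and `cloud_tts` callables are hypothetical names for this example, and the real backend may structure its fallback differently.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ConsultantReply:
    text: str                      # always produced by the local model
    audio: Optional[bytes] = None  # only set when the cloud voice step succeeds
    notes: list[str] = field(default_factory=list)


def consult(
    prompt: str,
    local_chat: Callable[[str], str],
    cloud_tts: Callable[[str], bytes],
) -> ConsultantReply:
    """Answer with the local model first, then try to add cloud narration."""
    reply = ConsultantReply(text=local_chat(prompt))
    try:
        reply.audio = cloud_tts(reply.text)
    except OSError as exc:  # ConnectionError and TimeoutError subclass OSError
        # Offline: keep the text answer and record why audio is missing.
        reply.notes.append(f"voice unavailable: {exc}")
    return reply


def offline_tts(text: str) -> bytes:
    """Stand-in for a cloud voice call made with the Wi-Fi turned off."""
    raise ConnectionError("offline")


offline_reply = consult("Ideas for a small study?", lambda p: "Try warm oak.", offline_tts)
online_reply = consult("Ideas for a small study?", lambda p: "Try warm oak.", lambda t: t.encode())
```

The text answer never depends on the network, so a failed cloud call degrades the experience instead of breaking it.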

The AI Orchestra:

- gpt-oss-20b (via LM Studio): The core "brain" of the application, running as a true local agent to power the intelligent chat and dynamic narration features entirely offline.
- Fal.ai: The powerhouse for all visual generation, providing stable-diffusion-v3-medium for image creation, llava-next for visual analysis, sam2/image for segmentation, and instant-mesh/triposr for the advanced 3D pipeline.
- ElevenLabs: The "voice" of the AI consultant, converting the dynamic text from gpt-oss into high-quality, realistic audio.
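As an illustration of the local agent, the sketch below builds a chat request for LM Studio's OpenAI-compatible local server using only the standard library. The URL (LM Studio's usual default port), the `MODEL_ID` string, the system prompt, and the function names are all assumptions for this example; check the values shown in your own LM Studio instance.

```python
import json
import urllib.request

# Assumed values: adjust to match the local LM Studio server and loaded model.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"
MODEL_ID = "gpt-oss-20b"
SYSTEM_PROMPT = "You are an expert interior design consultant."  # hypothetical


def build_chat_request(user_message: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": user_message},
        ],
    }
    return urllib.request.Request(
        LM_STUDIO_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_consultant(user_message: str, timeout: float = 60.0) -> str:
    """Send the request to the local server and return the reply text."""
    request = build_chat_request(user_message)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Suggest a palette for a north-facing bedroom.")
```

Calling `ask_consultant(...)` only works while a local server is running; the returned text is what would then be handed to the voice step when a connection is available.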

Challenges we ran into

This project was a marathon of real-world problem-solving. Early on, the primary image model went behind a sudden API billing wall, which forced a critical architectural pivot from a fragile client-side app to the robust, backend-centric orchestration engine it is today.

The biggest challenge was achieving high-quality 3D reconstruction. The initial models produced "funky," low-detail results. Conquering this required deep research and the implementation of the "Ultimate Quality" 3D Pipeline, a sophisticated, multi-stage process that uses AI to generate 36 unique camera angles before feeding them into an advanced multi-view 3D model. This complex workflow was the key to transforming the feature from a novelty into a professional-grade tool.
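To give a feel for what 36 camera angles can look like, here is a minimal sketch that places 36 virtual cameras on an orbit around the scene. The even 10-degree spacing, the fixed elevation, the radius, and the names `CameraPose` and `orbit_poses` are assumptions for illustration; the actual pipeline may distribute its views differently.

```python
import math
from typing import NamedTuple


class CameraPose(NamedTuple):
    azimuth_deg: float
    x: float
    y: float  # height above the orbit plane
    z: float


def orbit_poses(
    n_views: int = 36,
    radius: float = 2.0,
    elevation_deg: float = 15.0,
) -> list[CameraPose]:
    """Return camera positions evenly spaced on a circle around the origin."""
    if n_views <= 0:
        raise ValueError("n_views must be positive")
    elevation = math.radians(elevation_deg)
    poses = []
    for i in range(n_views):
        azimuth_deg = 360.0 * i / n_views
        azimuth = math.radians(azimuth_deg)
        poses.append(
            CameraPose(
                azimuth_deg,
                radius * math.cos(elevation) * math.cos(azimuth),
                radius * math.sin(elevation),
                radius * math.cos(elevation) * math.sin(azimuth),
            )
        )
    return poses


poses = orbit_poses()
```

Each pose would then drive one AI-generated view, and the full set is what a multi-view 3D model consumes.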

Accomplishments that we're proud of

I'm incredibly proud of building a truly multi-modal AI platform that seamlessly integrates text, image, 3D, and audio generation. The successful implementation of the local-first gpt-oss agent via LM Studio is a major accomplishment, creating a genuinely intelligent and interactive experience that works even without an internet connection (offline, the consultant responds in text).

Most of all, I'm proud of the final user experience. The app is not just a collection of features; it's a polished, cohesive product with a "soul." The interactive AI companion, the immersive 3D viewer, and the thoughtful "Contextual Loading" system all work together to create a tool that is both powerful and delightful to use.

What we learned

This project was a deep dive into the practical challenges and incredible potential of AI orchestration. I learned three key lessons:

- A backend-centric architecture is essential for building secure, scalable, and powerful AI applications.
- The most impressive results come from chaining multiple specialized AI models together, allowing each to do what it does best.
- The future of creative tools lies in building intelligent, collaborative companions that don't just execute commands, but enhance our own creativity.

What's next for AI Room Designer - Rooms Through Time

The future is incredibly exciting. The current architecture is a perfect foundation for even more advanced features. The next steps include:

- Integrating Hugging Face to dynamically generate unique character avatars for the AI consultant.
- Expanding the "AI Vision" capabilities to include object identification and smart replacement suggestions.
- Implementing a "High-Quality Multi-View" 3D Pipeline to push the level of detail even further, moving closer to true digital twins of interior spaces.
- Deploying a cloud-based gpt-oss model to provide a seamless, high-performance experience for all users in a production environment.

Built With

- css

- elevenlabs

- fal

- fastapi

- google/model-viewer

- gpt-oss-20b

- httpx

- huggingface

- instant-mesh

- javascript

- llava-next

- pillow

- python

- react

- sam2/image

- stable-diffusion-v3-medium

- tailwind

- triposr

- typescript

- uvicorn

- vite
