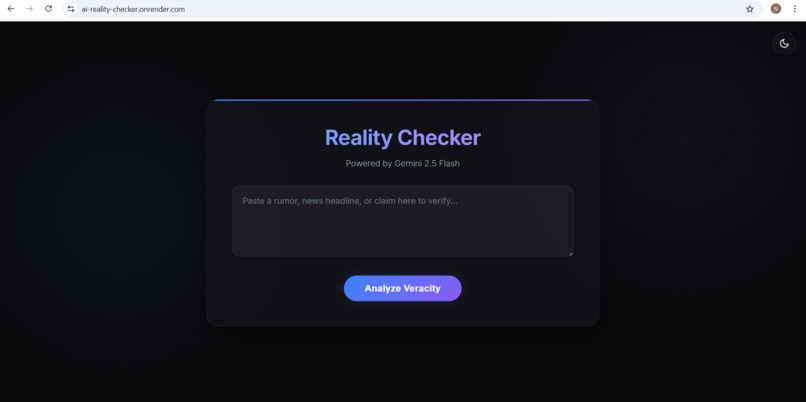

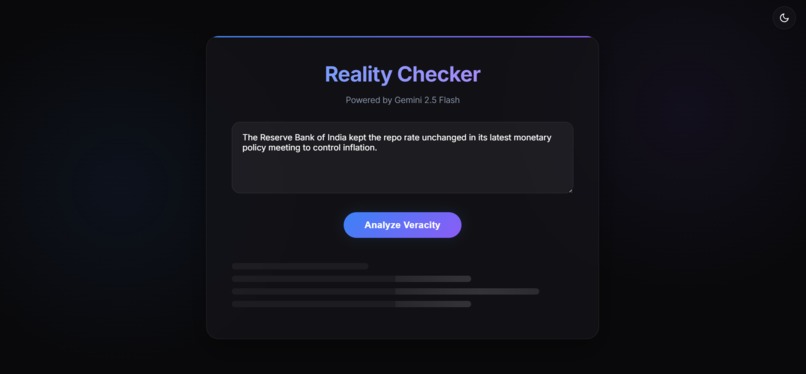

Gemini-inspired interface for real-time news credibility analysis. A minimal UI designed for fast input and instant AI-powered verification.

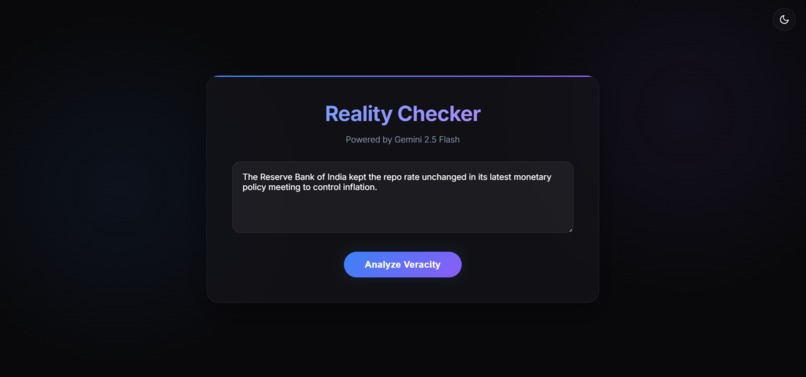

Paste any news headline or claim to analyze its authenticity.

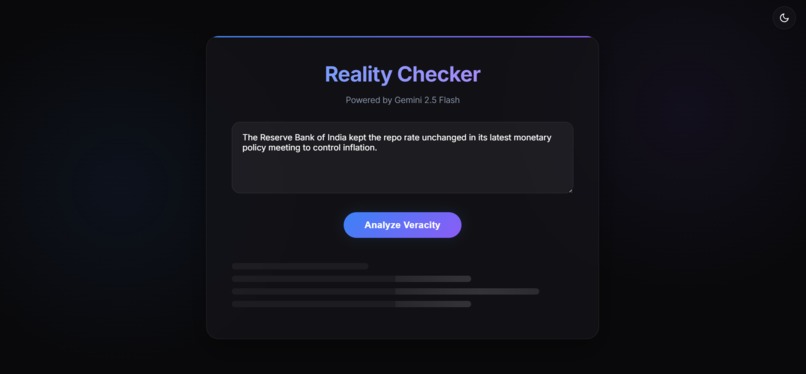

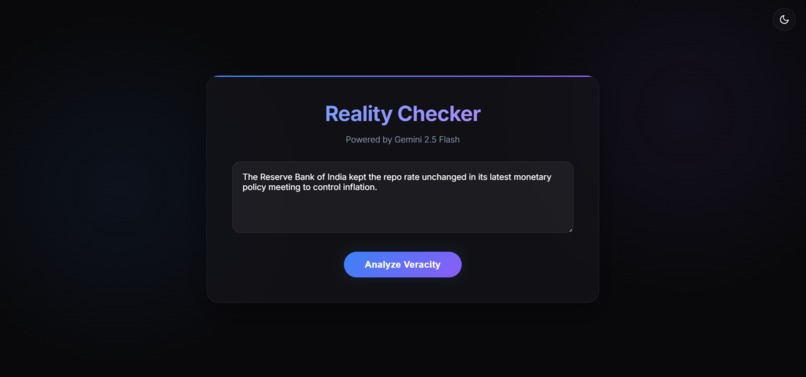

The system processes user input and prepares it for AI-based fact evaluation.

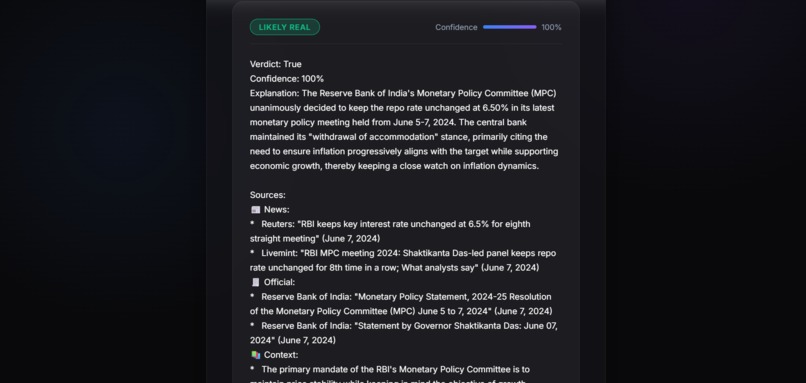

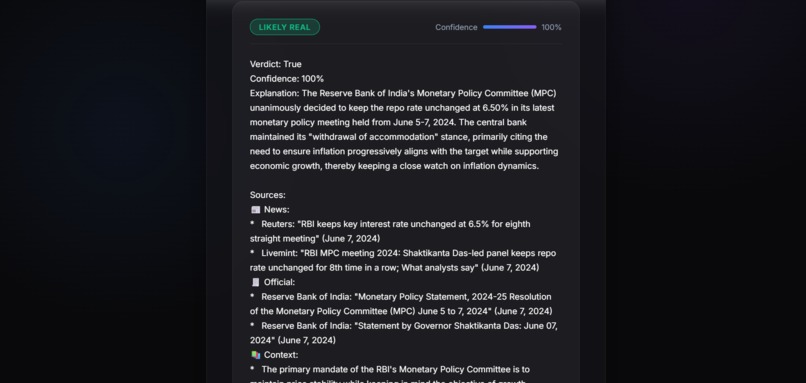

End-to-end user experience focused on clarity and trust. Users receive a structured verdict, an explanation, and confidence cues in a single view.

Inspiration

The inspiration for this project came from observing how quickly unverified information goes viral — often without context, nuance, or evidence. During global events, elections, or crises, a single misleading claim can shape opinions and create panic. As a student exploring AI and machine learning, I wanted to build something that demonstrates how generative AI can be used responsibly, not just creatively. Instead of generating content, this project focuses on analyzing, explaining, and contextualizing information — a use case that feels both timely and impactful.

What it does

The user enters a claim or statement. The backend sends a carefully designed prompt to Gemini. Gemini analyzes the claim and returns:

- Verdict (Likely True / Likely Fake / Uncertain)
- Confidence percentage
- Explanation (logical, neutral reasoning)
- Contextual sources

The frontend displays results using visual cues like badges, confidence bars, and structured cards. The UI was intentionally designed to resemble a calm, professional fact-checking tool rather than a flashy chatbot, reinforcing trust and usability.
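The flow above can be sketched in a few lines of Python. This is only an illustration, not the project's actual code: the prompt wording, the JSON reply format, and the names `PROMPT_TEMPLATE`, `Analysis`, `build_prompt`, and `parse_analysis` are all assumptions. The sketch shows one way to ask for the four fields and to fall back to "Uncertain" when the reply cannot be read.

```python
import json
from dataclasses import dataclass, field

ALLOWED_VERDICTS = ("Likely True", "Likely Fake", "Uncertain")

# Hypothetical prompt template; the project's real prompt is not shown.
PROMPT_TEMPLATE = (
    "You are a neutral fact-checking assistant.\n"
    "Assess the claim below and reply with JSON only, using the keys "
    '"verdict" (one of: Likely True, Likely Fake, Uncertain), '
    '"confidence" (integer 0-100), "explanation" (string), '
    'and "sources" (list of strings).\n\n'
    "Claim: {claim}"
)


@dataclass
class Analysis:
    verdict: str
    confidence: int
    explanation: str
    sources: list = field(default_factory=list)


def build_prompt(claim: str) -> str:
    """Insert the user's claim into the (assumed) prompt template."""
    return PROMPT_TEMPLATE.format(claim=claim.strip())


def parse_analysis(raw: str) -> Analysis:
    """Parse the model's reply; fall back to 'Uncertain' if it is unusable."""
    fallback = Analysis("Uncertain", 0, "The model reply could not be parsed.")
    # Take the outermost {...} so extra text around the JSON is ignored.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end < start:
        return fallback
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return fallback
    if not isinstance(data, dict):
        return fallback

    verdict = data.get("verdict")
    if verdict not in ALLOWED_VERDICTS:
        verdict = "Uncertain"

    try:
        confidence = int(data.get("confidence", 0))
    except (TypeError, ValueError):
        confidence = 0
    confidence = max(0, min(100, confidence))  # clamp to a valid percentage

    sources = data.get("sources") or []
    if not isinstance(sources, list):
        sources = [sources]

    return Analysis(
        verdict=verdict,
        confidence=confidence,
        explanation=str(data.get("explanation", "")),
        sources=[str(s) for s in sources],
    )
```

With a parsed `Analysis` in hand, the frontend can map `verdict` to a badge and `confidence` to the width of a confidence bar. Treating any malformed reply as "Uncertain" keeps the UI from showing a verdict the model never clearly gave.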

How we built it

The project was built as a full-stack web application with simplicity and clarity as core design principles.

Tech Stack

- Frontend: HTML, CSS (Gemini-inspired UI with glassmorphism and gradients)
- Backend: Python (Flask)
- AI Model: Google Gemini (Generative AI API)
- Styling Approach: Minimalist, modern, trust-focused UI

Challenges we ran into

Some of the main challenges included:

- API quota and configuration issues, especially while transitioning between different AI platforms.
- Ensuring the AI response was structured and consistent, rather than vague or overly verbose.
- Designing a UI that felt authoritative but approachable, without overwhelming the user with information.
- Balancing speed and accuracy while keeping the user experience smooth.

Each challenge pushed me to iterate, simplify, and think more critically about both engineering and design decisions.
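Quota errors like the ones mentioned above are commonly handled by retrying with exponential backoff. The sketch below is not taken from the project. `QuotaError` is a hypothetical stand-in for whatever rate-limit exception the API client raises, and `call_with_backoff` is an illustrative helper name.

```python
import time


class QuotaError(Exception):
    """Hypothetical stand-in for the client's rate-limit exception."""


def call_with_backoff(call, retries=3, base_delay=1.0, sleep=time.sleep):
    """Run call(), retrying on QuotaError with delays of 1x, 2x, 4x, ... base_delay.

    The final failure is re-raised so the caller can show an error state.
    """
    for attempt in range(retries + 1):
        try:
            return call()
        except QuotaError:
            if attempt == retries:
                raise
            sleep(base_delay * 2 ** attempt)
```

Passing `sleep` as a parameter makes the helper easy to test without real waiting. In a request handler, the retry count should stay small so the user is not left waiting on a stalled page.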

Accomplishments that we're proud of

- Successfully built a full-stack AI-powered web application from scratch, integrating a real-world generative AI model (Google Gemini) with a Flask backend and a clean frontend.
- Designed a structured and explainable AI response format (verdict, confidence score, explanation, and sources) instead of a generic chatbot output, making the tool more trustworthy and user-friendly.
- Overcame API configuration, quota limits, and integration errors by debugging real production-style issues rather than relying on mock data.
- Created a Gemini-inspired UI/UX that focuses on clarity, minimalism, and trust, showing that good design plays a crucial role in responsible AI tools.
- Learned and applied prompt engineering techniques to ensure neutral, balanced, and explainable AI responses rather than biased or sensational outputs.
- Built a project that addresses a real societal problem, misinformation, and shows how AI can be used responsibly for analysis and awareness rather than just content generation.
- Completed the project within hackathon constraints, balancing technical implementation, design, and documentation under time pressure.

What we learned

Working on this project taught me several valuable lessons:

- Prompt engineering matters: small changes in phrasing significantly affect the quality and neutrality of AI responses.
- UI/UX is as important as the model: how information is presented directly impacts user trust.
- AI responsibility: presenting uncertainty clearly is just as important as giving answers.
- Debugging real API integrations helped me better understand rate limits, API keys, and error handling.

This project helped bridge the gap between theoretical AI concepts and real-world application design.

What's next for AI Reality Checker

In the future, AI Reality Checker can be expanded to include:

- URL-based article verification
- Image + text misinformation analysis
- Multilingual fact-checking
- Browser extensions or mobile support

Built With

- css3

- flask

- git

- github

- google-cloud-console

- google-gemini-api

- google-generative-ai

- html5

- javascript

- jinja

- python

- visual-studio
