We use Gemini’s vision features to analyze the photo. It doesn't just detect that food is present: it identifies each ingredient and estimates how much of it there is.

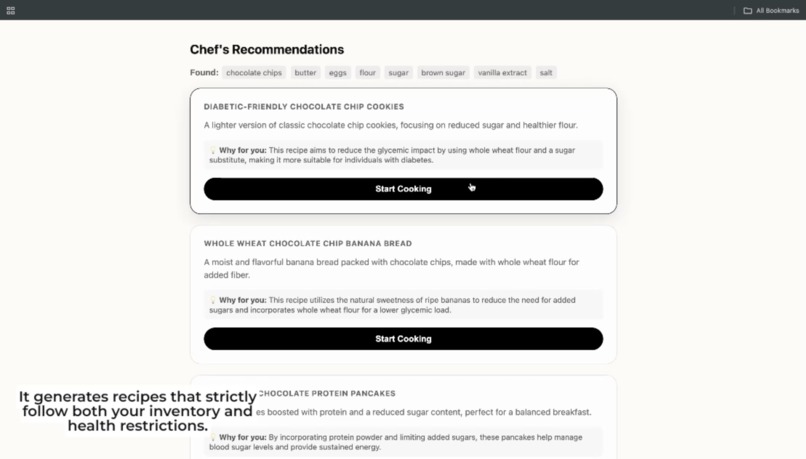

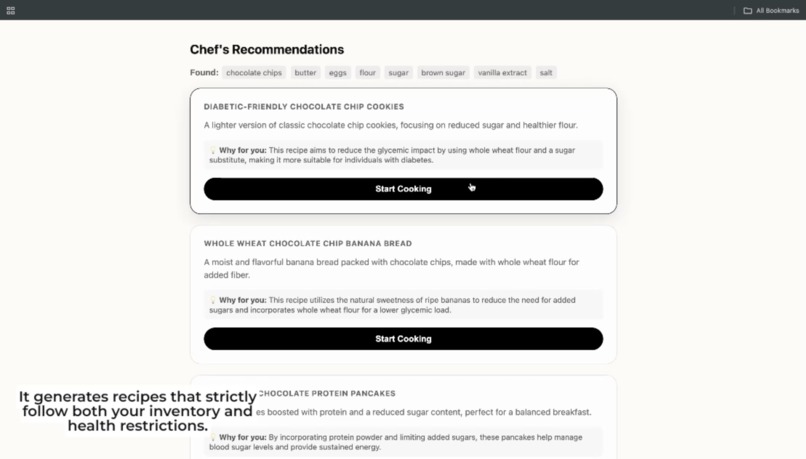

Using its reasoning capabilities, Gemini suggests recipes that use what you have while strictly following your health restrictions.

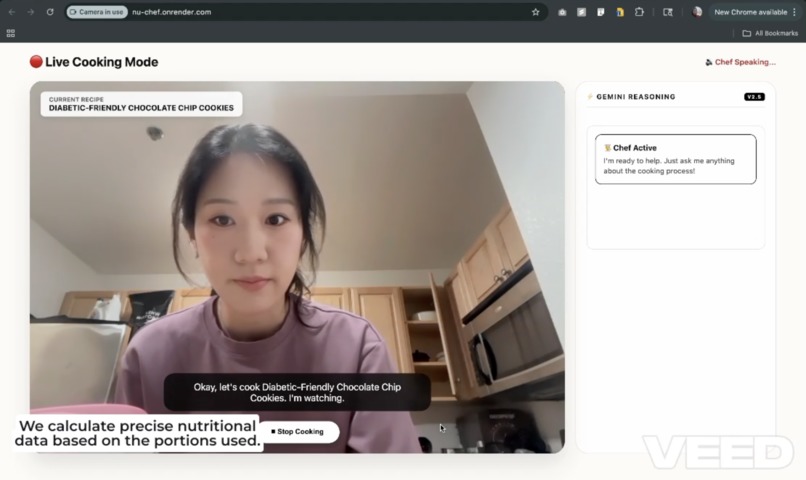

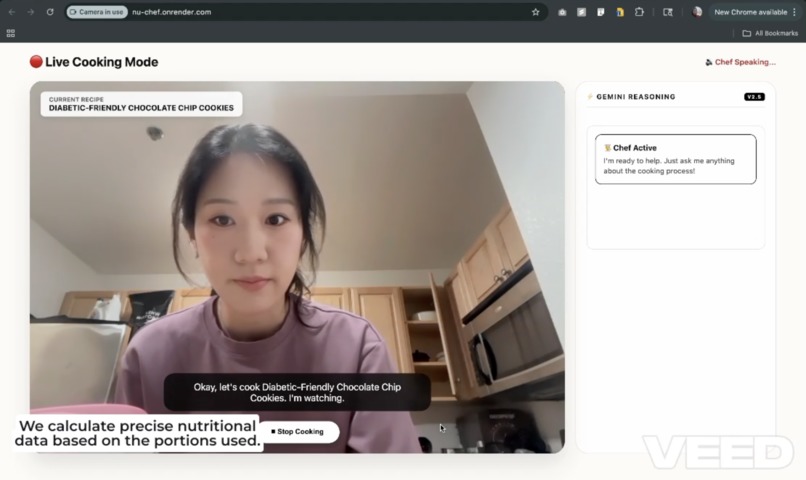

Instead of using generic numbers, Gemini calculates the calories based on the exact amount of ingredients used in that specific meal.

It selects recipes that maximize the use of detected ingredients while strictly adhering to dietary constraints.

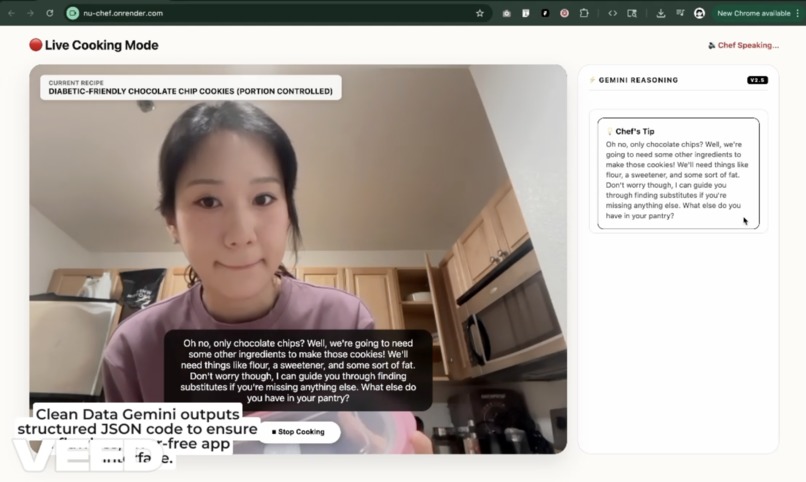

Once a recipe is selected, the final instruction set is generated in valid JSON, allowing the application to render steps and timers.

Inspiration

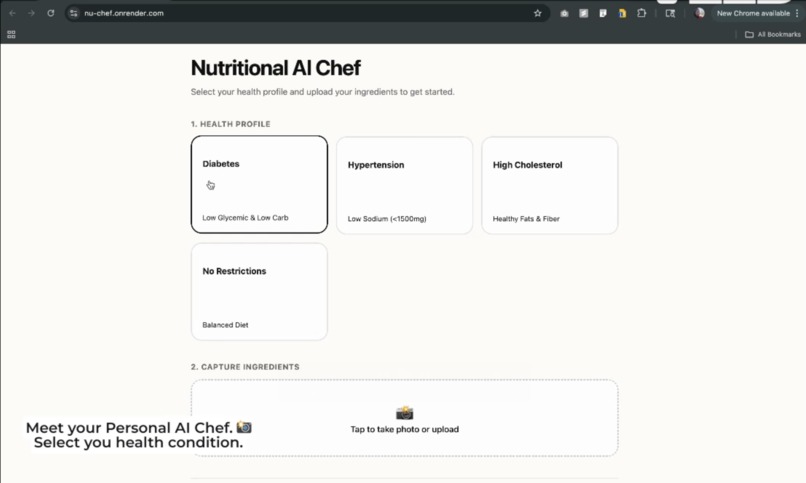

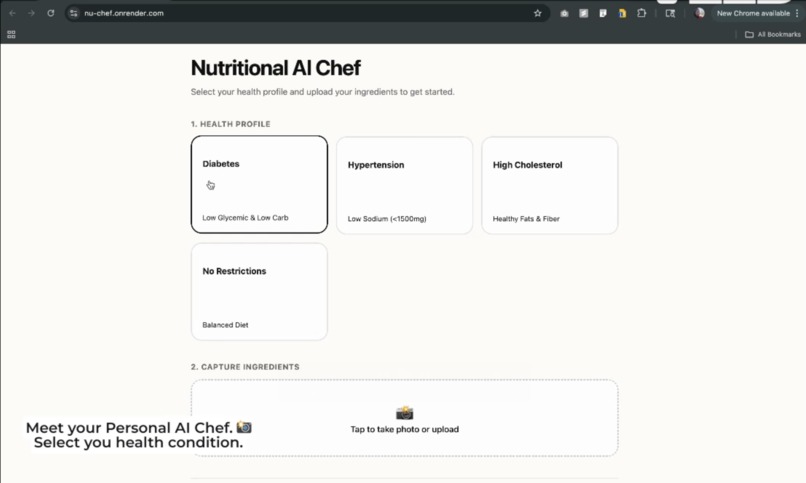

For millions of people living with chronic conditions like diabetes or hypertension, the simple question "What's for dinner?" is often a source of stress. Generic recipe apps are disconnected from reality. They don't know what's in your fridge, and they certainly don't account for specific dietary restrictions. We were inspired to build a bridge between the pantry and the user. We wanted to create an assistant that doesn't just find recipes but acts as a safety layer, ensuring that whatever you cook from the ingredients on hand is actually safe for your specific health profile. We wanted to move away from static databases and use the reasoning power of Gemini 3 to understand food contextually.

What it does

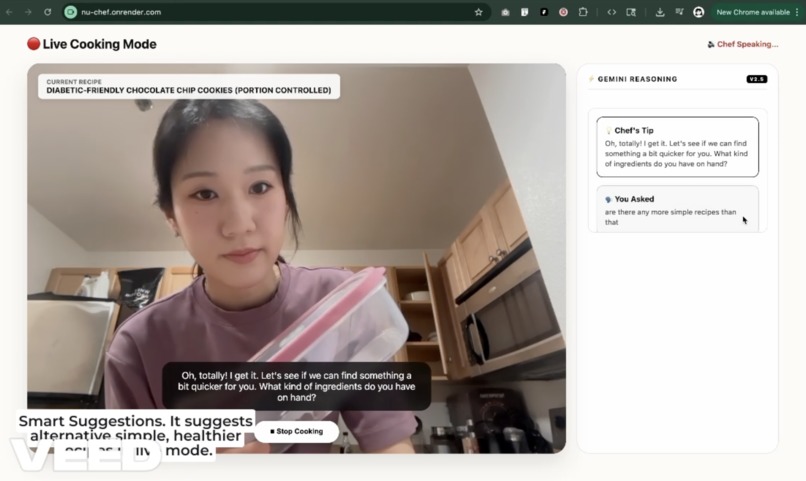

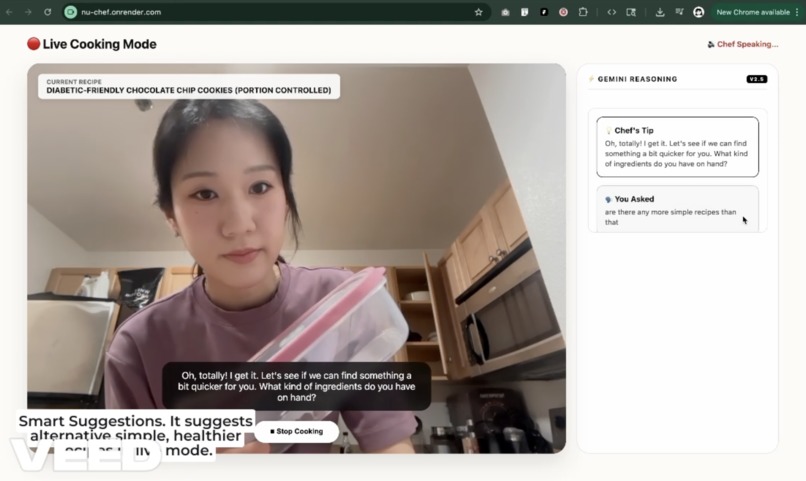

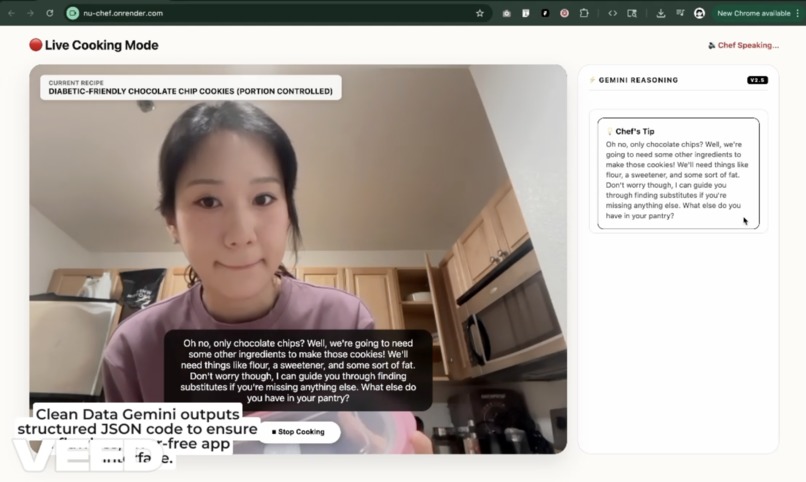

AI-Powered Adaptive Nutrition is a smart cooking assistant that turns a single photo of ingredients into a safe, personalized, and actionable meal plan.

- Visual Ingredient Analysis: Users snap a photo of their counter. The system identifies ingredients and, crucially, estimates quantities (e.g., distinguishing between a bag of flour and a cup of flour).
- Health-First Reasoning: It cross-references these ingredients against the user's health profile (e.g., diabetes).
- Natural Interaction: The workflow is triggered by the voice command "Hey Chef", simulating a real kitchen partner.
- Dynamic Calorie Calculation: It doesn't use generic database values. It calculates energy density dynamically based on the specific portion sizes generated for the recipe.

How we built it

We built the core logic around Gemini 3, leveraging its multimodal and reasoning capabilities to handle the heavy lifting.

- Multimodal Input (Vision): We use Gemini's vision capabilities to analyze user-uploaded images. We prompted the model not just for object detection, but for contextual awareness, estimating volume and state (fresh vs. cooked).
- Reasoning Engine: We implemented a "Health Guardrail" system. The prompt structure requires the model to reason through dietary constraints before generating the recipe.
- Dynamic Math: We tasked Gemini with performing the caloric math in real time:

  $$\text{TotalCalories} = \sum_{i} \left(\text{Ingredient}_{\text{mass},\,i} \times \text{EnergyDensity}_{\text{factor},\,i}\right)$$

  This ensures the nutritional info matches the exact recipe generated, rather than a generic average.
- Structured Output: We forced the final output into a strict JSON schema. This allows our frontend application to programmatically render step-by-step instructions, timers, and nutritional badges without parsing errors.
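As a rough illustration of the calorie formula above, here is a minimal Python sketch that sums mass times energy density over the portions in one recipe. In the project this arithmetic is delegated to Gemini; the `Portion` class, the `total_calories` helper, and the energy-density figures below are assumptions made for the example (approximate placeholder values, not data from the app).

```python
from dataclasses import dataclass


@dataclass
class Portion:
    """One ingredient as used in a specific recipe (hypothetical structure)."""
    name: str
    mass_g: float      # grams of the ingredient actually used
    kcal_per_g: float  # energy density; illustrative placeholder value


def total_calories(portions):
    """Sum mass x energy density over every ingredient in the recipe."""
    return sum(p.mass_g * p.kcal_per_g for p in portions)


# Placeholder numbers chosen only to make the arithmetic visible.
recipe = [
    Portion("chicken breast", 150.0, 1.65),
    Portion("cooked brown rice", 100.0, 1.12),
    Portion("olive oil", 10.0, 8.84),
]

print(round(total_calories(recipe)))  # 247.5 + 112.0 + 88.4 -> 448
```

A deterministic helper like this could also serve as a cross-check on the model's own total, since the same portions should always give the same sum.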

Challenges we ran into

- Live Video vs. Static Reality: Our original vision was a continuous, real-time video feed that identified ingredients as you panned across the kitchen. However, we realized that the latency and token overhead for processing live video streams compromised the immediate responsiveness we wanted. We had to pivot from a live stream to a "snap and analyze" workflow.
- The "Vibe Coding" Trade-off: We embraced a "vibe coding" approach, moving incredibly fast and using AI to generate large chunks of our frontend interface. While this accelerated the visual build, it created friction when integrating with the backend. We found that our rapidly generated UI code often clashed with the strict JSON structure returned by Gemini, forcing us to slow down and manually refactor our state management to handle the data correctly.
- Prompting for Precision: Getting the model to be a "creative chef" and a "strict data analyst" simultaneously was tough. Early on, the model would prioritize the conversational "vibe" over the math, leading to missing calorie counts or broken instruction steps until we implemented a multi-step reasoning chain.
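One way to surface the failures described above (missing calorie counts, broken steps, UI code that disagrees with the JSON) is to validate the model's reply before it reaches state management. The sketch below is an assumption about how that could look, not the project's actual code: the field names `title`, `total_calories`, `steps`, `text`, and `timer_seconds` are a hypothetical schema invented for the example.

```python
import json

# Hypothetical top-level keys; the project's real schema is not shown in the write-up.
REQUIRED_RECIPE_KEYS = {"title", "total_calories", "steps"}


def _is_number(value):
    """True for int/float but not bool (bool is a subclass of int in Python)."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def parse_recipe(raw):
    """Parse the model's reply and raise ValueError if the UI could not render it."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_RECIPE_KEYS - data.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    if not _is_number(data["total_calories"]):
        raise ValueError("total_calories must be a number")
    steps = data["steps"]
    if not isinstance(steps, list) or not steps:
        raise ValueError("steps must be a non-empty list")
    for index, step in enumerate(steps):
        if not isinstance(step, dict) or not isinstance(step.get("text"), str):
            raise ValueError(f"step {index} needs a 'text' string")
        timer = step.get("timer_seconds")  # optional per-step timer
        if timer is not None and (not _is_number(timer) or timer < 0):
            raise ValueError(f"step {index} has an invalid timer")
    return data


reply = '{"title": "Stir fry", "total_calories": 448, "steps": [{"text": "Heat the pan", "timer_seconds": 60}]}'
recipe = parse_recipe(reply)
print(recipe["steps"][0]["text"])  # Heat the pan
```

Failing at this boundary means a malformed reply can trigger a retry instead of a half-rendered screen.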

Accomplishments that we're proud of

- The "Health Safety" Filter: We got Gemini to reject delicious-sounding recipes when they violated the user's health constraints (e.g., rejecting a high-sodium soy sauce marinade for a user with high blood pressure).
- Seamless Photo-to-JSON Pipeline: We achieved a consistent output format, so the app can render a UI directly from the AI's response without breaking.
- Real-time Adaptation: The "Hey Chef" interaction feels incredibly fluid and natural.
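The filter described above lives in the prompt, where Gemini reasons about the constraints itself. As a complementary idea, the sketch below shows what a deterministic post-check on a generated recipe could look like. Everything in it is an assumption for illustration: the `LIMITS` table, the nutrient keys, and especially the threshold numbers, which are placeholders and not medical guidance.

```python
# Hypothetical per-serving limits keyed by condition. The numbers are
# placeholders for the example only; real limits need clinical input.
LIMITS = {
    "hypertension": {"sodium_mg": 600},
    "diabetes": {"added_sugar_g": 10},
}


def violations(nutrition, conditions):
    """Return one message per nutrient that exceeds a limit for the user's conditions."""
    found = []
    for condition in conditions:
        for nutrient, limit in LIMITS.get(condition, {}).items():
            value = nutrition.get(nutrient, 0)
            if value > limit:
                found.append(f"{condition}: {nutrient} {value} > {limit}")
    return found


# A soy sauce marinade with made-up nutrition values.
marinade = {"sodium_mg": 1400, "added_sugar_g": 4}

print(violations(marinade, ["hypertension"]))  # ['hypertension: sodium_mg 1400 > 600']
print(violations(marinade, ["diabetes"]))      # []
```

An empty list means the recipe passes this check; a non-empty one could be fed back to the model as the reason for asking for an alternative.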

What we learned

- Multimodal is more than just "seeing": We learned that Gemini 3 can infer context (like a bag being half-empty), which is crucial for cooking.
- Prompt Engineering for Safety: We learned that defining "constraints" is often more powerful than defining "goals" when dealing with health data.
- Structure vs. Creativity: We found a balance between letting the AI be creative with flavors and keeping it rigid about data structure.

What's next for AI-Powered Adaptive Nutrition & Recipe Assistant

- Pantry Memory: Implementing a database that remembers what you bought so you don't have to photograph it every time.
- IoT Integration: Connecting directly to smart ovens to automatically set temperatures based on the generated JSON instructions.
- Wearable Sync: Syncing the calculated nutritional data directly to health apps (like Google Fit or Apple Health) to close the loop on daily tracking.
