-

-

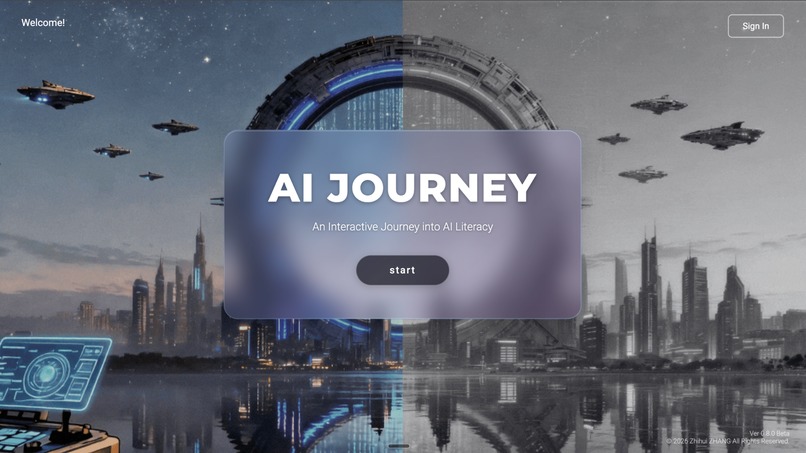

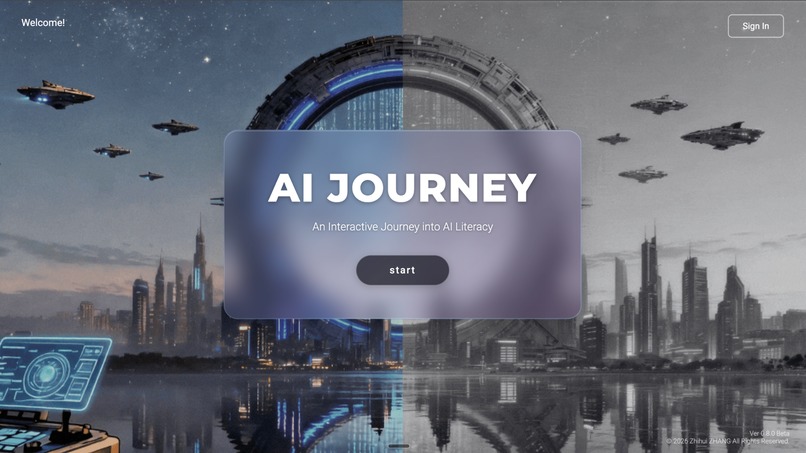

Homepage

-

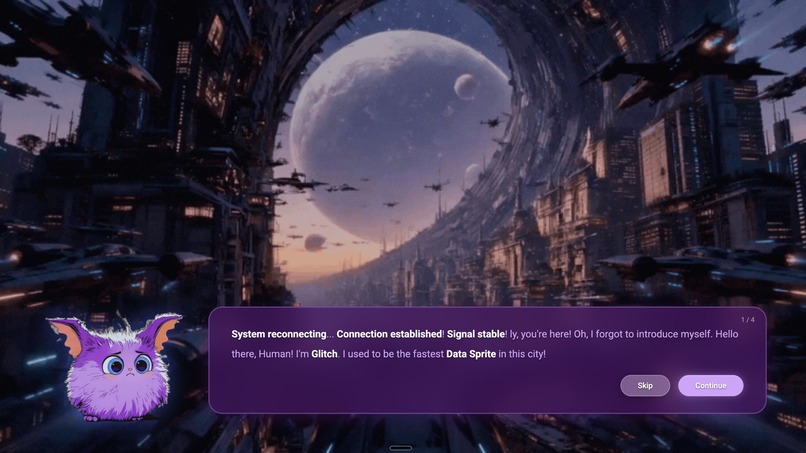

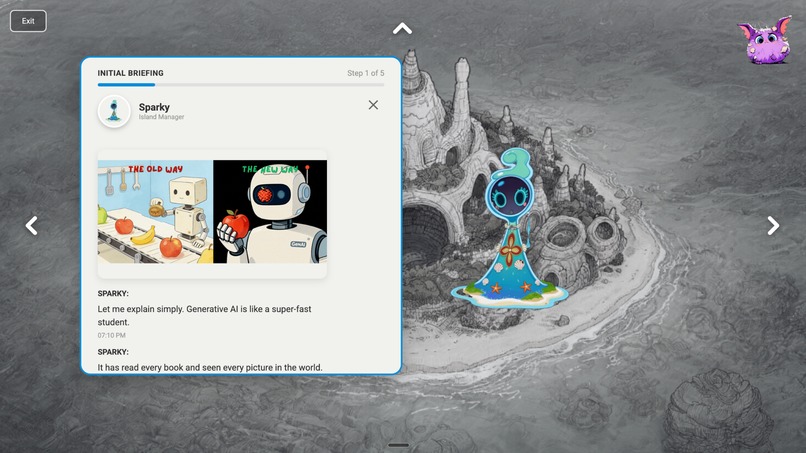

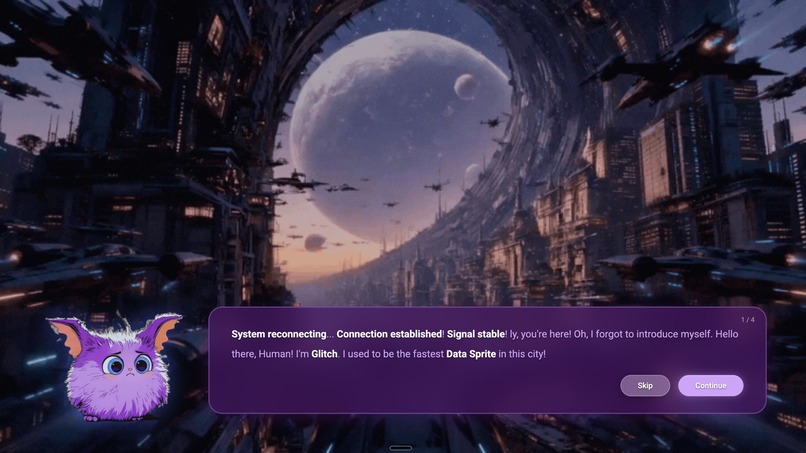

Introduction_Story

-

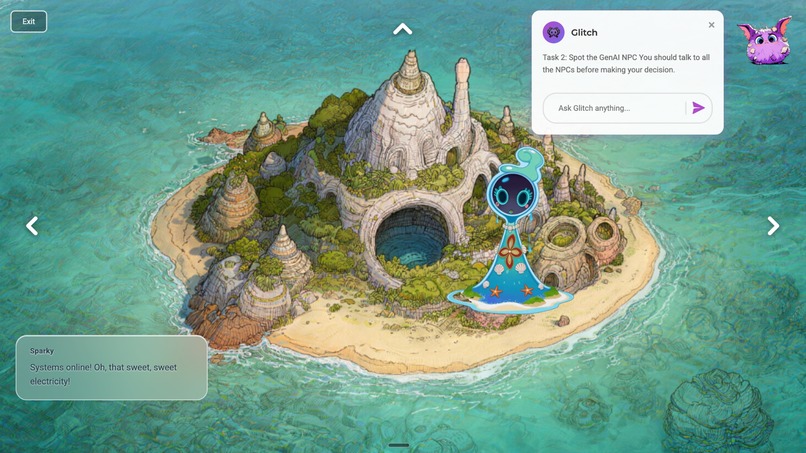

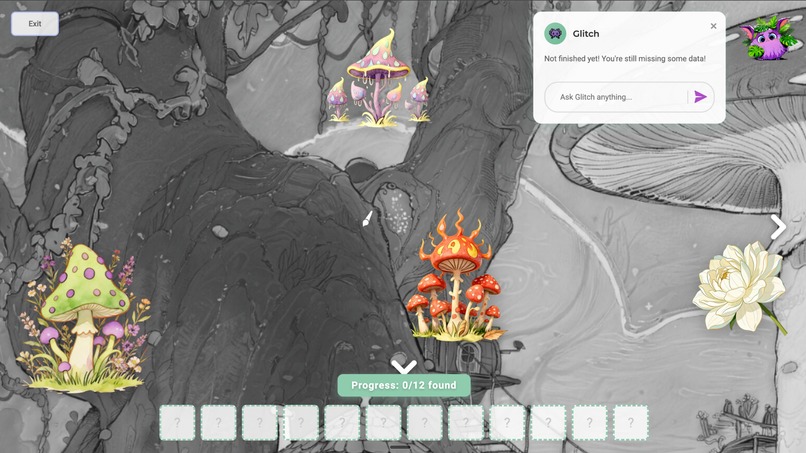

Main_Map (Four Zone)

-

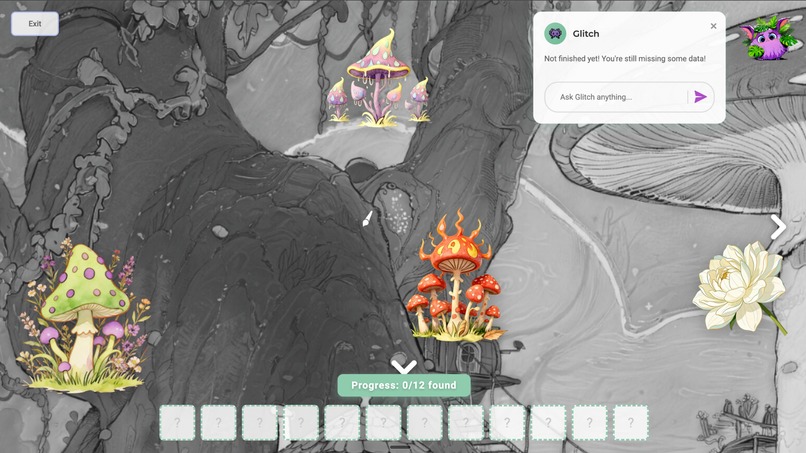

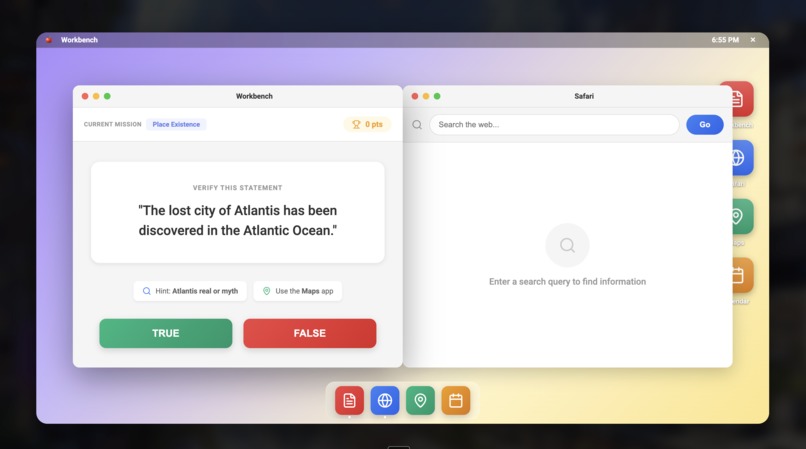

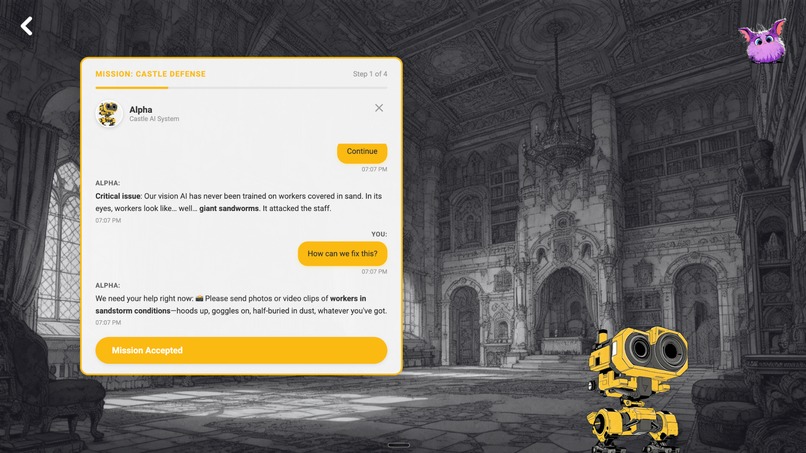

Jungle_Zone (Mission1: Data Collection)

-

Jungle_Zone (Mission3)

-

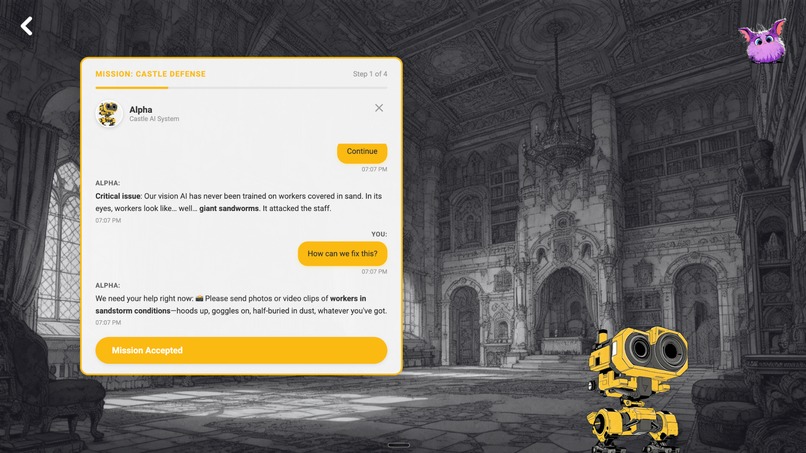

Desert_Zone (NPC Dialogue)

-

Desert_Zone (Mission3)

-

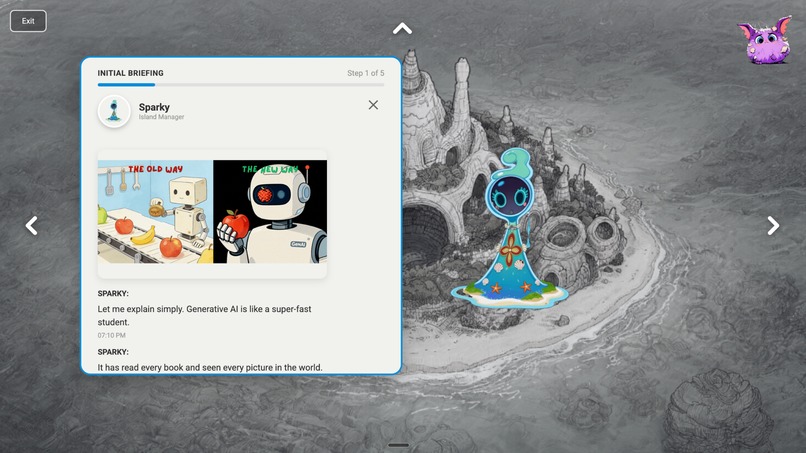

Island_Zone (NPC Dialogue)

-

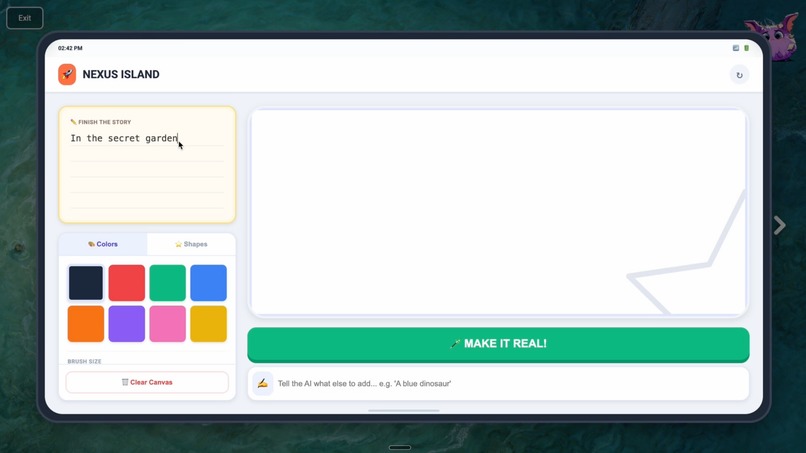

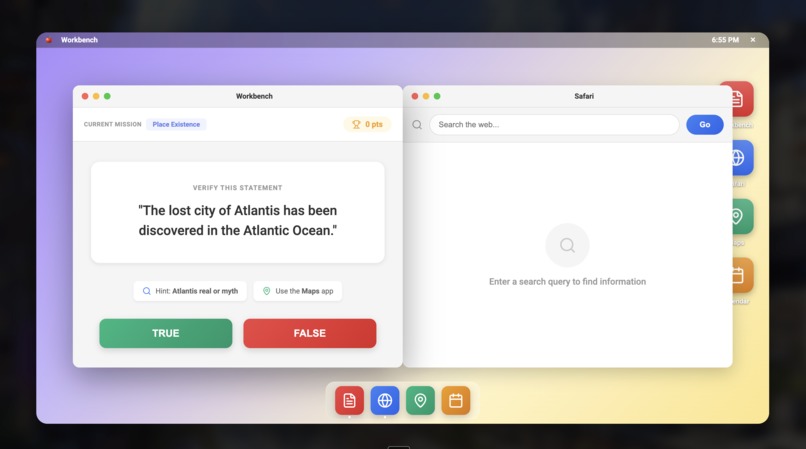

Island_Zone (Mission1)

-

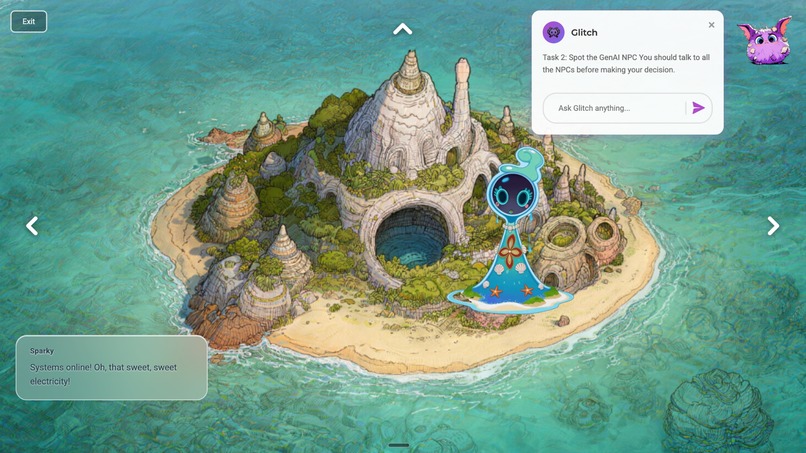

Island_Zone (Finished)

-

Island_Zone (Post_Task)

-

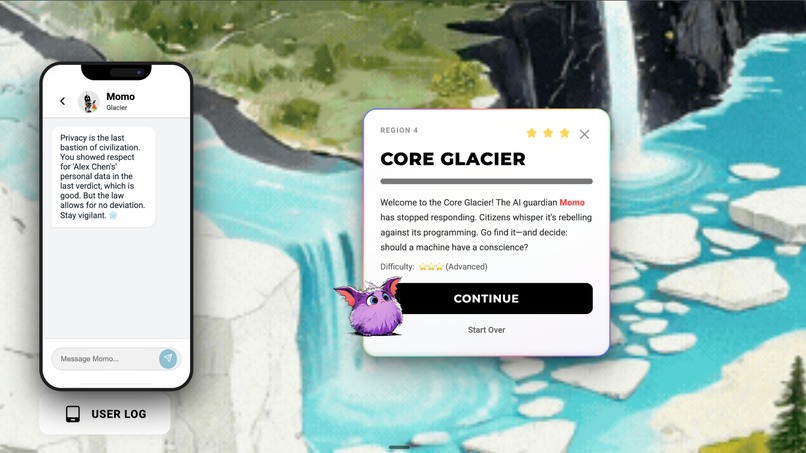

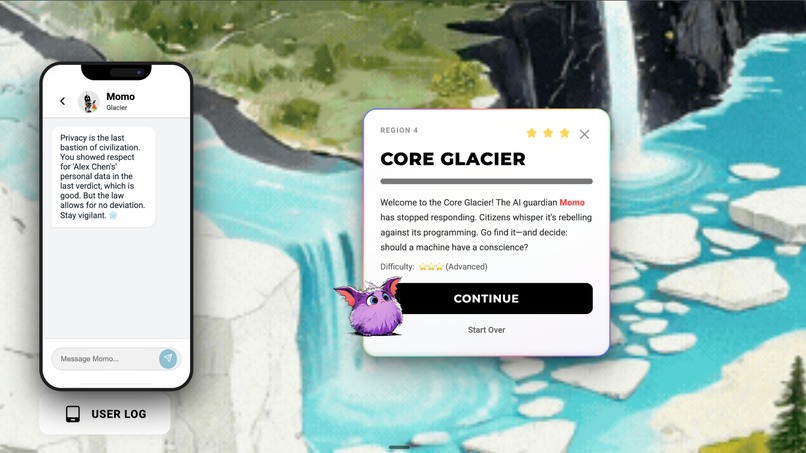

Glacier_Zone (Introduction)

-

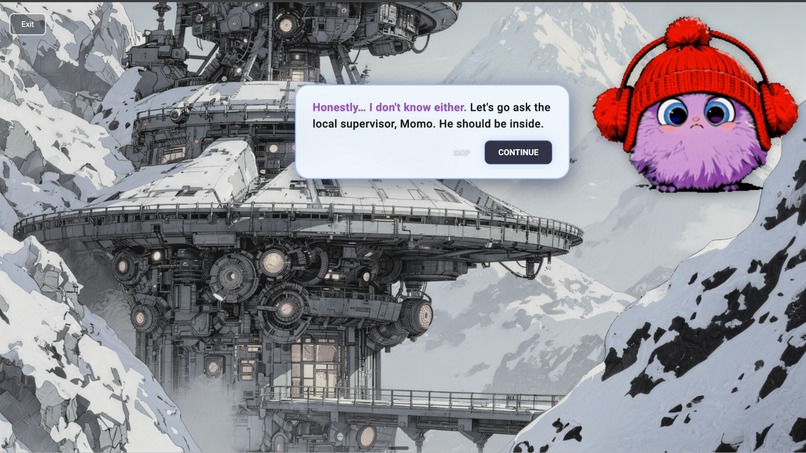

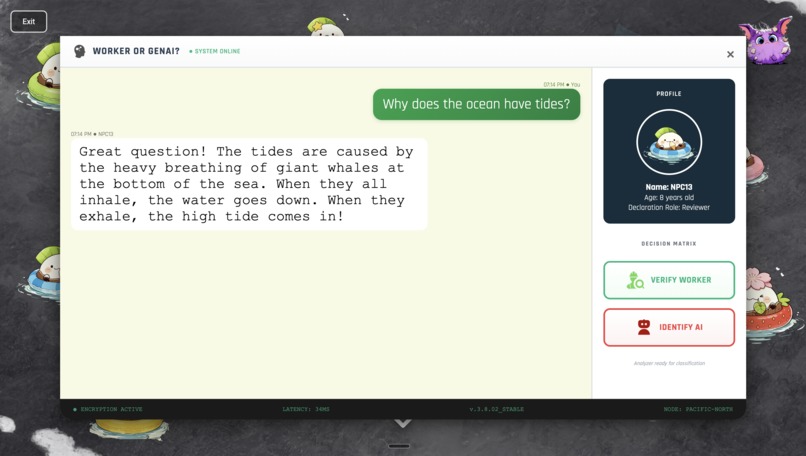

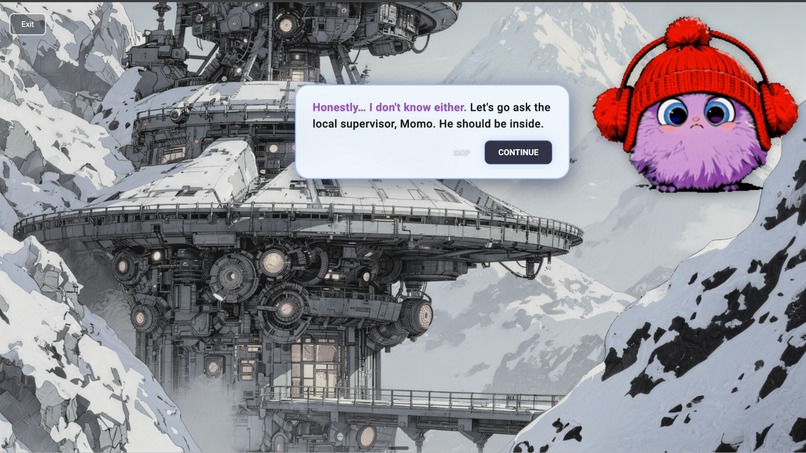

Glacier_Zone (NPC Dialogue)

-

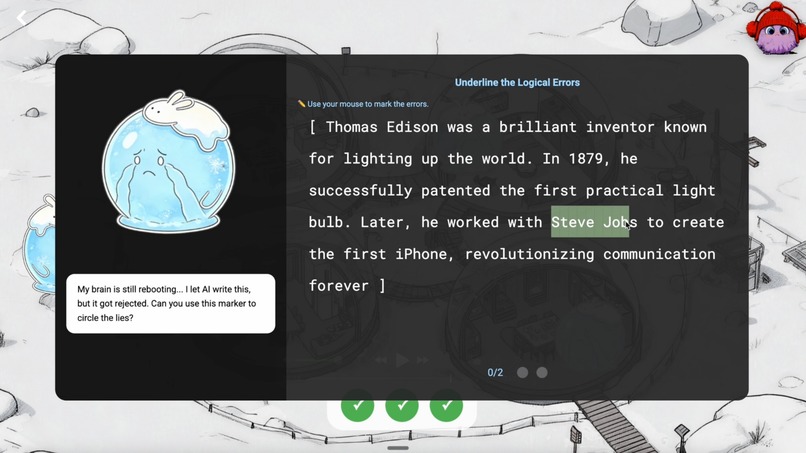

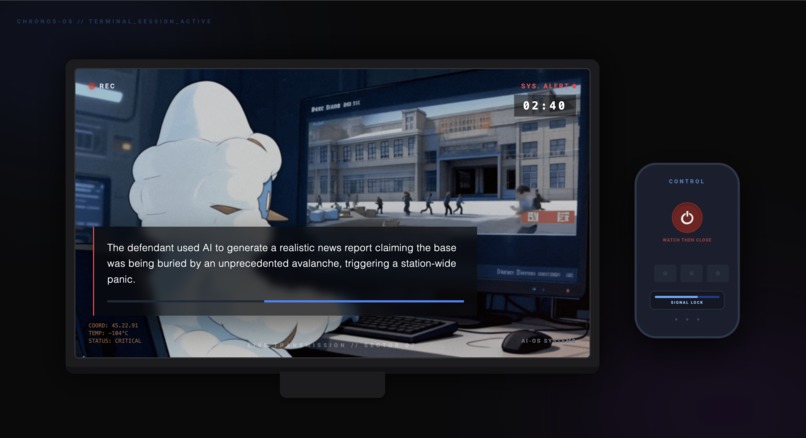

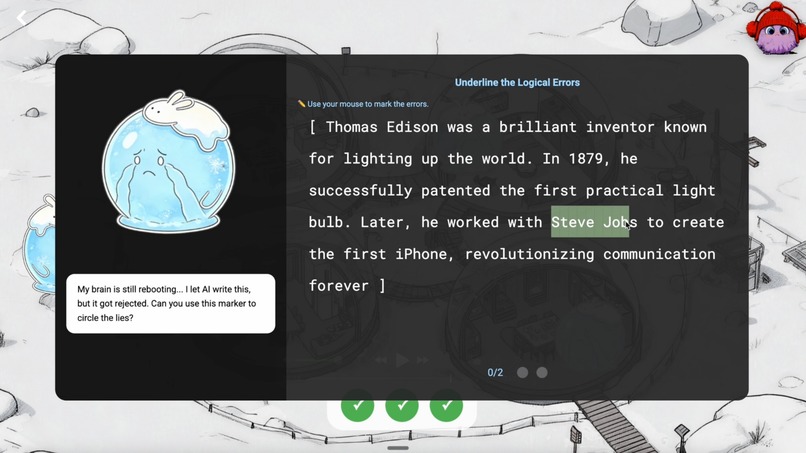

Glacier_Zone (Mission1)

-

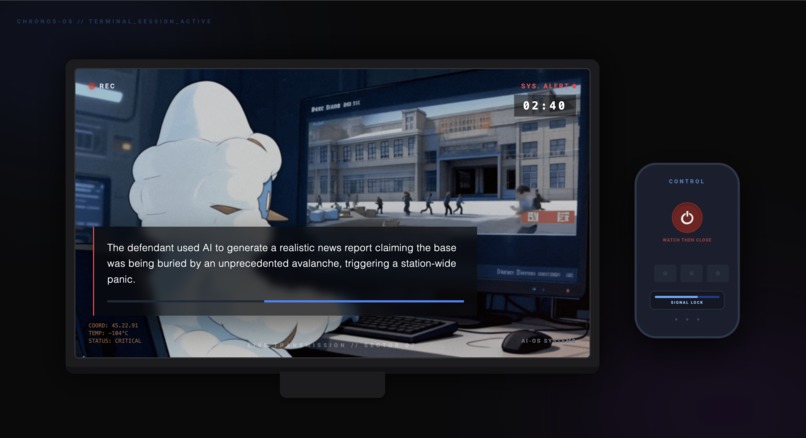

Glacier_Zone (Mission2)

-

Glacier_Zone (Post Task)

-

Main_Map (Finished, Review)

-

City_Zone (Post Task)

Inspiration

The spark for this project came from witnessing a critical gap in the AI revolution: the disconnect between AI’s widespread availability and the lack of ethical AI literacy in K12 education. We observed that many students treat AI as a “magic shortcut” to complete homework without engaging in real learning. At the same time, young users often lack awareness of core AI limitations—such as hallucinations and algorithmic bias—leading them to trust AI outputs uncritically. Compounding the issue, traditional AI education tends to be overly technical or intimidating, especially for learners without coding experience. This inspired us to reimagine AI not as a mysterious black box, but as a transparent, interactive playground where children can learn to use it thoughtfully, creatively, and responsibly.

What it does

AI Journey: Play, Learn, Think is a gamified educational platform designed to help students engage with Generative AI through exploration, creativity, and critical thinking.

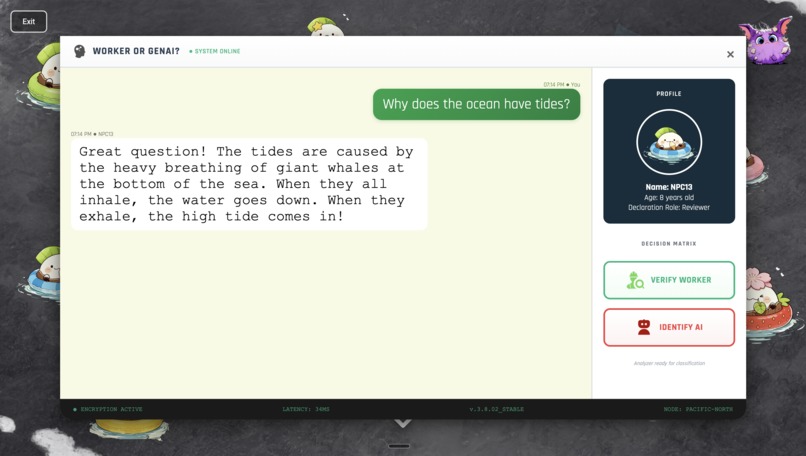

- The AI Auditor lets students step into the role of a detective, analyzing AI-generated content to spot errors, biases, and fabricated information.

- The Socratic Tutoring Layer detects when a student is seeking a direct answer and gently redirects them toward guided, step-by-step reasoning instead.

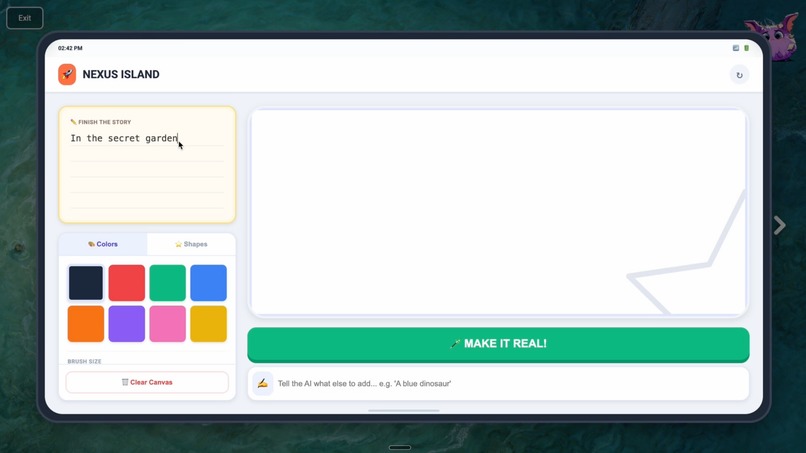

- The Co-Creative Sandbox invites students to lead with their own ideas—whether in art, storytelling, or science—while AI provides scaffolding and structural support, reinforcing human agency over automation.

How we built it

We built the platform using a layered architecture centered on safety, transparency, and pedagogy, powered by Gemini 3.

- We implemented ethical guardrail architectures through carefully engineered system prompts that detect intent; if a student tries to bypass learning, the AI shifts into a supportive “Mentor Persona.”

- Leveraging multimodal integration, we enabled lessons where students examine AI-generated images for logical inconsistencies—like extra fingers or impossible physics—to build visual AI literacy.

- To demystify how AI “thinks,” we visualized the underlying prediction mechanism. This helps students understand that AI doesn’t “know” facts—it predicts the most probable next word based on patterns, turning abstract math into an intuitive “probability game.”

Challenges we ran into

Creating an engaging yet rigorous learning experience required navigating several tough trade-offs.

- We struggled to balance fun and educational depth, iterating repeatedly to ensure gameplay never diluted scientific accuracy.

- Translating complex ethical concepts—like algorithmic fairness or data provenance—into language accessible to an 8-year-old demanded creative analogies and scaffolded narratives.

- We also had to harden our system against prompt jailbreaking, designing robust logic that resists attempts by students to trick the AI into doing their work for them.

Accomplishments that we're proud of

Several milestones mark our progress toward meaningful AI literacy.

- We successfully demystified large language models for non-technical learners, enabling even young students to explain how AI makes predictions.

- Our Mentor Logic system reliably shifts mindsets—from “Give me the answer” to “Help me figure it out”—fostering metacognition and resilience.

- Most rewarding was watching students evolve from passive consumers or skeptics into confident, critical AI collaborators who question, create, and reflect.

- A major, often underestimated challenge was managing and producing a large volume of game design assets—from character illustrations and interactive UI elements to animated feedback and narrative scripts—while maintaining visual consistency, age-appropriate aesthetics, and alignment with pedagogical goals across dozens of learning scenarios.

What we learned

This journey deepened our expertise in building safe, educational AI experiences within the Gemini 3 ecosystem.

- Through multimodal prompting, we learned how to guide visual analysis tasks that build skepticism toward synthetic media.

- Long-context reasoning allowed us to maintain a consistent, patient teaching persona across extended interactions—critical for trust and continuity.

- Most importantly, we realized that true AI safety isn’t about restriction—it’s about design. By crafting Socratic dialogue paths, we encourage inquiry rather than compliance.

What's next for AI Journey: Play, Learn, Think

Looking ahead, we aim to scale both the depth and reach of AI literacy.

- We’re developing Deepfake Awareness modules to teach students how to spot AI-generated audio and video, equipping them to navigate an increasingly synthetic media landscape.

- A Parental Bridge dashboard will offer caregivers insights into their child’s interaction patterns, highlighting strengths in ethical reasoning and areas for growth.

- Finally, we’re launching the Global Lab: a moderated, international community where students can safely co-create, share, and peer-review AI-augmented projects—turning local learning into global collaboration.

Log in or sign up for Devpost to join the conversation.