Scrap children’s motorcycle that will be turned into the robot

Prototype of the water management tank

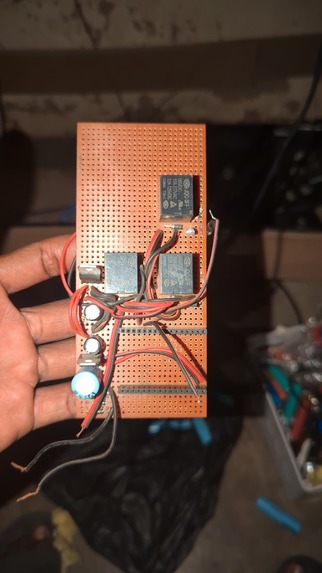

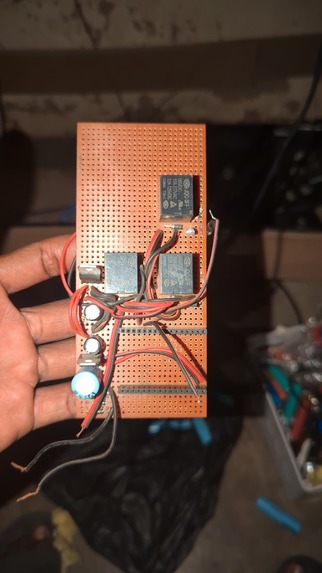

The relay board

NodeMCU

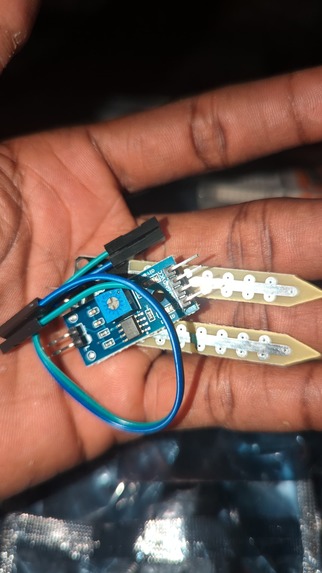

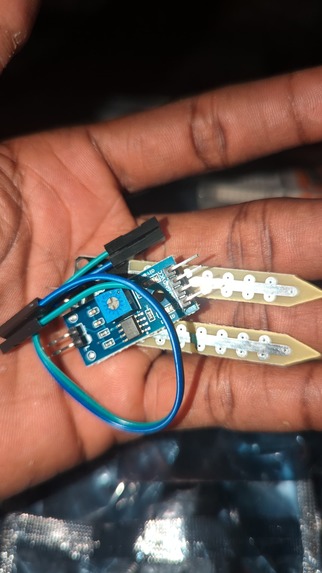

Soil moisture sensor

Temperature sensor

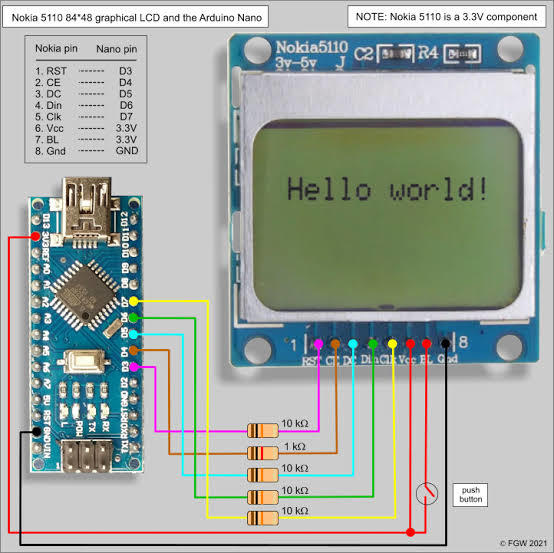

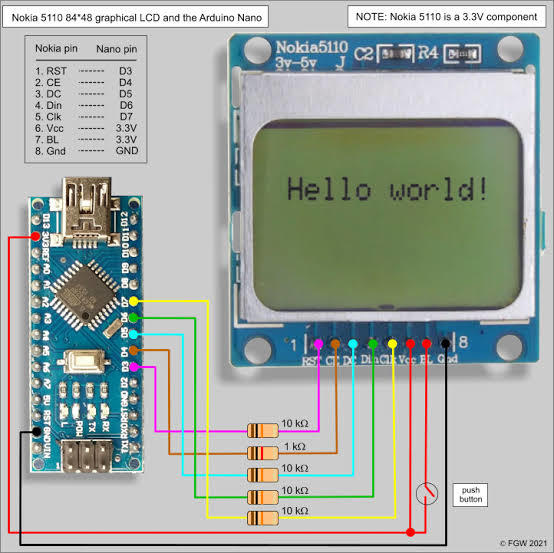

Nokia screen for displaying output from the robot

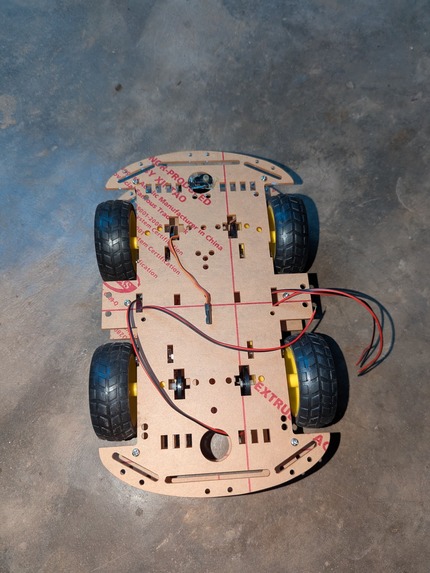

Prototype robot that will be used to test all our sensors

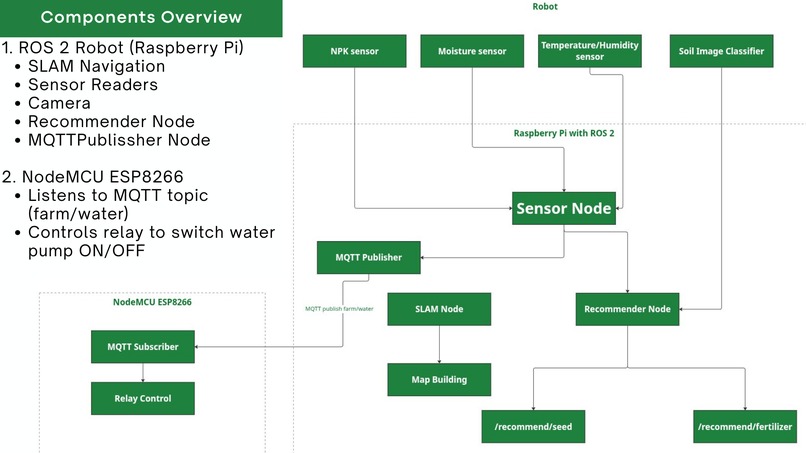

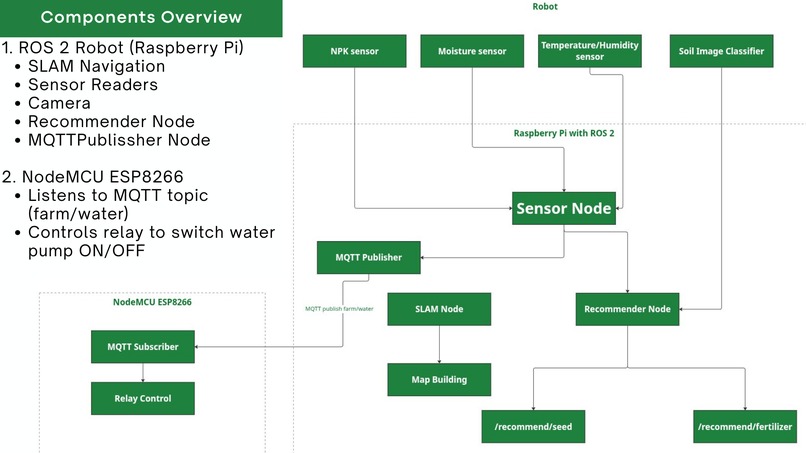

System architecture design

Meeting session

Project showcasing

Inspiration

Our team was driven by the daily struggles of smallholder farmers in Nigeria, hardworking individuals who often lack access to timely and affordable guidance. We witnessed firsthand how poor soil management, inadequate fertilizer use, and inefficient crop selection lead to low yields and wasted resources.

A study published in Discover Sustainability (September 2024) analyzed data from Nigerian smallholder households and found that effective soil management practices, such as proper soil testing and nutrient management, can significantly boost crop yields. In contrast, low adoption of these practices has been linked to stagnating productivity.

Source: Osabohien, R., Jaaffar, A.H., Matthew, O. et al. Soil management practice and smallholder agricultural productivity in Nigeria. Discov Sustain 5, 283 (2024).

This evidence fueled our mission to develop AgroBot AI, an affordable, autonomous system that brings expert-level agronomic recommendations directly to farmers without needing labs or internet.

What it does

AgroBot AI is an autonomous, AI-powered robot that performs on-the-spot soil testing and provides instant, tailored recommendations for:

- Seed selection

- Fertilizer use

- Water management

It uses sensors and a built-in recommendation engine to guide farmers on what to plant, how to feed the soil, and when to irrigate, all based on real-time soil conditions.

How we built it

We started by repurposing parts from a scrap children’s motorcycle to build the robot’s mobile base. This gave us a durable and affordable frame to work with.

Key components integrated into the system include:

- NPK Sensor – for measuring soil nutrient content (Nitrogen, Phosphorus, Potassium)

- Soil Moisture Sensor – to determine water content in the soil

- Temperature Sensor – to monitor soil and ambient temperature

- DC Motors & Stepper Motor – for movement and sensor deployment

- L298N Motor Driver – to control the motors

- Relay & Water Pump – to simulate or deliver precision irrigation

- Battery Pack – for powering the robot in the field

- Arduino Uno & NodeMCU – for sensor control and real-time data collection

- ESP32-CAM – to enable camera-based feedback or remote monitoring

- Raspberry Pi – acts as the brain of the system, coordinating input/output and running the recommendation engine

On the software side, we programmed the robot using Python (on Raspberry Pi) and C++ (for microcontroller firmware on Arduino and NodeMCU). These two languages allowed us to manage both high-level logic and low-level sensor control efficiently.

We developed a query-based recommendation engine using pandas and NumPy. The engine compares real-time soil data to a pre-collected dataset and uses Euclidean distance to find either an exact or closest match. Based on this match, the system recommends:

- The most suitable crop type

- The ideal fertilizer name

- Whether the soil requires watering or not
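The matching step described above could look roughly like the sketch below. It is only an illustration: the column names, the placeholder rows, the fertilizer labels, and the `MOISTURE_THRESHOLD` cutoff are all assumptions made for the example, not the project's actual dataset or rules.

```python
import numpy as np
import pandas as pd

# Hypothetical reference dataset: placeholder values, not real agronomic data.
reference = pd.DataFrame({
    "N": [90, 20, 60],
    "P": [42, 67, 55],
    "K": [43, 20, 44],
    "moisture": [30.0, 55.0, 20.0],
    "temperature": [25.0, 22.0, 30.0],
    "crop": ["maize", "rice", "cassava"],
    "fertilizer": ["fertilizer_a", "fertilizer_b", "fertilizer_c"],
})
FEATURES = ["N", "P", "K", "moisture", "temperature"]
MOISTURE_THRESHOLD = 25.0  # assumed cutoff (%) below which watering is advised


def recommend(reading: dict, data: pd.DataFrame = reference) -> dict:
    """Return the recommendation of the reference row closest to the reading."""
    query = np.array([reading[f] for f in FEATURES], dtype=float)
    points = data[FEATURES].to_numpy(dtype=float)
    # Euclidean distance from the live reading to every stored row.
    distances = np.linalg.norm(points - query, axis=1)
    best = int(np.argmin(distances))
    row = data.iloc[best]
    return {
        "crop": row["crop"],
        "fertilizer": row["fertilizer"],
        "needs_water": bool(reading["moisture"] < MOISTURE_THRESHOLD),
        "distance": float(distances[best]),
        "exact_match": bool(distances[best] == 0.0),
    }


if __name__ == "__main__":
    sample = {"N": 88, "P": 40, "K": 45, "moisture": 28, "temperature": 26}
    print(recommend(sample))
```

Because everything runs on in-memory arrays, a lookup like this needs no network access, which fits the offline requirement.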

All recommendations are generated locally, meaning farmers can access them in real-time without needing internet or external servers.

For the UI, we used Streamlit to build a simple, farmer-friendly interface. Users can manually input soil parameters (or use readings from the robot), and the app returns tailored recommendations instantly. The system can also run headlessly in the field when needed.

By combining low-cost hardware with intelligent software, we’ve built a practical and scalable solution for real-time, AI-driven soil analysis and crop advisory—customized for the needs of rural farmers.

Challenges we ran into

Building AgroBot AI came with a mix of technical and practical challenges. One of the first hurdles was repurposing parts from a used children’s motorcycle: we had to carefully adapt it to carry sensors, wiring, and control boards without compromising mobility or balance.

Integrating multiple hardware components (Arduino, NodeMCU, Raspberry Pi, ESP32-CAM, sensors, and motor drivers) proved tricky due to power supply fluctuations, timing issues, and communication conflicts between devices. Ensuring accurate data collection from the NPK and moisture sensors also required calibration in varying soil types.

On the software side, combining data from multiple sources and aligning them with our custom recommendation engine involved careful data cleaning and feature encoding. We also faced issues distinguishing between exact and close matches during the query process, which affected the quality of recommendations early on.
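One way the exact-versus-close distinction could be made explicit is to scale every feature to a common range and then label the nearest row by its distance. The sketch below assumes min-max scaling and two made-up thresholds (`tolerance`, `max_close`); the function name and all values are hypothetical, not the project's actual logic.

```python
import numpy as np


def classify_match(query, candidates, tolerance=1e-9, max_close=0.15):
    """Label the nearest candidate row as 'exact', 'close', or 'none'."""
    candidates = np.asarray(candidates, dtype=float)
    query = np.asarray(query, dtype=float)

    # Min-max scale each feature so large-valued columns do not dominate.
    lo = candidates.min(axis=0)
    span = candidates.max(axis=0) - lo
    span[span == 0] = 1.0  # avoid division by zero for constant columns
    scaled_candidates = (candidates - lo) / span
    scaled_query = (query - lo) / span

    distances = np.linalg.norm(scaled_candidates - scaled_query, axis=1)
    # Divide by sqrt(n_features) so thresholds do not depend on feature count.
    distances = distances / np.sqrt(candidates.shape[1])

    idx = int(np.argmin(distances))
    d = float(distances[idx])
    if d <= tolerance:
        label = "exact"
    elif d <= max_close:
        label = "close"
    else:
        label = "none"  # too far from every stored row to trust
    return idx, label, d


if __name__ == "__main__":
    rows = [[90, 42, 43], [20, 67, 20], [60, 55, 44]]  # placeholder N, P, K rows
    print(classify_match([90, 42, 43], rows))
    print(classify_match([88, 41, 43], rows))
```

Returning a "none" label gives the caller a way to decline a recommendation instead of reporting a poor match as if it were reliable.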

New Challenges We Faced in AgroBot AI

- Battery Pack Instability: Initially, we constructed a 12V battery pack using multiple 3.7V lithium-ion cells (100A) with a BMS but without a balancer. The pack kept bursting after re-celling because some cells had lower capacity than others. These weaker cells would reach full charge earlier, leading to overcharging and damage.

Solution: We integrated a cell balancer to equalize voltage levels across all cells during both charging and discharging, preventing overcharging and extending battery life.

- Steering System Misalignment: We used a car rear-window wiper motor to turn the robot’s front wheels. However, the mechanism jammed frequently. We discovered that the wiper’s output motion is not perfectly linear, while the robot’s steering hook requires straight-line motion.

Solution: We developed a smart motion linkage that compensates for this mismatch, ensuring smooth steering without jamming.

- Motor Speed Control Limitations: The rear wheels were controlled using an H-bridge relay system, but we realized that H-bridge relays can only switch direction and on/off states, not control speed. As a result, the robot always moved at full speed, making precise control difficult.

Solution: We introduced a step-down buck converter to regulate the voltage and reduce motor speed, allowing the robot to move more slowly and stably.

- Computational Limitations with ROS 2: Our initial plan was to run ROS 2 on a Raspberry Pi Zero, but we quickly realized that the Pi Zero lacked the processing power required for real-time AI computation and robot control. Due to budget constraints, we couldn’t afford the latest Raspberry Pi versions.

Solution: We switched to a more powerful computer as the robot’s “brain” to handle computations, data analysis, and AI tasks.
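The battery imbalance described above can be illustrated with a small idealized calculation. Cells in series carry the same charge current, so a cell with less capacity fills first. The capacities, starting charge levels, and current below are hypothetical, and the model ignores real voltage curves and charger behavior.

```python
def hours_to_full(capacities_ah, start_soc, charge_current_a):
    """Hours each series cell needs to reach 100% at a shared charge current."""
    return [
        cap * (1.0 - soc) / charge_current_a
        for cap, soc in zip(capacities_ah, start_soc)
    ]


def first_full_cell(capacities_ah, start_soc, charge_current_a):
    """Return (index, hours) of the cell that reaches full charge first."""
    times = hours_to_full(capacities_ah, start_soc, charge_current_a)
    idx = min(range(len(times)), key=times.__getitem__)
    return idx, times[idx]


if __name__ == "__main__":
    # Hypothetical pack: two healthy cells and one weaker cell, all at 20% charge.
    capacities = [2.5, 2.5, 2.0]  # amp-hours
    soc = [0.2, 0.2, 0.2]
    print(hours_to_full(capacities, soc, charge_current_a=1.0))
    print(first_full_cell(capacities, soc, charge_current_a=1.0))
```

In this toy pack the weaker cell is full well before the others, so any charging that continues without balancing pushes it past its limit. A balancer prevents this by diverting charge away from the cell that is already full.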

Accomplishments that we're proud of

Despite the challenges, we’re incredibly proud of what we achieved as a team. We built a functioning AI-powered robot that performs real-time soil analysis and provides actionable agricultural recommendations, all using affordable, off-the-shelf hardware.

We successfully integrated multiple sensors, developed a robust recommendation engine using Python and pandas, and built a clean, user-friendly interface with Streamlit. Our system works offline, meaning it can be deployed in areas with limited connectivity, and can still return valuable insights to the farmer.

What started as an idea turned into a tangible prototype that could genuinely support smallholder farmers with better decisions and improved productivity.

New Accomplishment

What We Have Included in This Robot That Was Not in the Previous One

From Reactive to Intelligent Navigation: Previous robots were programmed with basic conditional statements (e.g., “if obstacle, then turn”) and depended mainly on ultrasonic sensors. This caused major issues such as blind spots, false readings in sunlight or on reflective surfaces, and limited adaptability. In this new robot, we integrated ROS 2 (Robot Operating System 2) together with a SLAM algorithm, giving it the ability to see, map, and predict, rather than just react.

SLAM for Environmental Awareness: With Simultaneous Localization and Mapping (SLAM), the robot can:

- Build a map of its environment in real time

- Continuously localize its position within that map

- Plan paths intelligently, avoiding obstacles and blind spots

This means the robot is no longer “blindfolded” but has 360° situational awareness.

Multi-Sensor Fusion: Instead of relying solely on a single ultrasonic sensor, the new system can integrate multiple sensor inputs (camera, ultrasonic, soil sensors, IMU, etc.). Using sensor fusion techniques under ROS 2, the robot combines data sources to make smarter, more reliable decisions.
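As a minimal illustration of the idea behind sensor fusion, the sketch below combines two noisy distance estimates with inverse-variance weighting, so the more reliable sensor counts for more. It is plain Python and not ROS 2 code; the function name and the example readings and variances are assumptions made for the example.

```python
def fuse_estimates(readings):
    """Fuse (value, variance) pairs with inverse-variance weighting.

    Returns the fused value and its variance. Sensors with smaller
    variance (more trust) pull the result closer to their reading.
    """
    weights = [1.0 / variance for _, variance in readings]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    return fused, 1.0 / total


if __name__ == "__main__":
    # Hypothetical obstacle distances in meters with assumed noise variances.
    ultrasonic = (2.0, 0.04)  # noisier sensor
    camera = (2.2, 0.01)      # more precise sensor
    print(fuse_estimates([ultrasonic, camera]))
```

The fused variance is smaller than either input variance, which is the basic reason combining sensors can be more reliable than trusting one alone.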

Upgraded Power & Safety Management: Our previous robot struggled with unstable batteries and uneven charging. The new version incorporates cell balancing, battery management systems, and voltage regulation to provide a stable power supply and extend lifespan.

Scalability and Modularity with ROS 2: Unlike the older version (hard-coded logic), ROS 2 makes it easier to add new features like extra sensors, new AI models, or mechanical upgrades. This modularity means our robot is not fixed; it can evolve over time without needing a complete redesign.

True Autonomy Through Prediction: Instead of just responding when something happens, the robot can now predict movement patterns (e.g., detecting an approaching obstacle before collision). This forward-thinking design makes the robot more efficient and closer to human-like decision-making.
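A very simple form of this kind of prediction is a time-to-collision estimate from two successive range readings. The sketch below is an illustration only: the function names, the 2-second braking threshold, and the sample readings are assumptions, and a real system would smooth noisy measurements first.

```python
def time_to_collision(prev_range_m, curr_range_m, dt_s):
    """Estimate seconds until contact from two range readings dt_s apart.

    Returns None when the obstacle is not getting closer.
    """
    closing_speed = (prev_range_m - curr_range_m) / dt_s  # m/s toward obstacle
    if closing_speed <= 0:
        return None
    return curr_range_m / closing_speed


def should_brake(prev_range_m, curr_range_m, dt_s, min_ttc_s=2.0):
    """Brake when the predicted time to collision drops below the threshold."""
    ttc = time_to_collision(prev_range_m, curr_range_m, dt_s)
    return ttc is not None and ttc < min_ttc_s


if __name__ == "__main__":
    # Hypothetical readings: obstacle moved from 2.0 m to 1.5 m in 0.5 s.
    print(time_to_collision(2.0, 1.5, 0.5))
    print(should_brake(2.0, 1.5, 0.5))
```

Acting on the predicted time rather than on a fixed distance lets the robot react earlier when it is closing quickly and avoid needless stops when it is not.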

Application-Oriented Intelligence: While older designs were “general” robots, this AgroBot is tailored with agricultural intelligence:

- Soil monitoring (moisture, NPK, temperature)

- Seed and fertilizer recommendation

- Water management via smart pump control

This makes it domain-specific and far more useful in real-world agriculture.

Development of the AgroBot AI website: It will let us onboard farmers, track how many farmers we have onboarded, track the number of robots created and deployed, and display deployment locations in real time. This platform will keep us transparent, organized, and attractive to investors.

What we learned

This project taught us more than just technical skills. We deepened our understanding of how real-world farming problems can be solved with simple, well-designed technology.

We learned how to calibrate sensors, optimize communication between microcontrollers, and combine low-level C++ firmware with high-level Python logic. We also gained valuable insight into how to design AI systems that are both explainable and usable in practical, rural settings.

Most importantly, we learned the power of teamwork, perseverance, and keeping the end users, rural farmers, in focus at every step of the build.

New

Basic robots are limited, so intelligent navigation (ROS 2 + SLAM) is essential.

Using multiple sensors together makes the system smarter and more reliable.

Stable power and battery management are just as important as AI.

Modularity helps the robot grow and adapt over time.

Robots must be tailored to agriculture—soil, seeds, and water management.

Transparency through the AgroBot AI website builds trust with farmers and investors.

What's next for AgroBot AI

Our next steps focus on turning this prototype into a field-ready solution. We plan to:

- Integrate the new system fully into our original robot

- Expand the dataset to improve recommendation accuracy across more soil types and crops (In progress)

- Create a mobile version of the app so farmers can use the system even without the robot

- Explore partnerships with cooperatives, NGOs, and agricultural bodies for field trials

We believe AgroBot AI has the potential to scale across regions and revolutionize how smallholder farmers access precision agriculture tools.
