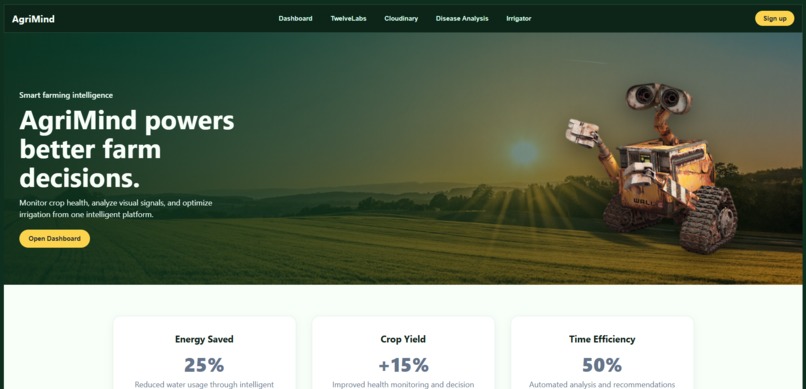

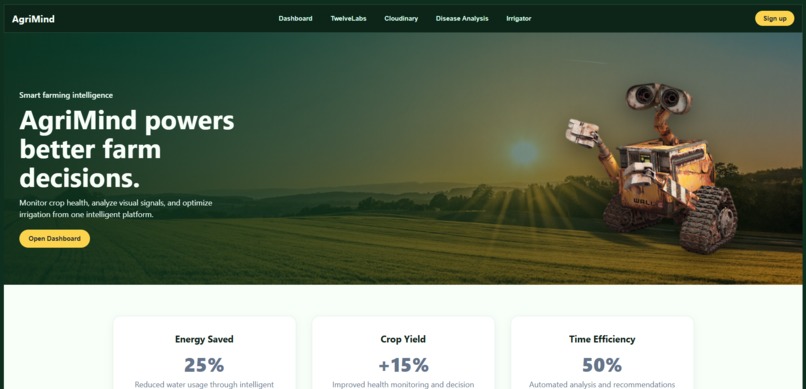

Landing page, with Wall-E as our mascot!

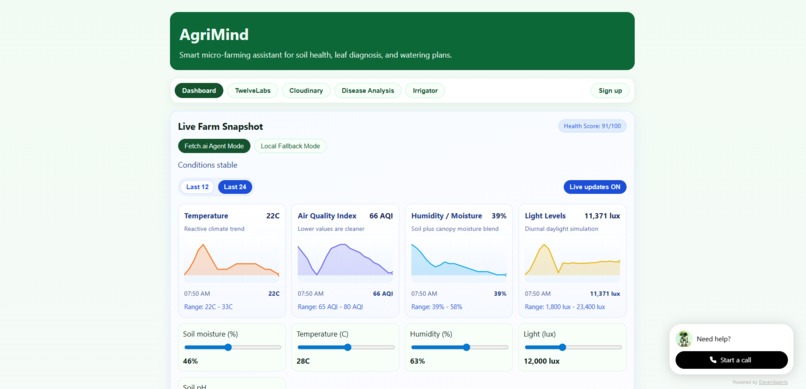

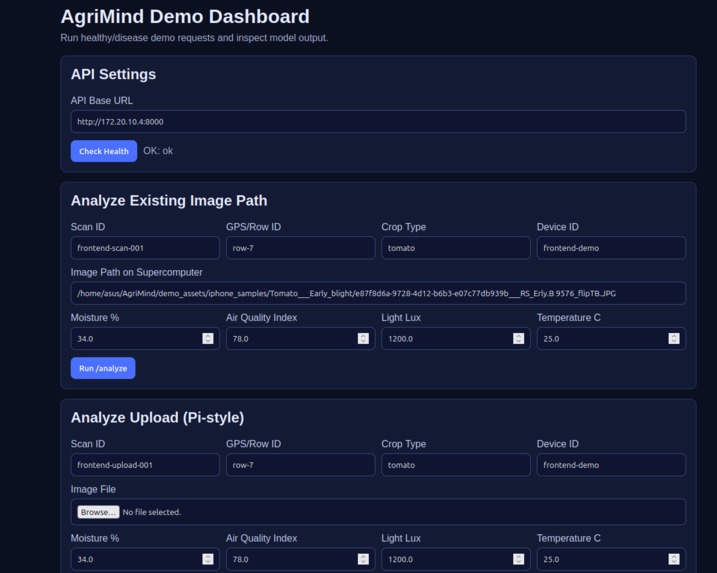

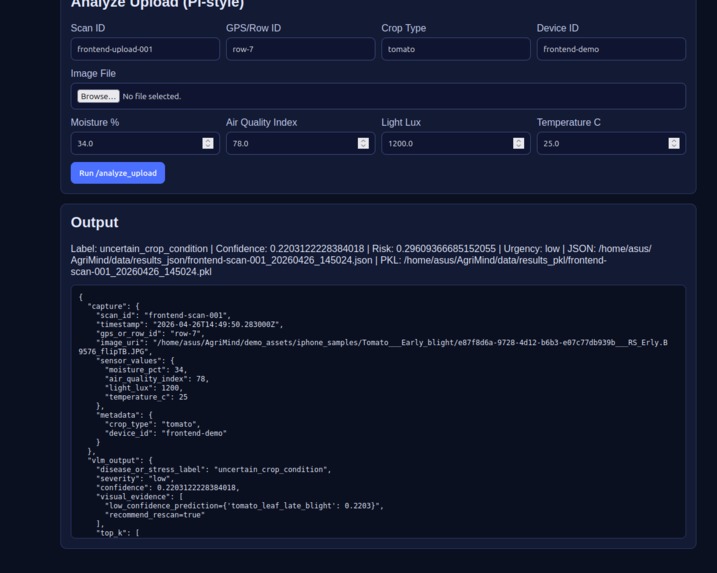

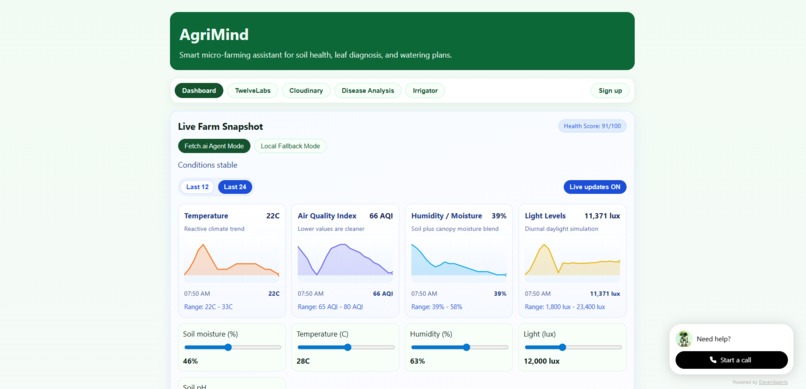

Real-time dashboard with sensor data ingested from temperature, air quality, humidity, and light sensors

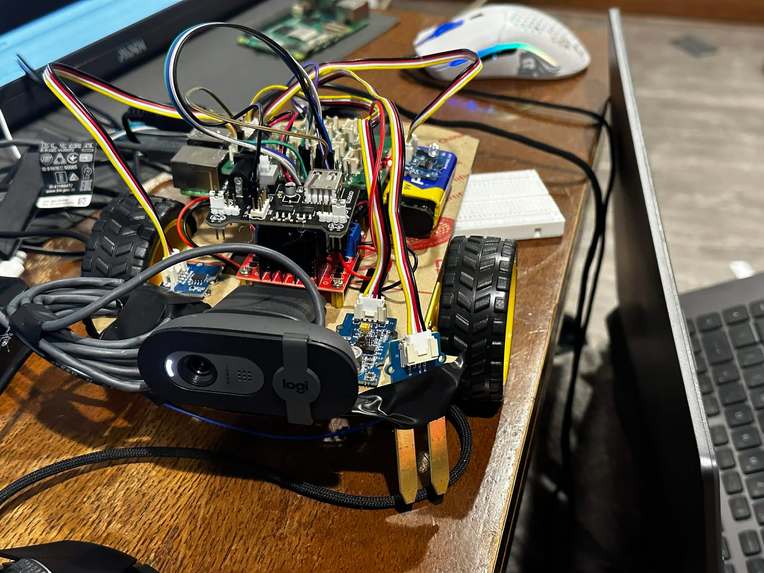

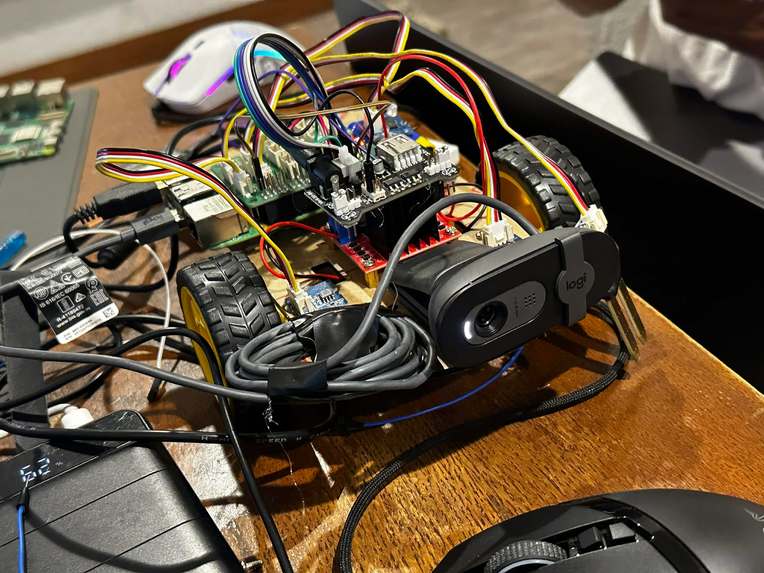

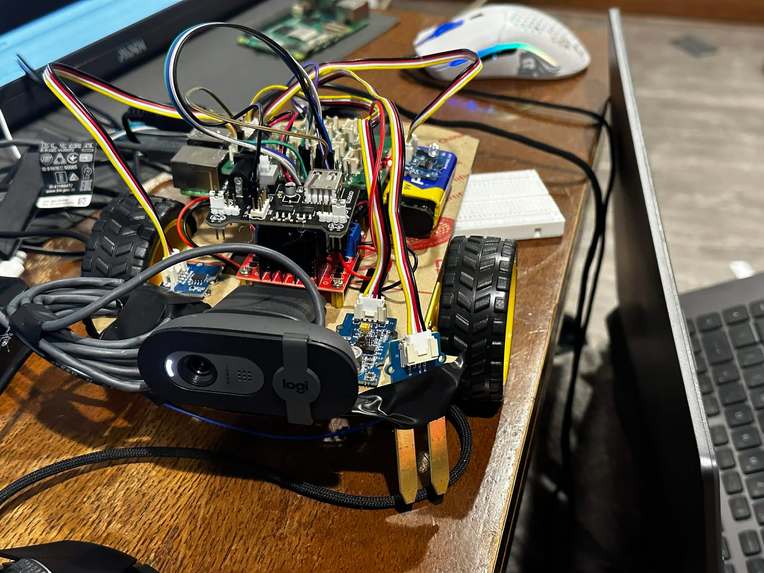

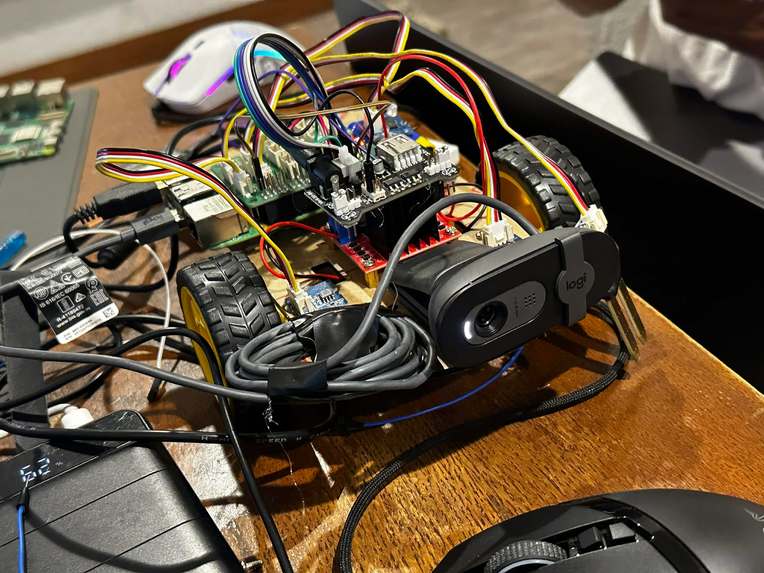

Our Smart Robot guardian!

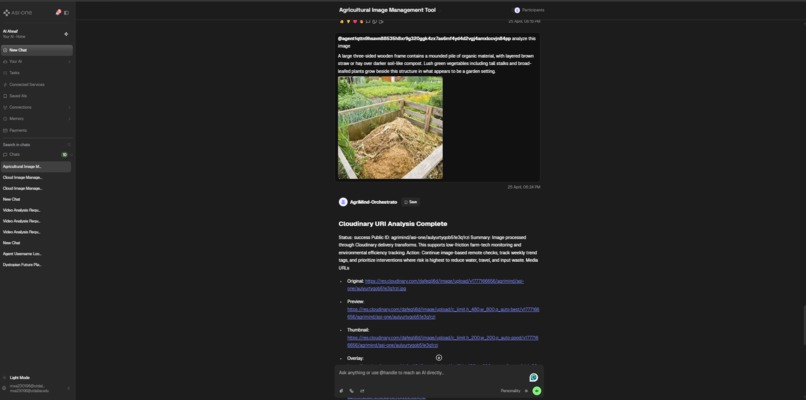

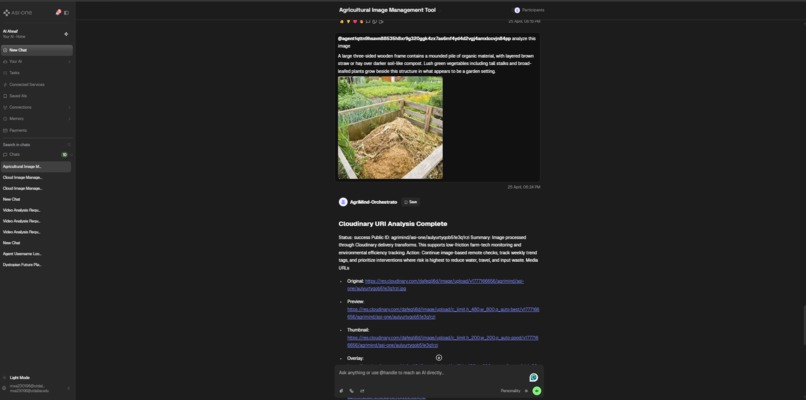

ASI:One Chat Protocol Agent: users can upload media and trigger the image processing pipeline through Cloudinary (annotation, analysis, and Q&A)

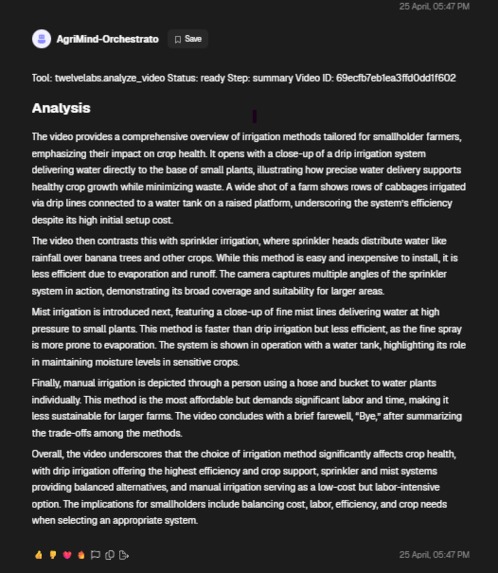

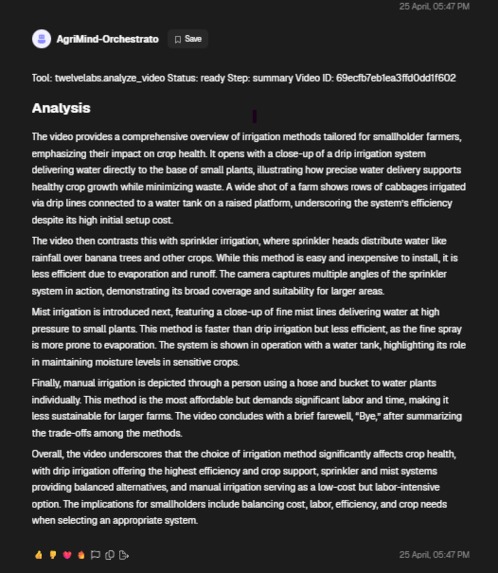

ASI:One Chat Protocol Agent: users can provide a video ID and trigger the video processing pipeline through TwelveLabs (analysis and Q&A)

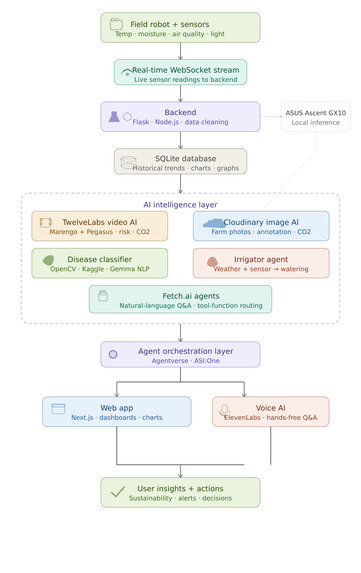

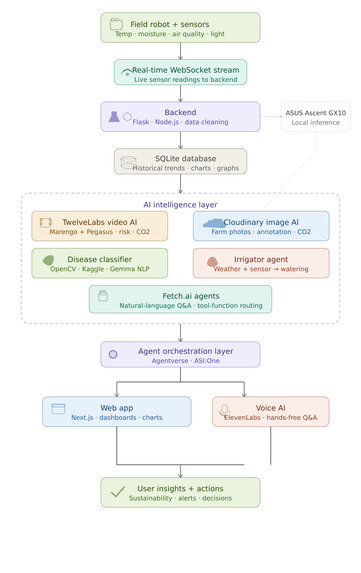

system architecture

ASUS Ascent GX10

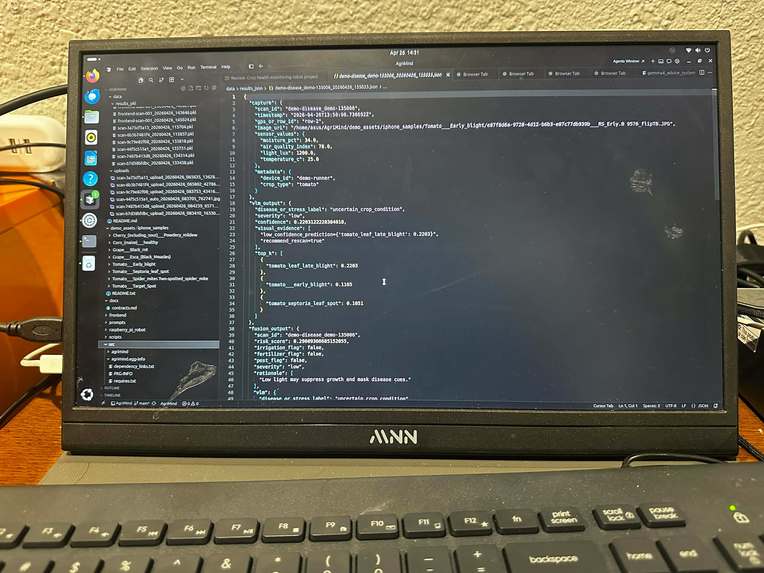

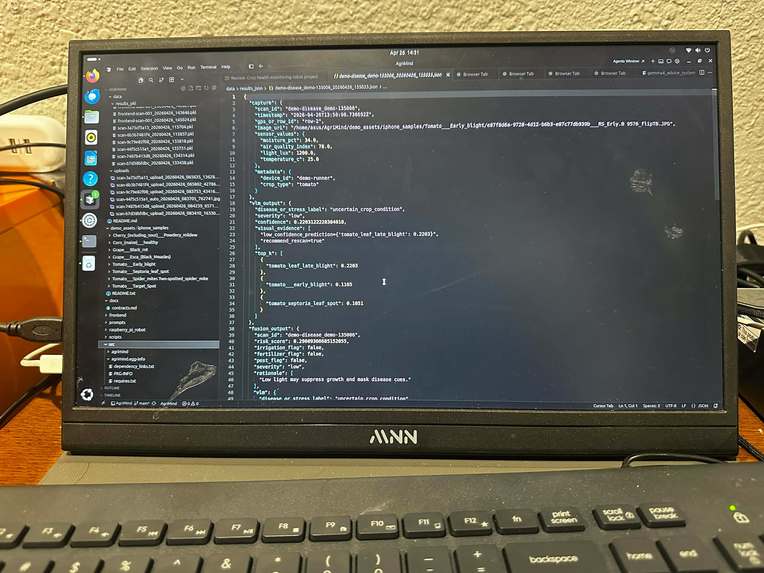

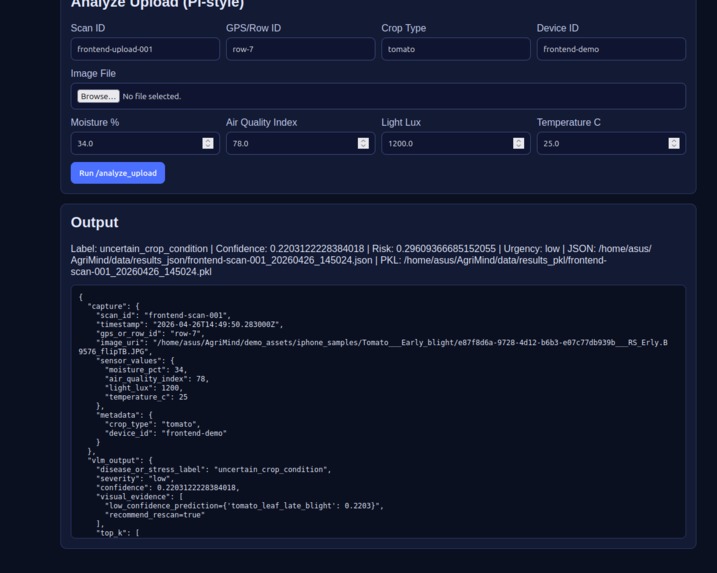

classification

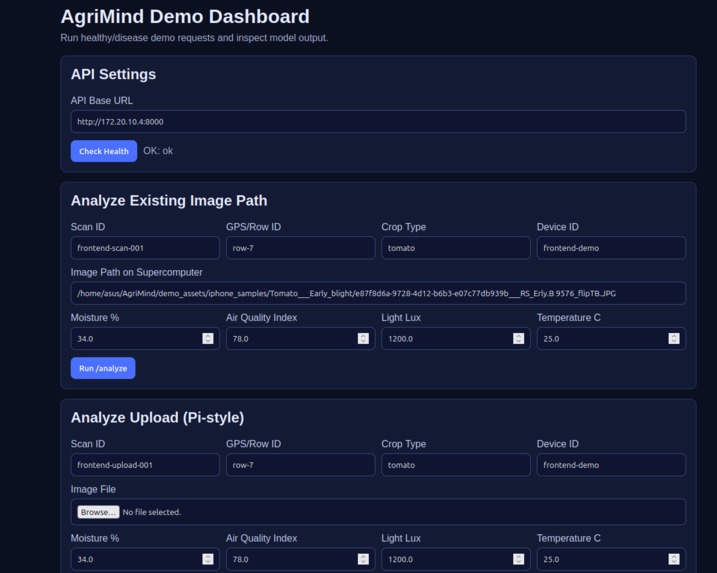

Advanced dashboard

Real-time data capture (sensors -> Raspberry Pi -> ASUS GX10)

Inspiration

Agriculture loses massive value every year to problems that are often detected too late. Globally, up to 30–40% of crop yield is lost to pests and diseases, while farms can waste nearly 50% of irrigation water through overwatering, evaporation, and inefficient schedules. On top of that, fertilizer misuse not only drives runoff and emissions but also costs growers billions in avoidable input spend and yield loss. For small and medium growers, even a single equipment failure or delayed disease response can mean thousands of dollars lost in one season.

We built AgriMind because we saw these losses as a preventable intelligence gap. Farms already generate signals — temperature, moisture, light, and air-quality readings, visual crop images, and equipment footage — but most of that data is fragmented or underused. AgriMind brings it together in one system: sensor-driven irrigation decisions to reduce water waste, multimodal video understanding to flag equipment risks and unsustainable practices, and AI-based crop health analysis to catch disease earlier. Instead of reactive farming, we enable proactive, climate-smart action — helping growers protect yield, reduce resource waste, and improve sustainability without adding operational complexity.

What it does

AgriMind is an AI-powered sustainable farming platform that helps growers:

- Deploy a mobile field robot with temperature, moisture, air quality, and light sensors to scan crop zones and detect anomalies.

- Stream sensor readings to the web app in real time over WebSockets, then store them in SQLite for historical trend analysis with charts and graphs.

- Run a multimodal video pipeline using TwelveLabs (Marengo for embeddings, Pegasus for summarization) to analyze footage for equipment failure, abnormal events, timestamped risk moments, water loss/usage, carbon-footprint estimates, and actionable recommendations.

- Run an image analysis pipeline using Cloudinary for uploaded farm photos with cloud storage + annotation of sustainability metrics (CO2 impact, water usage, and risk insights).

- Query both pipelines through Fetch.ai agents as tool functions, so users can ask natural-language Q&A in Agentverse/ASI:One about past footage and uploaded media.

- Use a two-stage AI pipeline on the ASUS Ascent GX10: a fine-tuned PaliGemma (LoRA) vision model classifies crop disease with 96.35% accuracy and ranked confidence scoring, then Gemma 4 via Ollama generates plain-language treatment recommendations from fused vision + sensor outputs.

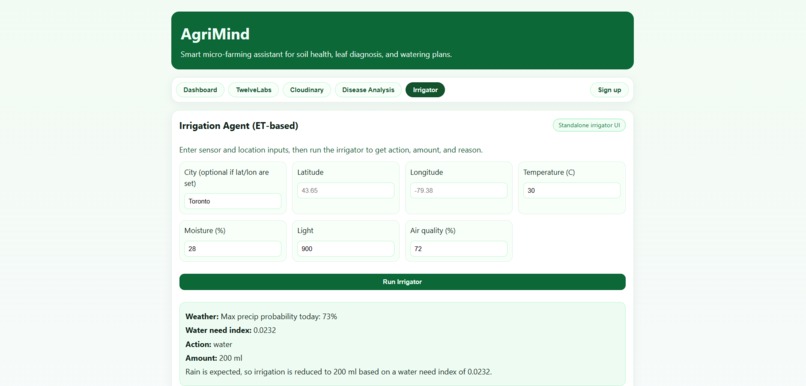

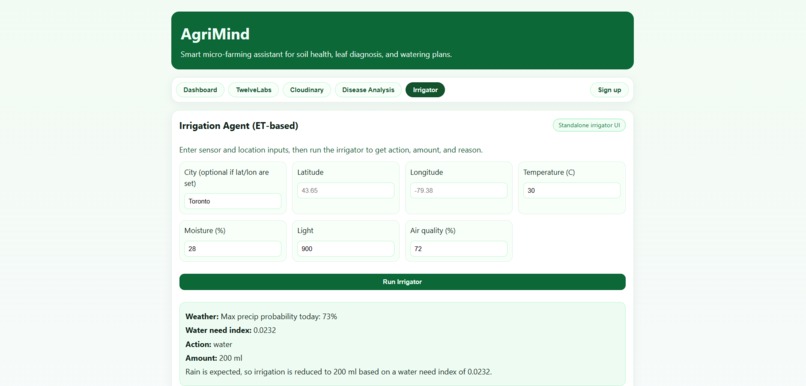

- Use the Irrigator Agent to combine weather + sensor context into precise watering actions (e.g., reduced irrigation when rain is likely) with chat-based reasoning.

- Interact through ElevenLabs voice support to ask about sensor trends, agro-tech operations, and sustainability decisions hands-free.

How we built it

AgriMind is designed as a modular, multi-layer agri-intelligence stack that connects robot telemetry, multimodal media analysis, agent orchestration, and decision support into one pipeline.

1) System architecture (end-to-end flow)

Edge Sensing (Robot Layer)

The mobile field robot is built on a Raspberry Pi 4 paired with a Pi Shield, which provides the physical interface for connecting the following sensors:

- Soil Moisture Sensor: measures real-time volumetric water content at the root zone to detect under/over-watering conditions

- Temperature Sensor: captures ambient and canopy-level thermal readings to flag heat stress or frost risk

- Air Quality Sensor: monitors atmospheric indicators (e.g., CO₂, particulate matter, VOCs) relevant to crop health and micro-environment conditions

- Light/LDR Sensor: measures sunlight intensity as a proxy for photosynthetically active radiation (PAR), to assess light availability per crop zone

As the robot traverses the field and stops near each plant, it simultaneously:

- Captures a close-up plant image using an onboard Logitech camera module

- Samples all four sensors to produce a structured telemetry snapshot tied to that location
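A telemetry snapshot of this kind could be represented roughly as follows. This is a minimal sketch: the class name `TelemetrySnapshot`, the field names, the units, and the `zone_id` key are assumptions made for illustration, not the project's actual payload schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TelemetrySnapshot:
    """One sample of all four sensors at a single robot stop (hypothetical schema)."""
    zone_id: str              # assumed identifier for the stop location
    timestamp: float          # Unix epoch seconds when the sample was taken
    soil_moisture_pct: float  # assumed unit: percent
    temperature_c: float      # assumed unit: degrees Celsius
    air_quality_index: float  # assumed: a single aggregated index
    light_level: float        # assumed: normalized 0.0 to 1.0
    image_path: str           # close-up image captured at the same stop


def to_payload(snapshot: TelemetrySnapshot) -> str:
    """Serialize a snapshot to a JSON string that can be sent upstream."""
    return json.dumps(asdict(snapshot), sort_keys=True)


snapshot = TelemetrySnapshot(
    zone_id="zone-A1",
    timestamp=1_700_000_000.0,
    soil_moisture_pct=41.5,
    temperature_c=27.3,
    air_quality_index=38.0,
    light_level=0.82,
    image_path="captures/zone-A1.jpg",
)
payload = to_payload(snapshot)
```

Keeping the image reference and the sensor values in one record is what lets later stages pair a classification result with the conditions measured at the same spot.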

The Raspberry Pi is accessed and monitored remotely via SSH from a development PC, enabling real-time observation of sensor readings, system logs, and robot state during field runs.

Sensor-to-Inference Pipeline

Rather than processing locally on the Pi (which lacks the compute for ML inference), the robot transmits both the captured image and the structured sensor payload to the ASUS Ascent GX10 — a dedicated on-premise AI accelerator. The GX10 runs a two-stage inference pipeline: a fine-tuned PaliGemma (LoRA) vision model classifies crop condition at 96.35% accuracy with ranked confidence scores and crop-aware filtering, then a fusion layer combines VLM confidence with live sensor readings (moisture, temperature, air quality, light) into a structured risk score — which Gemma 4 (served locally via Ollama) converts into concise, farmer-readable recommendations. Each inference run is persisted as both a .pkl binary and .json artifact for logging and trend analysis.
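The fusion step can be pictured with a small sketch like the one below. It is an illustration under stated assumptions, not the project's fusion layer: the comfort ranges, the 0.7 vision weight, the risk thresholds, and the function names `sensor_stress` and `fuse_risk` are all hypothetical.

```python
from typing import Dict, Tuple

# Assumed comfort ranges per sensor; real thresholds depend on crop and site.
COMFORT_RANGES: Dict[str, Tuple[float, float]] = {
    "soil_moisture_pct": (30.0, 60.0),
    "temperature_c": (15.0, 30.0),
    "air_quality_index": (0.0, 100.0),
    "light_level": (0.3, 1.0),
}


def sensor_stress(sensors: Dict[str, float]) -> float:
    """Fraction of sensors whose reading is outside its comfort range (0.0 to 1.0)."""
    out_of_range = 0
    for name, (low, high) in COMFORT_RANGES.items():
        value = sensors[name]
        if value < low or value > high:
            out_of_range += 1
    return out_of_range / len(COMFORT_RANGES)


def fuse_risk(top_label: str, confidence: float, sensors: Dict[str, float],
              vision_weight: float = 0.7) -> Dict[str, object]:
    """Blend classifier confidence with sensor stress into one risk score."""
    # A confident "healthy" prediction contributes no disease signal.
    disease_signal = 0.0 if top_label == "healthy" else confidence
    stress = sensor_stress(sensors)
    score = vision_weight * disease_signal + (1.0 - vision_weight) * stress
    level = "high" if score >= 0.6 else "medium" if score >= 0.3 else "low"
    return {"label": top_label, "risk_score": round(score, 3), "risk_level": level}


result = fuse_risk(
    "tomato_late_blight",
    0.9,
    {"soil_moisture_pct": 20.0, "temperature_c": 33.0,
     "air_quality_index": 40.0, "light_level": 0.8},
)
```

A structured result like this is the kind of input a language model can turn into a short recommendation, since the label, score, and level are already explicit.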

Realtime ingestion + persistence (data layer)

Sensor events are streamed to the web platform over a WebSocket-based real-time channel and persisted in SQLite for historical analytics. This enables trend charts, anomaly checks, and stateful decisioning.
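As a rough illustration of the persistence side, the sketch below stores readings in SQLite and queries a recent average for a trend chart. The table name `sensor_readings`, its columns, and the helper names are assumptions; the project's actual schema is not shown in this write-up.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the readings store. An in-memory DB is used here for the demo."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS sensor_readings (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               ts REAL NOT NULL,       -- Unix epoch seconds
               sensor TEXT NOT NULL,   -- e.g. 'temperature_c'
               value REAL NOT NULL
           )"""
    )
    return conn


def record(conn: sqlite3.Connection, ts: float, sensor: str, value: float) -> None:
    """Insert one reading; the 'with' block commits on success."""
    with conn:
        conn.execute(
            "INSERT INTO sensor_readings (ts, sensor, value) VALUES (?, ?, ?)",
            (ts, sensor, value),
        )


def recent_average(conn: sqlite3.Connection, sensor: str, since_ts: float):
    """Average value of one sensor since a given timestamp (None if no rows match)."""
    row = conn.execute(
        "SELECT AVG(value) FROM sensor_readings WHERE sensor = ? AND ts >= ?",
        (sensor, since_ts),
    ).fetchone()
    return row[0]


conn = open_store()
record(conn, 1000.0, "temperature_c", 20.0)
record(conn, 1060.0, "temperature_c", 22.0)
record(conn, 1120.0, "temperature_c", 24.0)
avg_recent = recent_average(conn, "temperature_c", since_ts=1060.0)
```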

Frontend control + observability (UI layer)

A React + TypeScript frontend provides:

- Live dashboard and trend visualizations

- Disease-analysis upload UI (drag/drop + preview + classification)

- Cloudinary media pipeline views

- Irrigation decision + rationale + chat interface

- Voice interaction (ElevenLabs widget)

Backend services + API routes (application layer)

A FastAPI backend exposes route-level capabilities for:

- Agent decisioning

- Explanation generation

- Cloudinary event/latest retrieval and webhook handling

- TwelveLabs index/history/ingest/summarize/Q&A operations

These routes normalize frontend calls and orchestrate external AI services.

Multimodal analysis pipeline (vision/video layer)

- Cloudinary is used for media ingestion/storage and transformed assets.

- Video intelligence routes support indexed video search/Q&A/summarization and timestamped risk segmentation.

- Output includes equipment-risk indicators, abnormal-event detection, and sustainability-oriented insights.

Disease classification module (ML layer)

Implemented as a separate service in classification-model/ (kept isolated from core backend):

- Trained on PlantVillage and PlantDoc open datasets, preprocessed via automated scripts that reorganize raw images into class structure and generate train/val/test JSONL splits, achieving 96.35% classification accuracy

- Exposes `POST /predict` for image inference

- Returns ranked classes + confidence scores

- The frontend Disease Analysis tab calls this service directly for classification results.
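To make "ranked classes + confidence scores" concrete, here is one way such a response could be assembled. It assumes the model yields one raw score per class, which may not match how the fine-tuned PaliGemma service actually derives its confidences; the function name `rank_predictions` and the class labels are hypothetical.

```python
import math
from typing import Dict, List


def rank_predictions(scores: Dict[str, float], top_k: int = 3) -> List[Dict[str, object]]:
    """Convert raw per-class scores to softmax confidences and return the top_k."""
    max_score = max(scores.values())
    # Subtract the max before exponentiating for numerical stability.
    exps = {label: math.exp(score - max_score) for label, score in scores.items()}
    total = sum(exps.values())
    ranked = sorted(
        ((label, value / total) for label, value in exps.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return [{"label": label, "confidence": round(conf, 4)} for label, conf in ranked[:top_k]]


raw_scores = {
    "tomato_late_blight": 4.2,
    "tomato_early_blight": 2.1,
    "healthy": 0.3,
    "potato_late_blight": -1.0,
}
ranked = rank_predictions(raw_scores, top_k=3)
```

Returning several ranked candidates rather than a single label lets the UI show how close the runner-up classes are, which matters when two diseases look alike.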

Irrigation intelligence (decision layer)

Rule + signal-driven irrigation logic combines:

- Sensor snapshot

- Crop stage

- Forecast precipitation probability

Output includes:

- Water-need index

- Water/skip action

- Dose recommendation

- Human-readable rationale
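The inputs and outputs listed above could be wired together along these lines. This is a sketch under assumptions, not the project's decision engine: the stage moisture targets, the heat factor, the 0.15 skip threshold, the 10 mm maximum dose, and all names are hypothetical.

```python
from dataclasses import dataclass

# Assumed target soil moisture (%) per crop stage; real values are crop-specific.
STAGE_TARGET_MOISTURE = {
    "seedling": 55.0, "vegetative": 50.0, "flowering": 60.0, "maturity": 40.0,
}
MAX_DOSE_MM = 10.0      # assumed upper bound for a single watering
SKIP_THRESHOLD = 0.15   # assumed: below this adjusted need, skip watering


@dataclass
class IrrigationDecision:
    water_need_index: float  # 0.0 (no need) to 1.0 (maximum need)
    action: str              # "water" or "skip"
    dose_mm: float
    rationale: str


def decide_irrigation(soil_moisture_pct: float, temperature_c: float,
                      crop_stage: str, rain_probability: float) -> IrrigationDecision:
    """Combine a sensor snapshot, crop stage, and rain forecast into a watering action."""
    target = STAGE_TARGET_MOISTURE[crop_stage]
    deficit = max(0.0, target - soil_moisture_pct) / target
    heat_factor = 1.2 if temperature_c > 30.0 else 1.0
    need = min(1.0, deficit * heat_factor)
    # Discount the need by the chance that rain covers it.
    adjusted = need * (1.0 - rain_probability)
    index = round(adjusted, 2)
    if adjusted < SKIP_THRESHOLD:
        reason = (f"Need index {index} is below {SKIP_THRESHOLD} "
                  f"(rain probability {rain_probability:.0%}); skipping.")
        return IrrigationDecision(index, "skip", 0.0, reason)
    dose = round(adjusted * MAX_DOSE_MM, 1)
    reason = (f"Soil moisture {soil_moisture_pct}% is under the {target}% target "
              f"for {crop_stage}; applying {dose} mm.")
    return IrrigationDecision(index, "water", dose, reason)


dry_day = decide_irrigation(30.0, 32.0, "flowering", rain_probability=0.1)
rainy_day = decide_irrigation(30.0, 32.0, "flowering", rain_probability=0.9)
```

With identical soil and temperature readings, the two calls differ only in forecast, which shows the "reduced irrigation when rain is likely" behavior in isolation.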

Agent orchestration (agentic layer)

Fetch.ai agents are wired as tool-call orchestrators across media/sensor/decision components, so users can query system state and analysis results in natural language.

Auth + access control (identity layer)

Auth0 is integrated at app bootstrap:

- `main.tsx` wraps the app with `Auth0Provider` when env keys are present

- `App.tsx` supports landing/auth/page route states and login redirect flow

- Non-auth mode is supported via an `authEnabled=false` fallback

2) Key implementation layers by technology

- Edge Hardware: Raspberry Pi 4 + Pi Shield, onboard camera module, SSH-managed via development PC; inference offloaded to ASUS Ascent GX10

- Frontend: React, TypeScript, Vite, CSS

- Identity/Auth: Auth0 (@auth0/auth0-react)

- Backend/API: Python + FastAPI

- Database: SQLite (local persistence for logs/history)

- Media infrastructure: Cloudinary

- Video intelligence: TwelveLabs integration

- Agent orchestration: Fetch.ai / Agentverse / ASI:One tool-calls

- Disease ML service: PaliGemma (LoRA fine-tuned, 96.35% accuracy), Hugging Face Transformers, PEFT, PyTorch, trained on PlantVillage + PlantDoc datasets

- Local LLM inference: Ollama, serving Gemma 4 (model tag gemma3:4b) for farmer-readable recommendation generation

- Voice UX: ElevenLabs conversational widget

3) Representative API surface

Core route categories used in the pipeline include:

- `POST /api/decision`: sensor/media-aware decision output

- `POST /api/explain`: explanation generation for decisions

- Cloudinary routes: latest/events/webhook/analyze

- TwelveLabs routes: index/history/ingest/summarize/Q&A

- Classifier service: `POST /predict` (separate process, separate folder)

4) Project folder skeleton

    farm-master/
    ├─ .env  # Frontend runtime config (Auth0, classifier URL, etc.)
    ├─ README.md  # Project overview and setup notes
    ├─ index.html  # Vite HTML entry
    ├─ package.json  # Frontend dependencies/scripts
    ├─ package-lock.json  # Dependency lockfile
    ├─ vite.config.ts  # Vite build/dev config
    ├─ eslint.config.js  # ESLint config
    ├─ tsconfig.json  # Base TS config
    ├─ tsconfig.app.json  # App TS config
    ├─ tsconfig.node.json  # Node/Vite TS config
    ├─ docs/
    │  └─ CLOUDINARY_WEBHOOK_AND_SUBMISSIONS.md  # Cloudinary pipeline documentation
    ├─ src/  # FRONTEND (full)
    │  ├─ App.tsx  # Main app UI + routing + landing + dashboard + disease + cloudinary + irrigator + ElevenLabs
    │  ├─ App.css  # Main styles (landing, dashboard, hero, widgets, modules)
    │  ├─ main.tsx  # Frontend bootstrap + Auth0Provider wiring
    │  ├─ index.css  # Global/base CSS
    │  ├─ api/
    │  │  └─ agriApi.ts  # Typed frontend API client wrappers for backend routes
    │  └─ cloudinary/
    │     ├─ UploadWidget.tsx  # Cloudinary upload widget component
    │     └─ config.ts  # Cloudinary upload config/env mapping
    ├─ backend/  # BACKEND (full)
    │  ├─ .env  # Backend env vars (API keys, service config)
    │  ├─ .gitignore  # Backend-specific git ignore rules
    │  ├─ requirements.txt  # Python backend dependencies
    │  ├─ app/
    │  │  └─ main.py  # FastAPI app entrypoint + route registration
    │  ├─ agents/
    │  │  ├─ health_agent.py  # Health-focused agent behavior/orchestration
    │  │  ├─ irrigation_agent.py  # Irrigation decision agent logic
    │  │  ├─ orchestrator_agent.py  # Cross-tool orchestration agent
    │  │  └─ sustainability_agent.py  # Sustainability analysis agent logic
    │  ├─ models/
    │  │  ├─ contracts.py  # Core request/response contracts and typed models
    │  │  └─ cloudinary_contracts.py  # Cloudinary-specific typed contracts
    │  ├─ services/
    │  │  ├─ decision_engine.py  # Core diagnosis + irrigation decision logic
    │  │  ├─ health_intelligence.py  # Health explanation/insight generation
    │  │  ├─ pipeline.py  # End-to-end backend pipeline orchestration
    │  │  ├─ cloudinary_client.py  # Cloudinary API interaction layer
    │  │  ├─ cloudinary_pipeline.py  # Cloudinary media analysis pipeline
    │  │  └─ twelvelabs_client.py  # TwelveLabs ingest/index/summarize/Q&A integration
    │  └─ storage/
    │     └─ local_store.py  # Local persistence layer (SQLite-backed store)
    └─ classification-model/
       └─ leaf_disease_classifier.py  # disease classification service (CLI + HTTP API)

Challenges we ran into

1. Hardware ↔ software link design (WebSocket + secure remote access)

- Our robot node had to push live sensor telemetry (temp/moisture/light/air quality) into the web stack with low latency.

- We tested multiple patterns and found the most reliable method was: device-side publisher → backend WebSocket ingestion, with SSH tunneling/reverse port forwarding for secure remote debugging and field access behind NAT.

- Biggest issues: packet drops on unstable field Wi-Fi, reconnect storms, and out-of-order sensor frames.

- We mitigated this with heartbeat + retry logic, timestamped payloads, and server-side dedupe.
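The server-side handling of duplicate and out-of-order frames might look something like this sketch. The frame fields (`device_id`, `seq`, `ts`) and the class name `FrameBuffer` are assumptions for illustration; the write-up does not describe the actual payload or dedupe key.

```python
from typing import Dict, Set, Tuple


class FrameBuffer:
    """Classifies incoming sensor frames as fresh, duplicate, or stale."""

    def __init__(self) -> None:
        # Keys of frames already processed. Unbounded here for simplicity;
        # a long-running server would need to expire old entries.
        self._seen: Set[Tuple[str, int]] = set()
        # Newest timestamp accepted so far, per device.
        self._latest_ts: Dict[str, float] = {}

    def accept(self, frame: dict) -> str:
        key = (frame["device_id"], frame["seq"])
        if key in self._seen:
            return "duplicate"  # a retry resent a frame we already have
        self._seen.add(key)
        latest = self._latest_ts.get(frame["device_id"])
        if latest is not None and frame["ts"] < latest:
            # Arrived late: fine for history, but should not overwrite the live view.
            return "stale"
        self._latest_ts[frame["device_id"]] = frame["ts"]
        return "fresh"


buffer = FrameBuffer()
frames = [
    {"device_id": "robot-1", "seq": 1, "ts": 10.0},
    {"device_id": "robot-1", "seq": 2, "ts": 12.0},
    {"device_id": "robot-1", "seq": 2, "ts": 12.0},  # resent after a reconnect
    {"device_id": "robot-1", "seq": 3, "ts": 11.0},  # delayed on the network
]
results = [buffer.accept(frame) for frame in frames]
```

Timestamping on the device rather than on arrival is what makes the stale check meaningful, since arrival order is exactly what unstable Wi-Fi scrambles.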

2. FastAPI orchestration under multi-service load

- Our backend had to coordinate decision routes, Cloudinary hooks, TwelveLabs flows, and agent tool calls.

- Constraint: blocking external API calls caused request pileups and UI lag.

- Fixes: route-level timeouts, async boundaries, fallback responses, and stricter payload validation contracts.
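The timeout-plus-fallback pattern can be sketched with the standard library alone. The helper name `call_with_fallback` and the stand-in services are hypothetical; the real routes call external APIs rather than `asyncio.sleep`.

```python
import asyncio
from typing import Any, Awaitable, Callable


async def call_with_fallback(make_call: Callable[[], Awaitable[Any]],
                             timeout_s: float, fallback: Any) -> Any:
    """Await an external call, returning a fallback value if it exceeds the timeout."""
    try:
        return await asyncio.wait_for(make_call(), timeout=timeout_s)
    except asyncio.TimeoutError:
        return fallback


async def slow_service() -> dict:
    """Stand-in for an external API that responds too slowly."""
    await asyncio.sleep(0.2)
    return {"status": "ok"}


async def fast_service() -> dict:
    """Stand-in for an external API that responds promptly."""
    return {"status": "ok"}


degraded = {"status": "degraded", "detail": "upstream timed out"}
slow_result = asyncio.run(call_with_fallback(slow_service, 0.05, degraded))
fast_result = asyncio.run(call_with_fallback(fast_service, 0.05, degraded))
```

Returning an explicit degraded payload instead of hanging keeps the UI responsive and makes the failure visible to the caller.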

3. Cloudinary + multimodal pipeline consistency

- Upload/webhook timing wasn’t always deterministic, and transformed assets could arrive before analysis metadata.

- We hit race conditions between “media uploaded”, “analysis completed”, and “UI fetch latest”.

- We added event polling windows, status flags, and idempotent event storage to keep results consistent.
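Idempotent event storage can be approximated by keying each event on a unique ID, so a webhook delivered twice leaves a single row. The table name `media_events`, its columns, and the helper names below are assumptions, not the project's schema.

```python
import sqlite3


def open_event_store() -> sqlite3.Connection:
    """Create an in-memory event table keyed by event ID (demo only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE media_events (
               event_id TEXT PRIMARY KEY,
               asset_id TEXT NOT NULL,
               status   TEXT NOT NULL   -- e.g. 'uploaded' or 'analyzed'
           )"""
    )
    return conn


def store_event(conn: sqlite3.Connection, event_id: str, asset_id: str, status: str) -> bool:
    """Store an event once. Returns True if inserted, False if it was already stored."""
    with conn:
        cursor = conn.execute(
            "INSERT OR IGNORE INTO media_events (event_id, asset_id, status) VALUES (?, ?, ?)",
            (event_id, asset_id, status),
        )
    return cursor.rowcount == 1


events = open_event_store()
first = store_event(events, "evt-001", "asset-42", "uploaded")
repeat = store_event(events, "evt-001", "asset-42", "uploaded")  # duplicate delivery
stored_count = events.execute("SELECT COUNT(*) FROM media_events").fetchone()[0]
```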

4. TwelveLabs indexing + query lifecycle

- Video ingestion/index readiness and Q&A availability are not instant.

- Constraint: users expected immediate answers even when indexing wasn’t ready.

- We added state-aware UX (“ingesting/indexing/ready”), retriable actions, and history logging to prevent silent failures.
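The ingesting/indexing/ready lifecycle maps naturally onto a small state machine. In this sketch the `FAILED` state, the allowed transitions, and the function names are assumptions added for illustration.

```python
from enum import Enum


class VideoState(Enum):
    INGESTING = "ingesting"
    INDEXING = "indexing"
    READY = "ready"
    FAILED = "failed"  # assumed extra state so retries have somewhere to start


# Assumed transition table: each state maps to the states it may move to.
ALLOWED_TRANSITIONS = {
    VideoState.INGESTING: {VideoState.INDEXING, VideoState.FAILED},
    VideoState.INDEXING: {VideoState.READY, VideoState.FAILED},
    VideoState.READY: set(),
    VideoState.FAILED: {VideoState.INGESTING},  # a retry restarts ingestion
}


def is_allowed(current: VideoState, target: VideoState) -> bool:
    """True if moving from current to target is a valid lifecycle step."""
    return target in ALLOWED_TRANSITIONS[current]


def advance(current: VideoState, target: VideoState) -> VideoState:
    """Return the new state, or raise ValueError for an invalid jump."""
    if not is_allowed(current, target):
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target


def can_answer(state: VideoState) -> bool:
    """Q&A should only be offered once indexing has finished."""
    return state is VideoState.READY


state = VideoState.INGESTING
state = advance(state, VideoState.INDEXING)
qa_available_early = can_answer(state)
state = advance(state, VideoState.READY)
qa_available_ready = can_answer(state)
```

Gating Q&A on an explicit state gives the UI something truthful to display while indexing is still running.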

5. Fetch.ai agent tool-call reliability

- Agents could produce ambiguous calls when media context or IDs were missing.

- We constrained tool schemas, normalized outputs, and added deterministic error messages so agent responses stayed actionable.
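A constrained tool schema with deterministic error messages might be checked as in the sketch below. The tool names, their required fields, and `validate_tool_call` are hypothetical and do not reflect the Fetch.ai SDK or the agent's real tool definitions.

```python
from typing import Any, Dict

# Hypothetical tool schemas: required argument names mapped to expected types.
TOOL_SCHEMAS: Dict[str, Dict[str, type]] = {
    "analyze_video": {"video_id": str},
    "ask_video": {"video_id": str, "question": str},
    "analyze_image": {"image_url": str},
}


def validate_tool_call(tool: str, args: Dict[str, Any]) -> Dict[str, Any]:
    """Check a tool call against its schema and return a predictable result dict."""
    schema = TOOL_SCHEMAS.get(tool)
    if schema is None:
        known = ", ".join(sorted(TOOL_SCHEMAS))
        return {"ok": False, "error": f"unknown tool '{tool}'; expected one of: {known}"}
    # Sort field names so the same bad call always yields the same message.
    missing = sorted(name for name in schema if name not in args)
    if missing:
        return {"ok": False,
                "error": f"{tool}: missing required field(s): {', '.join(missing)}"}
    wrong_type = sorted(name for name, expected in schema.items()
                        if not isinstance(args[name], expected))
    if wrong_type:
        return {"ok": False,
                "error": f"{tool}: wrong type for field(s): {', '.join(wrong_type)}"}
    return {"ok": True}


missing_id = validate_tool_call("ask_video", {"question": "Any leaks near the pump?"})
valid_call = validate_tool_call("analyze_video", {"video_id": "vid-123"})
unknown = validate_tool_call("delete_farm", {})
```

Because the error text names the exact missing field, the agent can ask the user for that one value instead of guessing.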

Fetch.AI Challenge

AgroVision AI is a multimodal intelligence agent built on Fetch.ai that transforms raw farm media into actionable insights. It serves as a centralized analysis layer where users can interact with both video and image data through natural language or structured tool calls. By integrating advanced video understanding from TwelveLabs and image processing via Cloudinary, the agent bridges the gap between unstructured visual data and meaningful agricultural decision-making.

Agent Profile: https://agentverse.ai/agents/details/agent1qttn9hsavn88535h8xr9g320ggk4zx7as6mf4yd4d2vgj4amxlccvjn84pp/profile

Agent Address: agent1qttn9hsavn88535h8xr9g320ggk4zx7as6mf4yd4d2vgj4amxlccvjn84pp

Agent Shared Chat URL: https://asi1.ai/ai/agent1qttn9hsavn88535h8xr9g320ggk4zx7as6mf4yd4d2vgj4amxlccvjn84pp

ASUS Hardware Challenge

AgriMind was built and runs entirely on the ASUS Ascent GX10 — leveraging its NVIDIA GB10 Grace Blackwell Superchip for real-time, on-premise AI inference with zero cloud dependency for compute-heavy workloads.

What we ran on the GX10:

- Fine-tuned PaliGemma (LoRA) vision model for crop disease classification, achieving 96.35% accuracy with ranked confidence scoring and crop-aware filtering across the PlantVillage + PlantDoc datasets

- Gemma 4 served locally via Ollama for natural-language recommendation generation, converting raw vision + sensor outputs into plain-language, farmer-readable treatment guidance in real time

- Sensor fusion layer combining live VLM confidence scores with robot telemetry (moisture, temperature, air quality, light) into a structured agronomic risk score per crop zone

- Full inference pipeline exposed via FastAPI (`POST /analyze_upload`), accepting multipart payloads directly from the Raspberry Pi robot and returning structured JSON + persisted .pkl artifacts per run

YOUTUBE: https://youtu.be/IIkgUTQpKFk

Why the GX10 mattered:

- The Raspberry Pi robot is compute-constrained by design: keeping it lightweight and field-mobile required offloading all ML workloads to dedicated inference hardware

- Running PaliGemma + Gemma 4 locally on the GX10 eliminated cloud round-trip latency, enabling real-time crop health decisions as the robot moves through crop rows

- On-premise inference kept raw plant imagery and sensor data fully private: no farm data leaves the local network during analysis

- The GX10's ARM Ubuntu environment allowed us to deploy a production-grade FastAPI inference server with startup warmup, robust error handling, and per-run artifact persistence without any cloud infrastructure

Accomplishments that we're proud of

- Built a Raspberry Pi 4 field robot with a Pi Shield connecting soil moisture, temperature, air quality, and light sensors plus a camera module, managed remotely via SSH from a development PC

- Designed a split-compute pipeline where the Pi transmits images and sensor data to the ASUS Ascent GX10, which runs a fine-tuned PaliGemma LoRA vision classifier achieving 96.35% accuracy, fused with live sensor context, then Gemma 4 via Ollama for plain-language farmer recommendations

- Integrated TwelveLabs video understanding, Fetch.ai agents, Cloudinary media pipelines, ElevenLabs voice, and a sensor-driven irrigation engine into one unified React + FastAPI platform

- Executed across two parallel workstreams, hardware and software, delivering a fully integrated system within a single hackathon timeframe

What we learned

- Real agricultural intelligence requires hardware and software designed together — sensors generate signal, AI generates insight, but neither delivers value without a reliable pipeline connecting both

- Configuring the Raspberry Pi 4 + Pi Shield + multi-sensor setup with SSH-based remote development taught us how critical stable hardware foundations are before any software layer can function

- Building a two-stage AI pipeline — PaliGemma LoRA (96.35% accuracy) for vision classification and Gemma 4 via Ollama for language generation — required careful fusion of VLM confidence scores with live sensor readings to produce reliable, context-aware outputs

- Integrating TwelveLabs, Cloudinary, Fetch.ai, and ElevenLabs into one backend taught us how to normalize API boundaries and ship fast without breaking pipeline coherence

What's next for AgriMind

- Expand disease classification to more crop and pathogen types, and improve robot mobility hardware for stable navigation on real-world uneven farm terrain

- Integrate drone and satellite imagery for field-scale analysis, and build predictive analytics on accumulated historical sensor data for proactive yield forecasting

- Scale the dashboard for multi-farm management and build multilingual voice assistants to serve farmers across different regions and languages

- Run structured pilot programs with real growers, cooperatives, and greenhouses to validate AgriMind as a deployable, long-term AI operating system for sustainable farming

Built With

- auth0

- cloudinary

- elevenlabs

- fast-api

- fetch.ai

- gemma

- langgraph

- ngrok

- node.js

- npm

- opencv

- python

- react

- twelvelabs

- typescript
