A sleek, minimalist landing page introducing the "Action Era" of machine learning development.

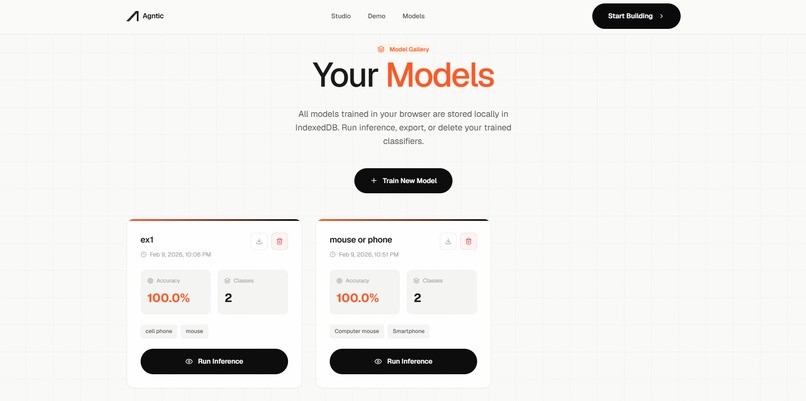

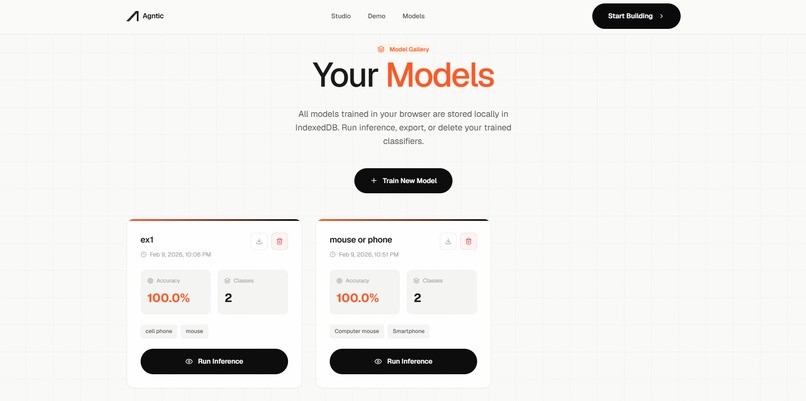

The Model Gallery: Local repository of trained classifiers, stored securely in the browser's IndexedDB.

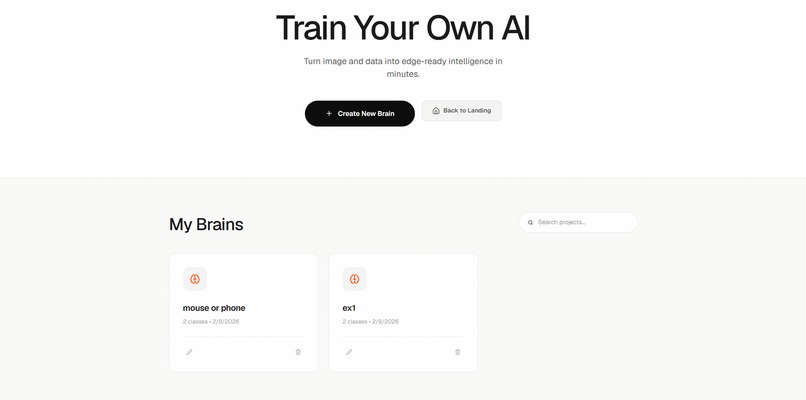

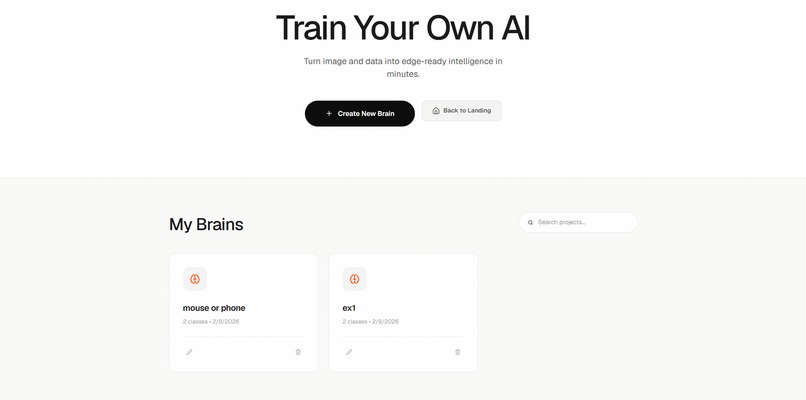

The Project Hub: Effortlessly create new experiments or pick up where you left off with existing projects.

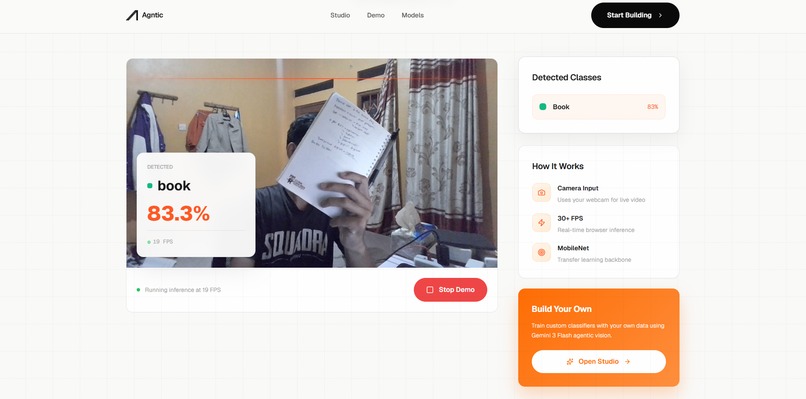

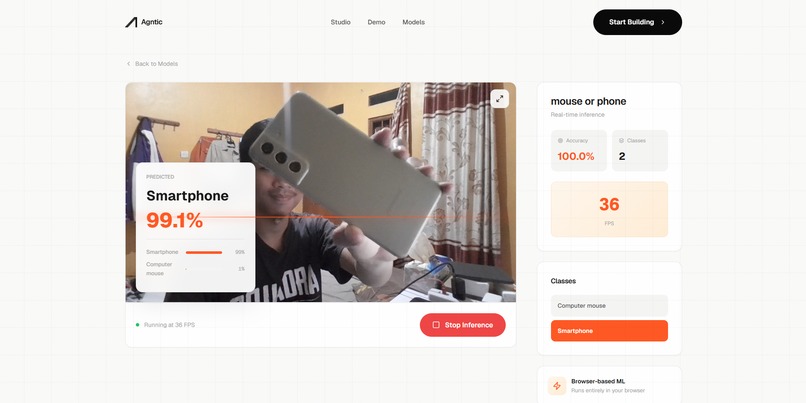

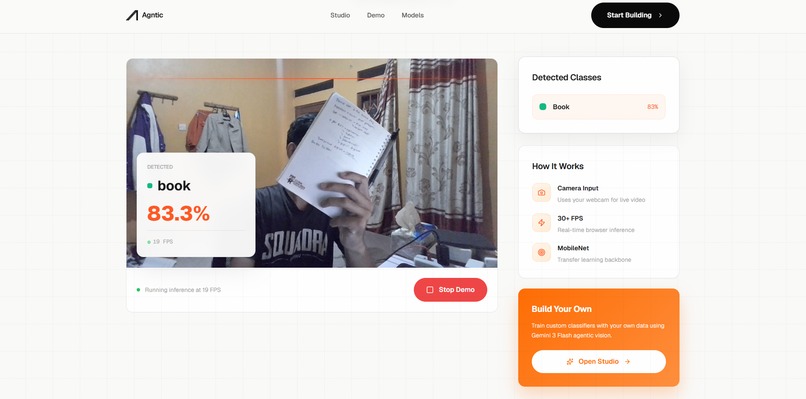

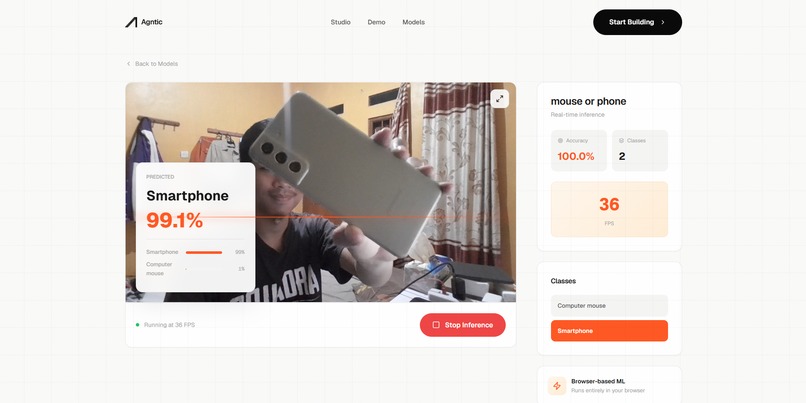

The Showcase: Standalone inference mode for instant testing of models without going through the training flow.

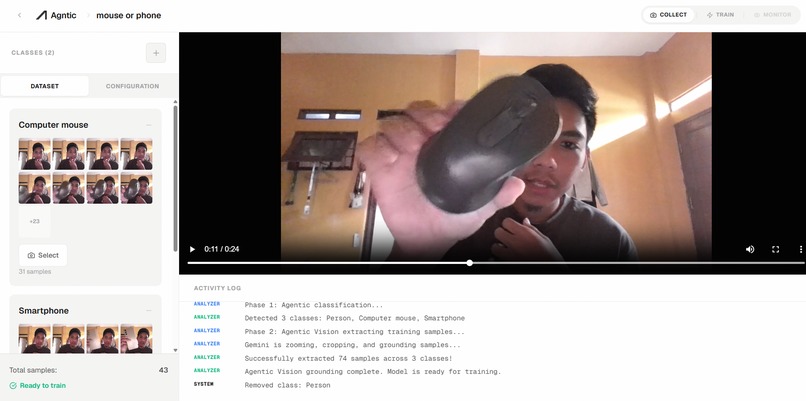

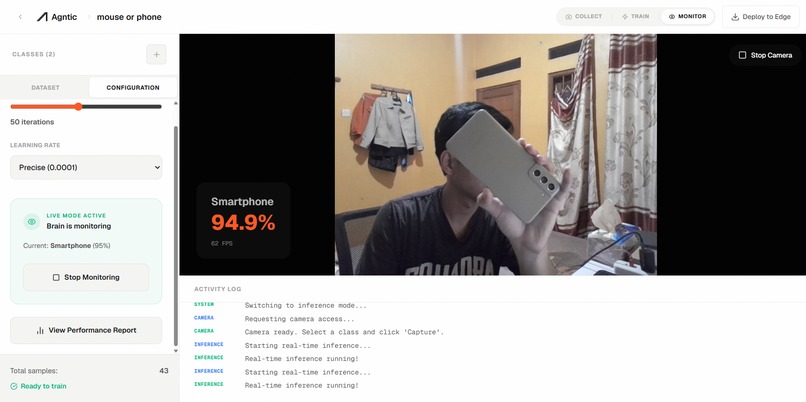

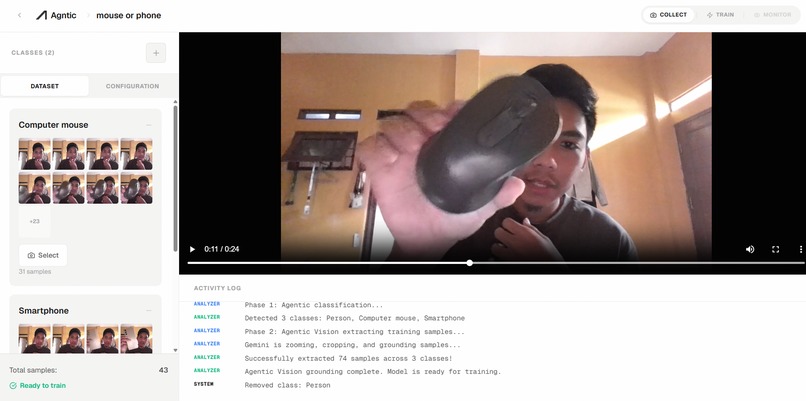

The Agntic Training Studio: A three-panel orchestration dashboard for data, training, and real-time agents.

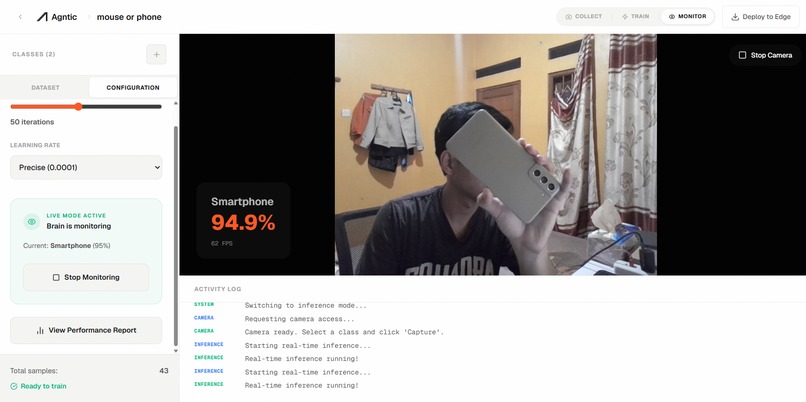

Real-time model verification immediately after training, powered by WebGL-accelerated TensorFlow.js.

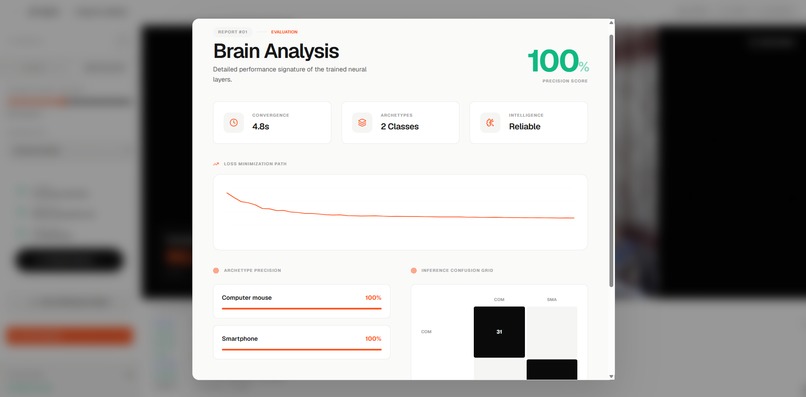

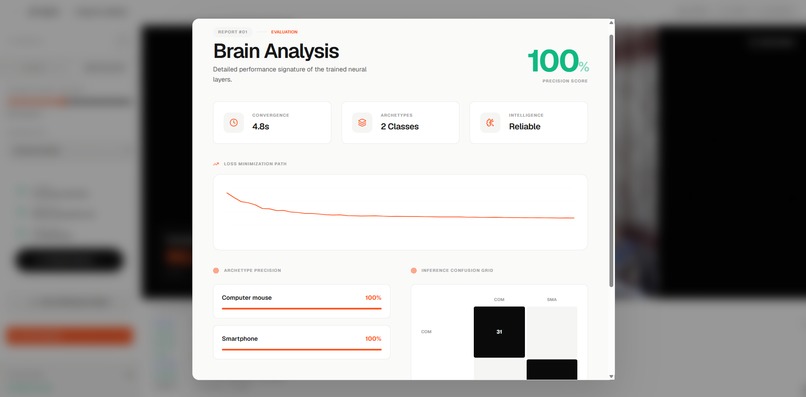

Deep model insights featuring confusion matrices and per-class accuracy scores generated autonomously.

Standalone inference mode for testing production-ready models on diverse real-world object datasets.

Agntic: Agentic Video-to-Classifier Studio

Agntic is an autonomous ML factory that transforms raw video into high-performance vision models. By combining Gemini 3’s reasoning with browser-side deep learning, we’ve eliminated the manual friction of the ML lifecycle.

💡 The Inspiration

Machine Learning is often bottlenecked by "The Triple Burden": Labeling, Compute, and Complexity. We built Agntic to prove that Gemini 3 Flash can act as an autonomous engineer, handling data curation and quality auditing so developers can focus on building, not labeling.

🚀 Why Agntic Wins

| Feature | Traditional Workflow | Agntic (Agentic) |
|---|---|---|
| Data Selection | Manual frame picking | Gemini Temporal Analysis |
| Precision | Human bounding boxes | Agentic Vision + Code Execution |
| Quality Control | Visual inspection | Autonomous Quality Auditor |
| Training | Server GPUs ($$$) | In-Browser WebGL (Free) |

🤖 The "Wow" Logic: Agentic Pipeline

Agntic orchestrates specialized agents that do more than just "chat":

- Temporal Video Analyzer: Scans video sequences to discover classes using Gemini’s 1M-token context window.

- Precision Vision Cropper: Employs Agentic Vision with Code Execution to zoom into frames, ensuring 80%+ object visibility and pixel-perfect bounding boxes.

- The Auditor: An autonomous quality-check agent that evaluates blur, lighting, and occlusion to prune "garbage" data before it hits the trainer.

- ML Architect: Dynamically suggests hyperparameters based on dataset variety scores.
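
As a rough illustration of the Auditor step described above, the sketch below prunes frames whose quality scores fall under a threshold. The `FrameAudit` shape, the score names, and the default thresholds are hypothetical, chosen only for this example; the 0.8 visibility floor mirrors the 80%+ visibility target mentioned for the cropper. How the real agent scores blur, lighting, and occlusion is not shown here.

```typescript
// Hypothetical per-frame quality scores, each normalized to [0, 1].
// Field names and thresholds are illustrative assumptions, not Agntic's schema.
interface FrameAudit {
  frameId: string;
  sharpness: number;  // 1 = sharp, 0 = fully blurred
  lighting: number;   // 1 = well exposed, 0 = unusable
  visibility: number; // fraction of the object that is unoccluded
}

interface AuditThresholds {
  minSharpness: number;
  minLighting: number;
  minVisibility: number;
}

// Assumed defaults for the sketch.
const DEFAULT_THRESHOLDS: AuditThresholds = {
  minSharpness: 0.5,
  minLighting: 0.4,
  minVisibility: 0.8,
};

interface AuditResult {
  kept: FrameAudit[];
  rejected: { frame: FrameAudit; reasons: string[] }[];
}

// Keep a frame only if it passes every check; record why the others were dropped.
function pruneFrames(
  frames: FrameAudit[],
  t: AuditThresholds = DEFAULT_THRESHOLDS,
): AuditResult {
  const result: AuditResult = { kept: [], rejected: [] };
  for (const frame of frames) {
    const reasons: string[] = [];
    if (frame.sharpness < t.minSharpness) reasons.push("blur");
    if (frame.lighting < t.minLighting) reasons.push("lighting");
    if (frame.visibility < t.minVisibility) reasons.push("occlusion");
    if (reasons.length === 0) {
      result.kept.push(frame);
    } else {
      result.rejected.push({ frame, reasons });
    }
  }
  return result;
}
```

Recording the rejection reasons alongside each frame makes it possible to show the user why a sample never reached the trainer.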

🧠 SOTA Technical Integration

We pushed the boundaries of what's possible in a browser:

1. Advanced Data Curation

Using MobileNet embeddings, we compute pairwise Cosine Similarity Matrices (via tf.matMul) to detect and remove near-duplicate samples. This keeps the dataset varied and reduces the risk of the model overfitting to near-identical frames.
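
The deduplication idea can be sketched without any dependencies. The example below uses plain arrays instead of TensorFlow.js tensors so it runs anywhere; the matrix product it computes is the one a `tf.matMul` call with the second operand transposed would produce on normalized embeddings. The greedy selection rule and the 0.95 threshold are assumptions made for illustration, not necessarily what Agntic uses.

```typescript
// L2-normalize each embedding so that a dot product equals cosine similarity.
function normalizeRows(embeddings: number[][]): number[][] {
  return embeddings.map((row) => {
    const norm = Math.sqrt(row.reduce((sum, v) => sum + v * v, 0));
    return norm === 0 ? row.slice() : row.map((v) => v / norm);
  });
}

// Pairwise cosine similarity matrix S = N * N^T, where N holds the normalized rows.
function cosineSimilarityMatrix(embeddings: number[][]): number[][] {
  const n = normalizeRows(embeddings);
  return n.map((a) => n.map((b) => a.reduce((sum, v, k) => sum + v * b[k], 0)));
}

// Greedy filter (assumed strategy): keep a sample only if its similarity to
// every sample already kept is below the threshold. Returns kept indices.
function selectDiverse(embeddings: number[][], threshold = 0.95): number[] {
  const sim = cosineSimilarityMatrix(embeddings);
  const kept: number[] = [];
  for (let i = 0; i < embeddings.length; i++) {
    if (kept.every((j) => sim[i][j] < threshold)) kept.push(i);
  }
  return kept;
}
```

A lower threshold prunes more aggressively, trading dataset size for variety; the right value depends on how similar consecutive video frames tend to be.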

2. Browser-Side Deep Learning

- Transfer Learning: We use a MobileNet v3 backbone with a custom 2-layer Dense head.

- On-the-fly Augmentation: Real-time image transformations (flips, brightness shifts) using TensorFlow.js tensors.

- Regularization: Integrated L2 Regularization and Dropout to maintain model robustness despite small dataset sizes.
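
The augmentation step listed above can be illustrated on raw pixel buffers. This is a minimal sketch in which a `Float32Array` stands in for a TensorFlow.js tensor; the `Image` type, the `augment` helper, and the 0.2 maximum brightness shift are hypothetical names and values introduced for the example.

```typescript
// Hypothetical image container: row-major HWC layout, pixel values in [0, 1].
interface Image {
  width: number;
  height: number;
  channels: number;
  data: Float32Array;
}

// Mirror the image left-to-right by swapping pixel columns.
function flipHorizontal(img: Image): Image {
  const { width, height, channels, data } = img;
  const out = new Float32Array(data.length);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const src = (y * width + x) * channels;
      const dst = (y * width + (width - 1 - x)) * channels;
      for (let c = 0; c < channels; c++) out[dst + c] = data[src + c];
    }
  }
  return { width, height, channels, data: out };
}

// Add a constant to every pixel and clamp back into [0, 1].
function shiftBrightness(img: Image, delta: number): Image {
  const out = new Float32Array(img.data.length);
  for (let i = 0; i < out.length; i++) {
    out[i] = Math.min(1, Math.max(0, img.data[i] + delta));
  }
  return { ...img, data: out };
}

// Random augmentation: flip with probability 0.5, then shift brightness by a
// value drawn uniformly from [-maxDelta, +maxDelta]. `rand` is injectable so
// results can be made deterministic.
function augment(
  img: Image,
  rand: () => number = Math.random,
  maxDelta = 0.2,
): Image {
  const flipped = rand() < 0.5 ? flipHorizontal(img) : img;
  const delta = (rand() * 2 - 1) * maxDelta;
  return shiftBrightness(flipped, delta);
}
```

Applying the transform each time a sample is drawn, rather than once up front, means the model rarely sees exactly the same pixels twice, which matters most when the dataset is small.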

3. Gemini 3 as a Logic Engine

We use Gemini 3 Flash for Structured JSON Reasoning, allowing it to coordinate complex media-processing tasks such as FFmpeg slicing and sample density optimization (aiming for one sampled frame every 200-500 ms).
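
To make the structured-output idea concrete, here is a minimal sketch of validating a sampling plan returned as JSON and expanding it into frame timestamps. The `SamplingPlan` fields and both helper functions are hypothetical; only the 200-500 ms interval range comes from the description above. The timestamps could then drive whatever extraction step follows, such as FFmpeg seeks.

```typescript
// Hypothetical shape of a sampling plan the model returns as JSON.
interface SamplingPlan {
  startMs: number;
  endMs: number;
  intervalMs: number;
}

// Interval bounds taken from the 200-500 ms target.
const MIN_INTERVAL_MS = 200;
const MAX_INTERVAL_MS = 500;

// Parse and validate the model output, clamping the interval into range.
function parseSamplingPlan(json: string): SamplingPlan {
  const raw: unknown = JSON.parse(json);
  if (typeof raw !== "object" || raw === null) {
    throw new Error("plan must be a JSON object");
  }
  const { startMs, endMs, intervalMs } = raw as Record<string, unknown>;
  if (
    typeof startMs !== "number" ||
    typeof endMs !== "number" ||
    typeof intervalMs !== "number"
  ) {
    throw new Error("startMs, endMs and intervalMs must be numbers");
  }
  if (!(startMs >= 0 && endMs > startMs)) {
    throw new Error("invalid time range");
  }
  const clamped = Math.min(MAX_INTERVAL_MS, Math.max(MIN_INTERVAL_MS, intervalMs));
  return { startMs, endMs, intervalMs: clamped };
}

// Expand a plan into the timestamps (in ms) at which frames are sampled.
function frameTimestamps(plan: SamplingPlan): number[] {
  const out: number[] = [];
  for (let t = plan.startMs; t <= plan.endMs; t += plan.intervalMs) {
    out.push(t);
  }
  return out;
}
```

Validating and clamping on the client side means a malformed or overly dense plan from the model cannot flood the pipeline with frames.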

🛠️ Tech Stack

- AI Core: Google Gemini 3 Flash (@google/genai)

- Neural Engine: TensorFlow.js (WebGL Accelerated)

- Frontend: Next.js 15, TypeScript, Framer Motion

- Media Ops: FFmpeg (WASM), Sharp, Firebase Storage

🗺️ Roadmap

- Multi-label Scene Reasoning: Complex object interactions.

- Active Learning Loop: Agents suggesting "missing" video angles.

- Edge Export: One-click export to TFLite for mobile/IoT.
