-

-

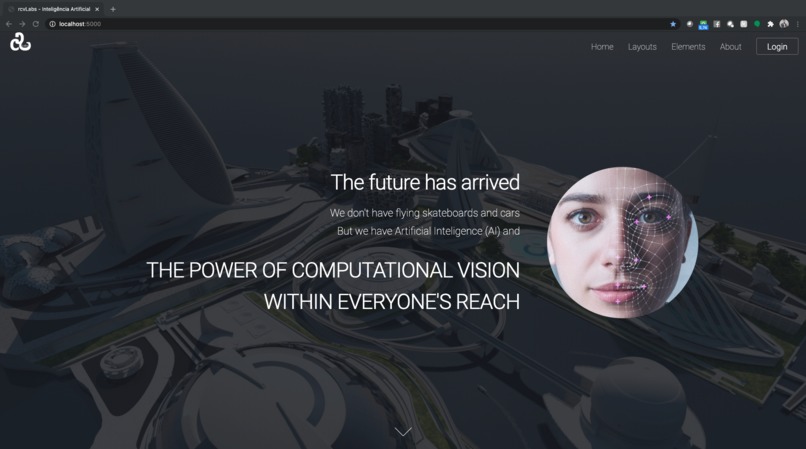

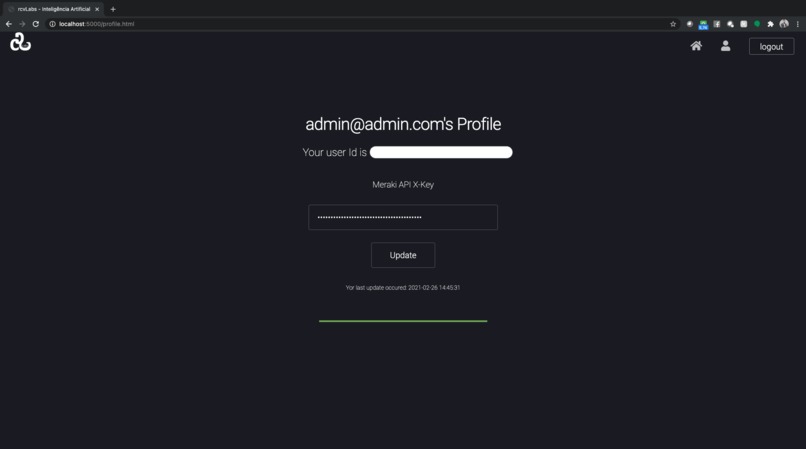

Main Page

-

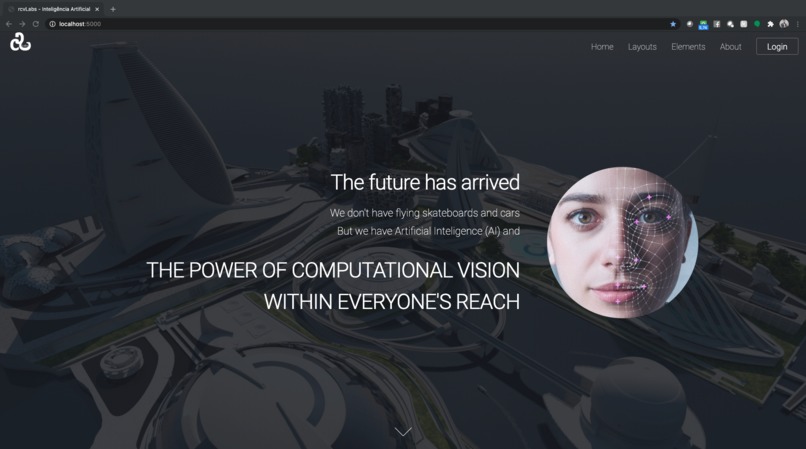

Login Page

-

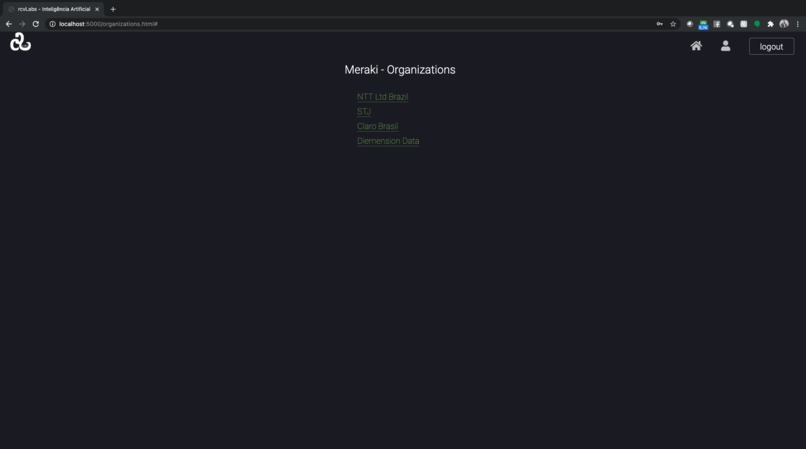

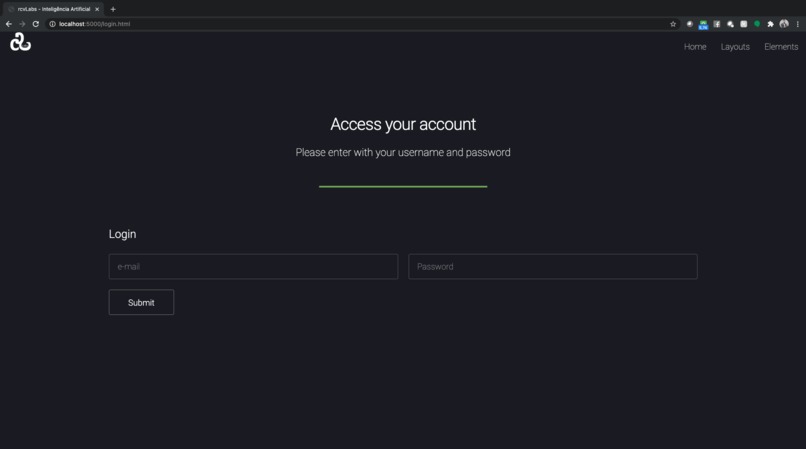

Organizations - Meraki Integration

-

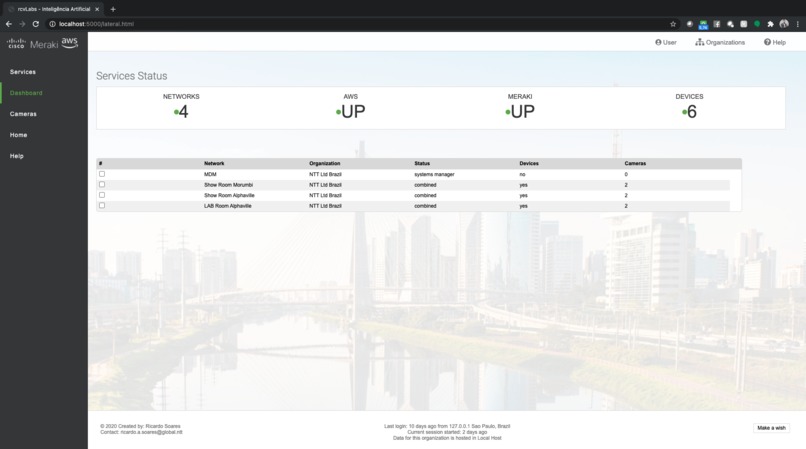

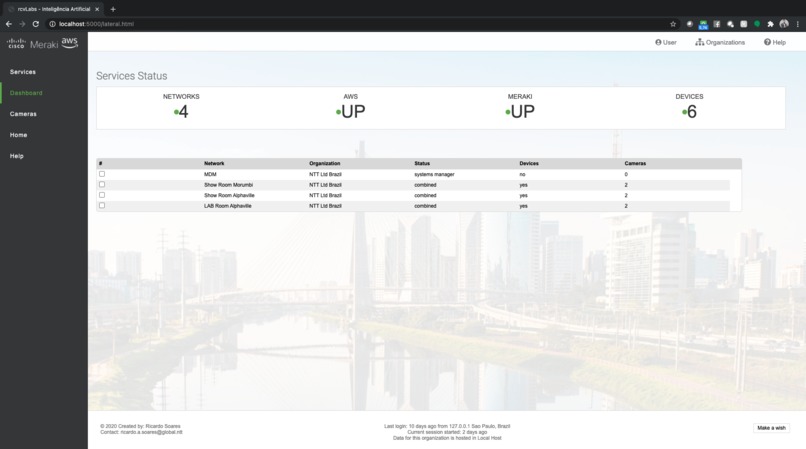

Dashboard to see status of connections and integrations

-

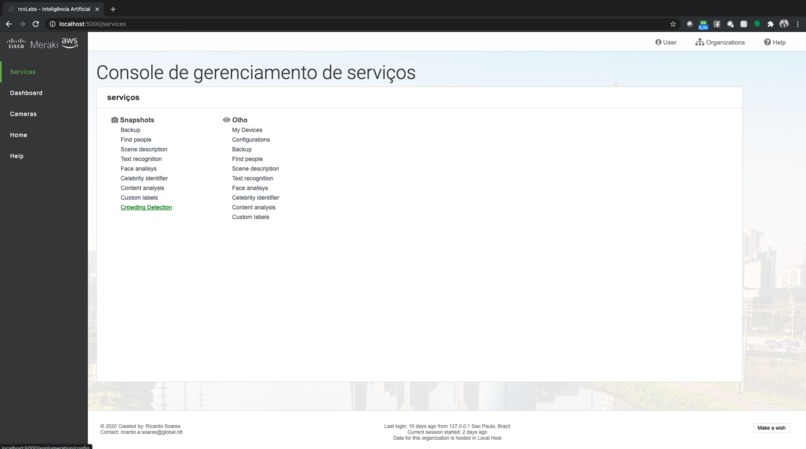

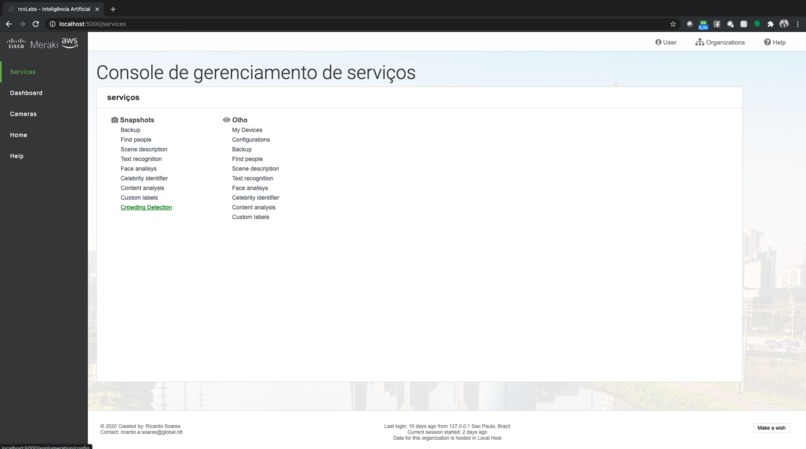

Service Menu

-

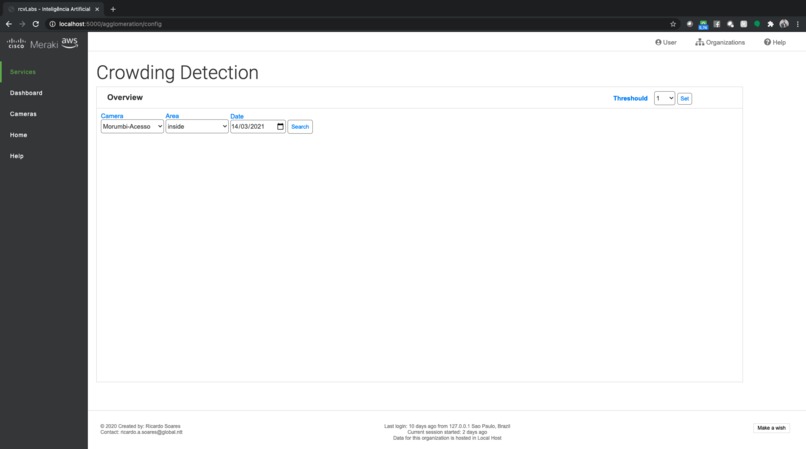

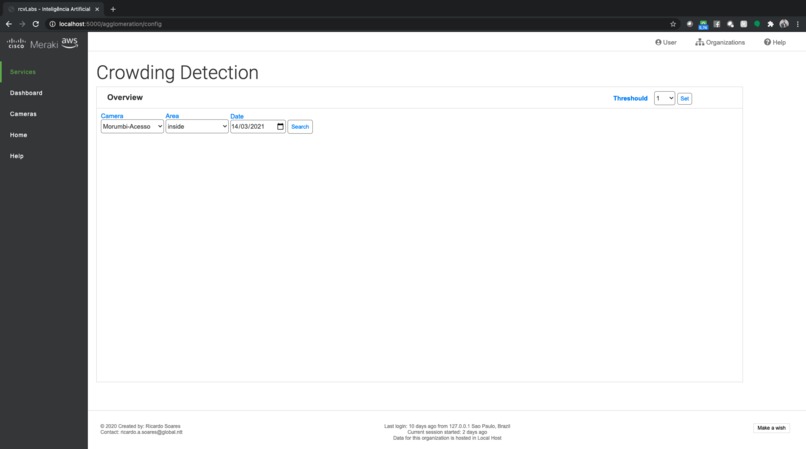

Crowing detection feature

-

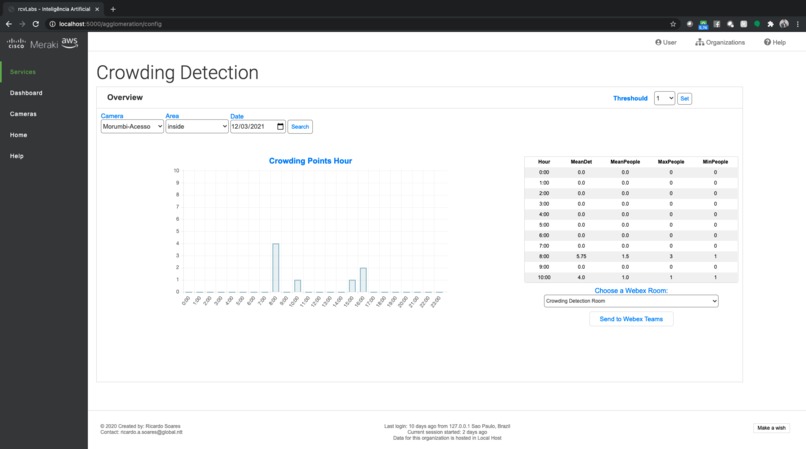

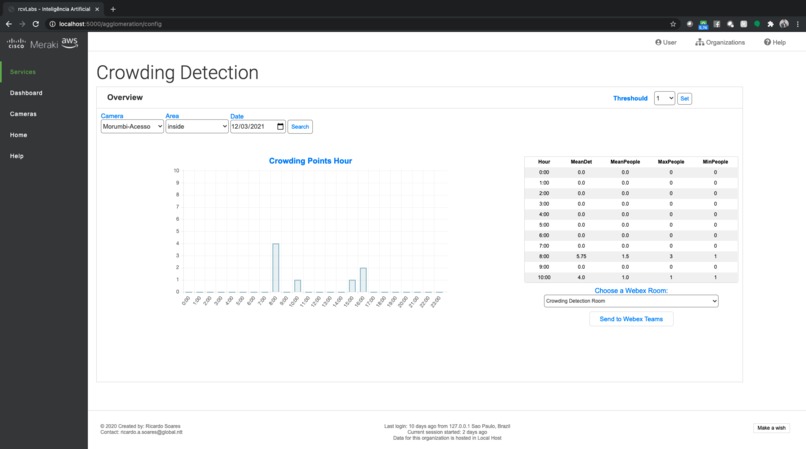

Report of crowing detection

-

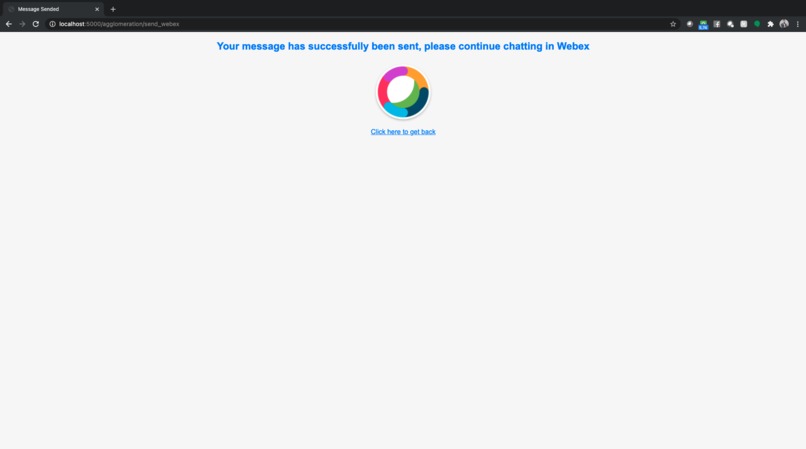

Webex Message Success Page

-

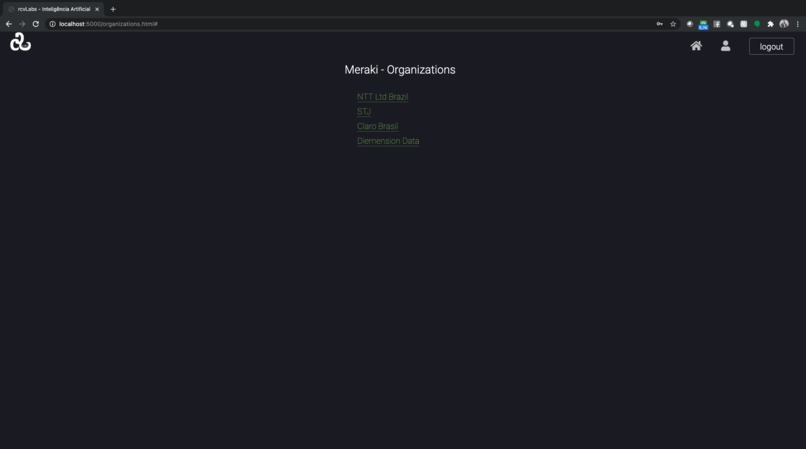

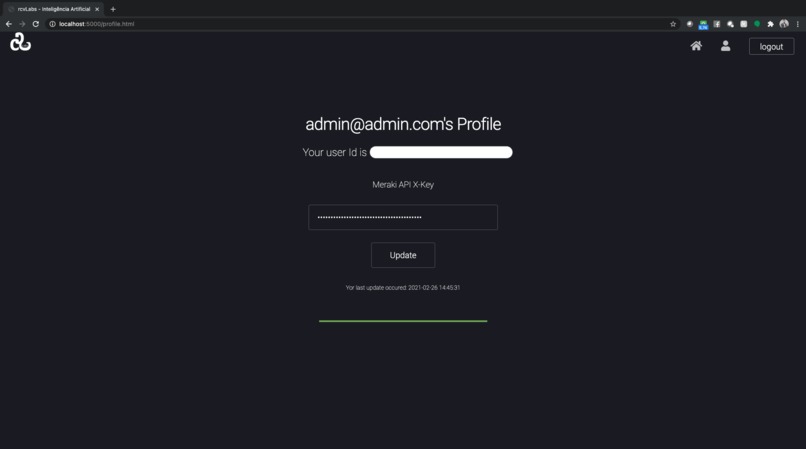

Meraki Key Configuration Screen

-

Face Detection Example 1

-

Face Detection Example 3

-

Face Detection Example 2

Inspiration

Consuming artificial intelligence should not be difficult. Companies today need to identify and adapt to changing scenarios quickly, and computer vision is a tool with many possibilities for helping executives meet that challenge. With the sudden public health changes brought by COVID-19, controlling and monitoring the number of people in an environment became a business requirement overnight. Using cameras to help large companies monitor their environments, and adding artificial intelligence (computer vision) to support the capture, qualification and presentation of the data obtained, seems to me a big opportunity to help decision makers. My goal is to create a platform where companies can easily register their cameras and consume multiple services quickly, simply and according to their needs.

What it does

It is a cloud-based platform that consumes the Meraki API, maps the environment and registers cameras. The portal expands the computer vision capability of the MV cameras, using the captured video as well as the data the camera publishes over MQTT to extract useful information for further processing. For the Hackathon I focused on developing a crowding detection feature. The idea is to set a threshold for the number of people that counts as crowding; if the number of people exceeds that threshold, the system generates a crowding detection event. From this data we generate a daily report that can be shared in Webex Teams rooms as needed.
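The threshold rule described above could be sketched roughly as follows. This is an illustration under stated assumptions, not the project's actual code: the names `CrowdingEvent` and `detect_crowding` are hypothetical, readings are assumed to arrive as time-ordered `(timestamp, people_count)` pairs, and consecutive readings above the threshold are grouped into a single event.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass
class CrowdingEvent:
    """One continuous period during which the count stayed above the threshold."""
    start_ts: int    # timestamp of the first reading above the threshold
    end_ts: int      # timestamp of the last reading above the threshold
    peak_count: int  # highest people count seen during the period


def detect_crowding(readings: Iterable[Tuple[int, int]], threshold: int) -> List[CrowdingEvent]:
    """Group consecutive readings whose count exceeds `threshold` into events.

    `readings` is assumed to be (timestamp, people_count) pairs in time order.
    A count equal to the threshold does not trigger an event.
    """
    events: List[CrowdingEvent] = []
    current: Optional[CrowdingEvent] = None
    for ts, count in readings:
        if count > threshold:
            if current is None:
                # First reading above the threshold opens a new event.
                current = CrowdingEvent(ts, ts, count)
            else:
                # Still crowded: extend the open event.
                current.end_ts = ts
                current.peak_count = max(current.peak_count, count)
        elif current is not None:
            # Count dropped back to or below the threshold: close the event.
            events.append(current)
            current = None
    if current is not None:
        events.append(current)
    return events
```

Calling `detect_crowding(readings, threshold=5)` on a day of people counts would return one event per crowded period, which is the kind of record a daily report can be built from.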

How we built it

Using microservices, I built a fully distributed architecture in which several components each handle a specific task. All of them run in Docker and are implemented in Python with Flask. To support the application, I installed an MQTT server (Mosquitto) and a database (MongoDB), both running in containers.

I developed an MQTT client in Python that consumes the camera's MQTT messages and acts as a gateway, forwarding this information through REST APIs. I created a camera recording service that consumes the RTSP stream of a specific camera to save images, and added an AI algorithm that identifies in real time whether or not a face is present in the capture. The information collected from the MQTT messages and from face detection is stored in MongoDB for each saved image. The system saves images only when people are detected, using the MQTT messages to identify these events. The idea is to develop more features on top of this data in the future. To implement the crowding detection service, I record the people-count information from the MQTT messages whenever these events occur.

To make things easier for the customer, I created a web interface similar to the Meraki Dashboard using HTML5, CSS, Java and Python Flask. The interface consumes the services I created through REST APIs. In the end, I present the results to the user in an intuitive format. I also added the option to share the report via Webex Teams, to ease communication between teams and speed up the response to crowding incidents.
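As a rough illustration of the MQTT-to-REST gateway idea, the sketch below turns one camera message into a flat record and builds the HTTP request that would forward it. Everything specific here is an assumption rather than the project's code: the topic layout `/merakimv/<serial>/<zone>`, the payload shape `{"ts": ..., "counts": {"person": N}}`, the endpoint `GATEWAY_URL`, and the function names are all hypothetical. Only the standard library is used, and nothing is sent over the network.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical REST endpoint of the service that stores people counts.
GATEWAY_URL = "http://localhost:5000/api/people-count"


def parse_mv_message(topic: str, payload: bytes) -> Optional[dict]:
    """Turn one MQTT message into a flat record, or None if it is not a people count.

    Assumes topics of the form /merakimv/<serial>/<zone> and a JSON payload
    such as {"ts": 1600000000000, "counts": {"person": 3}}.
    """
    parts = topic.strip("/").split("/")
    if len(parts) != 3 or parts[0] != "merakimv":
        return None
    try:
        data = json.loads(payload)
        people = int(data["counts"]["person"])
        ts = int(data["ts"])
    except (ValueError, KeyError, TypeError):
        # Malformed JSON or a message type without a people count.
        return None
    return {"serial": parts[1], "zone": parts[2], "ts": ts, "people": people}


def build_post(record: dict, url: str = GATEWAY_URL) -> urllib.request.Request:
    """Build (but do not send) the REST call that forwards the record."""
    body = json.dumps(record).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )
```

In a real client these two functions would be called from the MQTT library's message callback, and the request would be sent with `urllib.request.urlopen(build_post(record))`. Keeping parsing separate from transport makes the gateway easy to test without a broker or a running REST service.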

Challenges we ran into

For me as a network engineer, building programming skills is a challenge in itself. To create everything the way I had imagined it, I needed several different languages and technologies to compose the project as a whole: Python, databases, HTML5, Java, CSS, Jinja, etc. I needed to understand how Docker works (just the basics I needed) to run the application. Performing real-time image processing was also a big challenge.

Accomplishments that we're proud of

I am currently able to capture images, identify possible crowding, and generate graphs for reports from the captured information. I can identify whether or not there is a face in a captured image. I created an intuitive graphical interface suited to easy and agile consumption of the product. My main point of pride, though, was developing the ability to embed the AI algorithm in the code to detect faces. It is not the focus for this Hackathon, but I have laid the foundation for developing new services.
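To make the reporting step more concrete, here is a small sketch of how stored people counts might be reduced to the numbers behind a report graph. It is an assumption-laden illustration, not the project's implementation: `hourly_peaks` and `format_report` are hypothetical names, timestamps are assumed to be Unix seconds, and hours are taken in UTC.

```python
from collections import defaultdict
from datetime import datetime, timezone
from typing import Dict, Iterable, Tuple


def hourly_peaks(readings: Iterable[Tuple[int, int]]) -> Dict[int, int]:
    """Return {hour_of_day: highest people count}.

    `readings` is assumed to be (unix_seconds, people_count) pairs;
    hours are computed in UTC.
    """
    peaks: Dict[int, int] = defaultdict(int)
    for ts, count in readings:
        hour = datetime.fromtimestamp(ts, tz=timezone.utc).hour
        peaks[hour] = max(peaks[hour], count)
    return dict(peaks)


def format_report(peaks: Dict[int, int], threshold: int) -> str:
    """Render the hourly peaks as Markdown lines, flagging hours above the threshold."""
    lines = ["**Crowding report**"]
    for hour in sorted(peaks):
        flag = " (crowding)" if peaks[hour] > threshold else ""
        lines.append(f"- {hour:02d}:00 peak {peaks[hour]} people{flag}")
    return "\n".join(lines)
```

The dictionary from `hourly_peaks` can feed a bar chart directly, while the text from `format_report` is the sort of summary that could accompany the chart when the report is shared with a team.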

What we learned

Creating a web page. Document-oriented databases (MongoDB). Languages and frameworks (HTML5, Jinja, Java, Python Flask, etc.). Creating APIs in Python. Understanding clearly how the Meraki APIs work. Understanding the development world more deeply. Using and manipulating captured and received data. Capturing images via RTSP streaming. Saving and working with these images. Extracting valuable information for businesses.

What's next for MV Computational Vision Improvements

Refine the development so far to turn it into a scalable platform that can be easily accessed and consumed via the web. Add the computer vision services already available through the AWS APIs, such as face recognition (I have created the base on which to build this service). Keep adding more of the computer vision services available on AWS.
