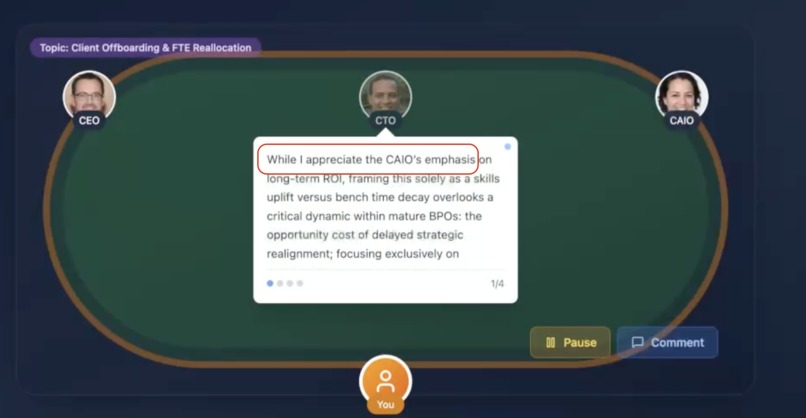

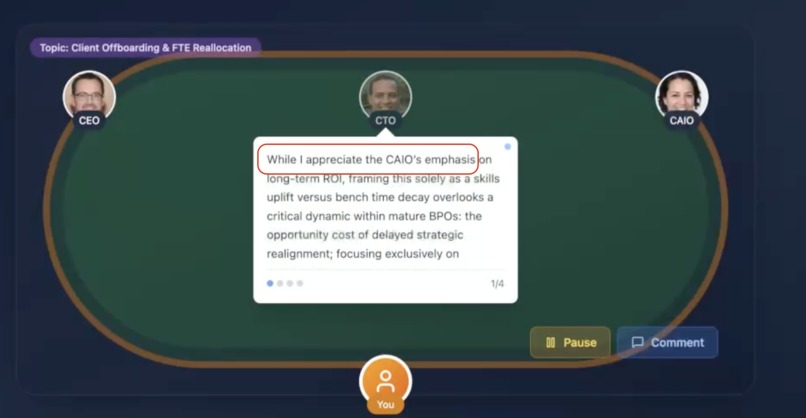

Agents exhibit emergent behavior, challenge each other, and provide contextually aware strategic insights that mirror real boardroom dynamics.

-

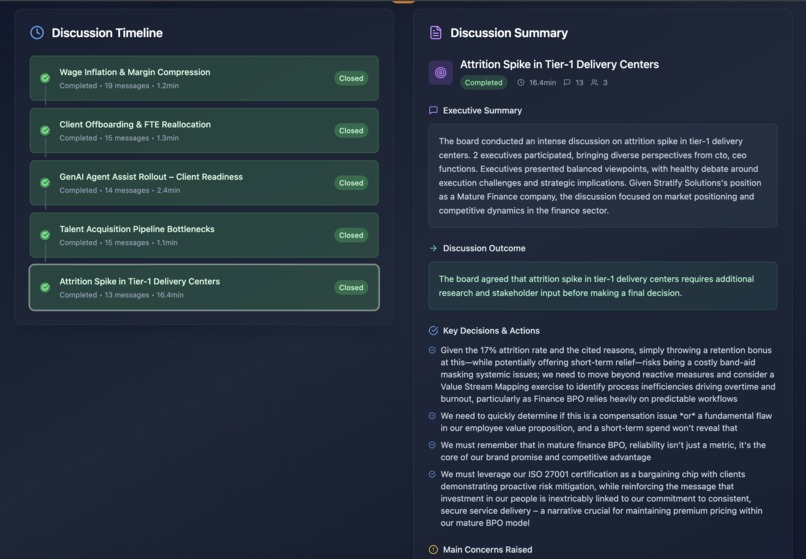

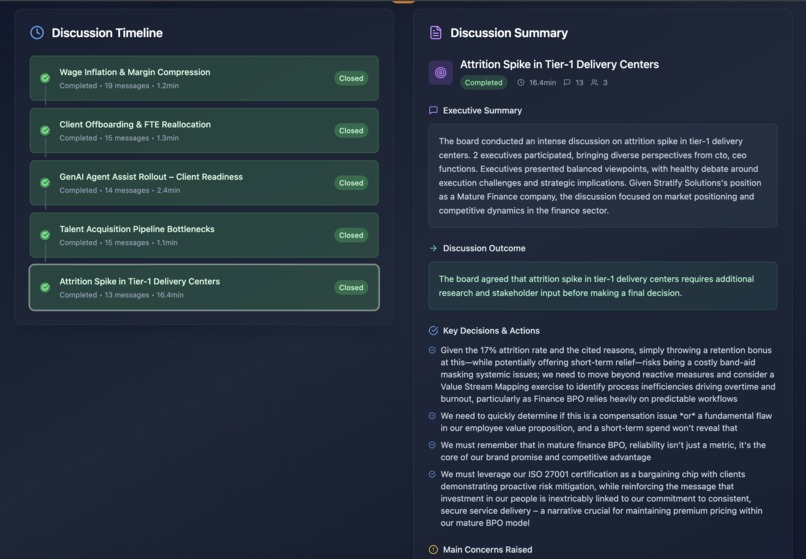

Topic discussion summary

-

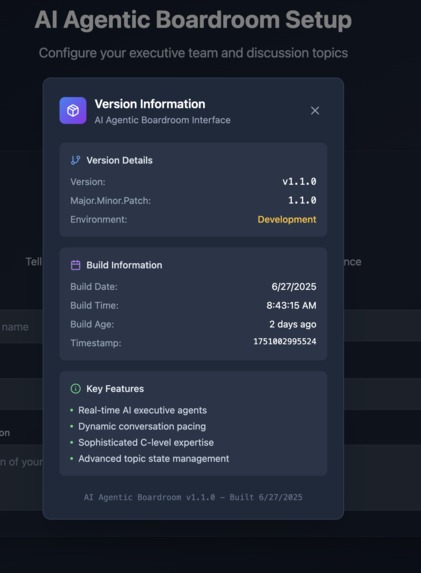

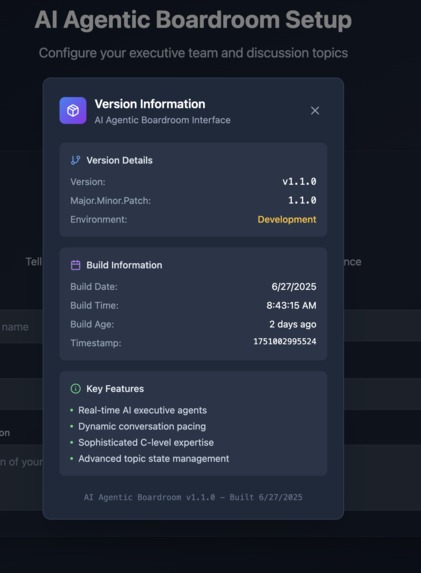

Version tracker of the web app

-

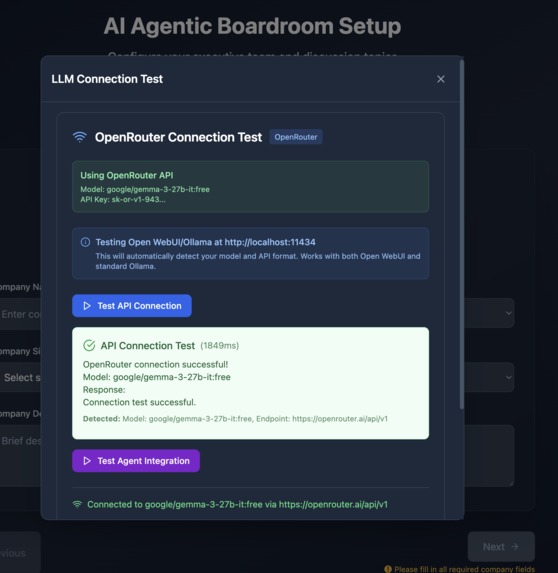

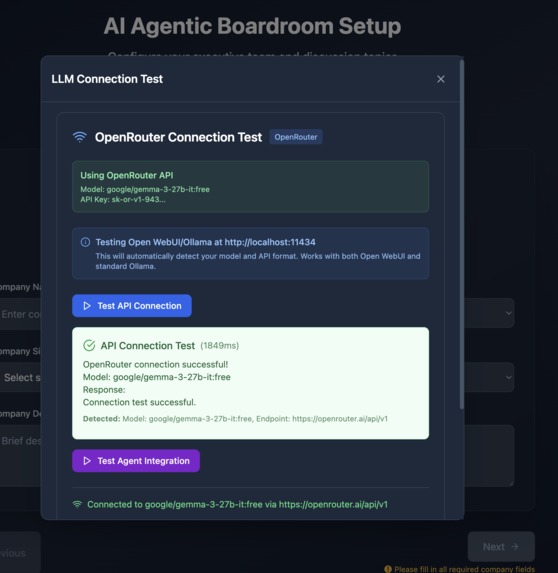

LLM tester. Discovers connections to OpenWebUI, Ollama, OpenAI, and OpenRouter (when any of them is available on the host).

-

Selection of C-level agents. Each one brings a unique perspective on its specific domain.

-

AI agentic boardroom. Agents = Boardmembers

-

Option to comment and change the direction of the conversation

Inspiration

I believe the future of work is collaborative, fast, and powered by AI. This project is my vision for that future: a place where anyone can assemble a dream team of expert advisors in seconds, and make better decisions, faster. Every company, regardless of size or budget, could benefit from the wisdom of a full C-suite or seasoned domain experts.

What it does

On-demand AI Executive Team

Company Setup: Define your company context, industry, and stage

Executive Selection: Choose your C-level team from available agents

Topic Configuration: Set discussion topics with priorities and durations

Result: Watch AI agents (board members) engage in strategic discussions in real time

(Optional) Interactive Participation: Interrupt and guide conversations in real time
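
As a rough illustration of the setup steps above, the sketch below models a meeting configuration and orders topics by priority. The type names, field names, and the `planAgenda` helper are hypothetical and chosen for illustration only; they are not taken from the app's actual code.

```typescript
// Hypothetical types: the field names are illustrative, not the app's real schema.
type Priority = "high" | "medium" | "low";

interface Topic {
  title: string;
  priority: Priority;
  durationMinutes: number;
}

interface MeetingConfig {
  company: { name: string; industry: string; stage: string };
  executives: string[]; // e.g. ["CEO", "CFO", "CTO"]
  topics: Topic[];
}

const PRIORITY_RANK: Record<Priority, number> = { high: 0, medium: 1, low: 2 };

// Order topics by priority (high first), keeping the original order within a priority.
function planAgenda(config: MeetingConfig): Topic[] {
  return config.topics
    .map((topic, index) => ({ topic, index }))
    .sort(
      (a, b) =>
        PRIORITY_RANK[a.topic.priority] - PRIORITY_RANK[b.topic.priority] ||
        a.index - b.index
    )
    .map(({ topic }) => topic);
}

// Total planned meeting length across all topics.
function totalMinutes(config: MeetingConfig): number {
  return config.topics.reduce((sum, topic) => sum + topic.durationMinutes, 0);
}
```

A single configuration object like this would be enough to drive the rest of a session: which agents take part, what they discuss, and for how long.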

How we built it

- Node.js 18+

- TypeScript

- Ollama installed and running, or an OpenRouter API key

- llama3.2:latest (or smarter) model downloaded for the local Ollama instance

- Hosted by Netlify

AI Integration:

- Local LLM: Privacy-focused with Ollama integration

- OpenRouter AI: Access to multiple models via OpenAI-compatible API

- Context Awareness: Full conversation history in every request

- Fallback System: Graceful degradation when the LLM is unavailable

- Real-time Generation: No pre-computed responses
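
The fallback behavior described above could look roughly like the following sketch. It assumes each backend (for example a local Ollama instance or OpenRouter) is wrapped in a hypothetical `Provider` object; the names `Provider`, `ChatMessage`, and `completeWithFallback` are illustrative assumptions, and the real network calls are deliberately left out.

```typescript
// Hypothetical message and provider shapes, loosely following the common chat format.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface Provider {
  name: string;
  // A real implementation would call the LLM backend here.
  complete: (messages: ChatMessage[]) => Promise<string>;
}

interface Completion {
  source: string;
  text: string;
}

// Try each provider in order; if all fail or return nothing, degrade to a fixed message.
async function completeWithFallback(
  providers: Provider[],
  messages: ChatMessage[],
  fallbackText: string
): Promise<Completion> {
  for (const provider of providers) {
    try {
      const text = await provider.complete(messages);
      if (text.trim().length > 0) {
        return { source: provider.name, text };
      }
    } catch {
      // Provider unavailable or errored: move on to the next one.
    }
  }
  return { source: "fallback", text: fallbackText };
}
```

Because the full `messages` array is passed on every call, each provider sees the complete conversation history, which matches the context-awareness point in the list.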

Challenges we ran into

- High CPU usage from Ollama: agent topics/threads ran into endless loops and sent too many requests to the LLM.

- The initial PoC version used hardcoded text data. After I added the real LLM service, the LLM kept trying to switch back to the hardcoded text version, which was easier and faster to integrate.

- Environment variables and deploys on Netlify, as this was my first time using managed Netlify hosting.

- When requests are sent in the wrong sequence and not properly closed, the impact on the system is exponential: within just a few prompts it becomes unusable.
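
One common way to keep requests in sequence is to chain them through a small queue so that each request finishes before the next one starts. The sketch below shows that idea only; the `RequestQueue` class is a hypothetical illustration and not necessarily how the project solved the problem.

```typescript
// Minimal serial queue: tasks run one at a time, in the order they were enqueued.
class RequestQueue {
  private tail: Promise<void> = Promise.resolve();

  enqueue<T>(task: () => Promise<T>): Promise<T> {
    // Start this task only after everything queued before it has settled.
    const result = this.tail.then(task);
    // Swallow the outcome on the internal chain so one failure does not block later tasks.
    this.tail = result.then(
      () => undefined,
      () => undefined
    );
    return result;
  }
}
```

With a queue like this, every agent would submit its LLM call through `enqueue`, so at most one request is in flight and a failed request still releases the queue for the next one.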

Accomplishments that we're proud of

- LLM Request Coordination: Agents send and process just the right number of requests every time to support real-time updates for all participants.

- Real-time LLM Integration: Supports both a locally running model and API-based models, which makes the app ready to run your own private model(s)

- Multi-Agent Coordination: Agents interact naturally without central orchestration

- Dynamic Personality System: Agents can be challenging, salty, or supportive based on conversation context

- Adaptive Timing: Smart conversation pacing based on meeting duration and urgency

- Emergent Behavior: Conversations develop organically with surprising insights

- User Interruption Handling: Non-blocking real-time user input integration

- Contextual Memory: Agents remember everything said and adapt accordingly, with awareness of the full conversation history and user input
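
To make the user interruption point more concrete, here is a minimal sketch in which user comments are buffered without blocking and folded into the shared history before the next agent speaks. The `Boardroom` class, its method names, and the synchronous `respond` callback (a stand-in for a real LLM call) are all assumptions made for illustration.

```typescript
interface Turn {
  speaker: string;
  text: string;
}

class Boardroom {
  readonly history: Turn[] = [];
  private pendingUserInput: string[] = [];

  // Called from the UI at any time; it only buffers the text and returns immediately.
  interrupt(text: string): void {
    this.pendingUserInput.push(text);
  }

  // Before each agent turn, move buffered user comments into the shared history,
  // so the next speaker sees them as part of the conversation.
  nextTurn(speaker: string, respond: (history: Turn[]) => string): Turn {
    for (const text of this.pendingUserInput.splice(0)) {
      this.history.push({ speaker: "User", text });
    }
    const turn: Turn = { speaker, text: respond(this.history) };
    this.history.push(turn);
    return turn;
  }
}
```

The design choice here is that an interruption never cancels a response in progress; it simply becomes part of the context for whichever agent speaks next.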

Agentic AI System Highlights: each agent is an autonomous entity with:

- Unique personality and expertise

- Memory of full conversation history

- Ability to challenge and disagree

- Context-aware response generation

- Real-time LLM integration
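
One way to combine these properties is to build every LLM request from the agent's persona plus the full conversation so far. The sketch below assumes hypothetical `Agent` and `Turn` shapes and a `buildMessages` helper; the prompt wording is illustrative and not the project's actual prompt.

```typescript
interface Agent {
  role: string; // e.g. "CFO"
  personality: string; // e.g. "skeptical and direct"
  expertise: string; // e.g. "finance and risk"
}

interface Turn {
  speaker: string;
  text: string;
}

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Build a chat request: persona as the system prompt, then the entire history.
// The agent's own earlier turns are marked "assistant"; everyone else is "user".
function buildMessages(agent: Agent, topic: string, history: Turn[]): ChatMessage[] {
  const system: ChatMessage = {
    role: "system",
    content:
      `You are the ${agent.role} in a board meeting about "${topic}". ` +
      `Personality: ${agent.personality}. Expertise: ${agent.expertise}. ` +
      `Challenge other board members when you disagree.`,
  };
  const past = history.map(
    (turn): ChatMessage => ({
      role: turn.speaker === agent.role ? "assistant" : "user",
      content: `${turn.speaker}: ${turn.text}`,
    })
  );
  return [system, ...past];
}
```

Sending the whole history on each request is simple but grows with meeting length, so a longer session would eventually need summarization or a model with a larger context window.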

What we learned

- Vibe coding takes time. 80% of the project is done in the first 20% of the time, but the other 80% of the time goes into the missing 20% of the project, which is the challenging part.

- Bolt.new has its own style of doing things, and it takes some time working with it to get used to it. The amount of front-end work it produces (when given a proper prompt) is huge.

- I should tell the LLM more often: "do not perform any other changes" (apart from what I am asking it to help with).

- Using chat mode saves a lot of tokens

What's next for Agentic Boardroom - Next-Gen Executive Decision Making

- Update to better LLMs: models better trained on the subject, or a fine-tuned model from a provider

- Local model support for privacy and a wide context containing the vast majority of a real company's data

- Add voices

- Analytics Dashboard: Meeting insights and decision tracking

- Meeting Recording: Export conversations and summaries

- Multi-language Support: Global boardroom simulations

- Event-Driven Architecture: Switch to event orchestrator pattern

- BYOE: Bring Your Own Executive support

- Potentially expand to offer a layer of agentic interactions as a service

- A service to create agents on demand, by training LLMs on someone's online content or through generic system prompt interventions

- Adding tests to avoid degradation of quality

- Add better logging

Note for hackathon judges: The initial bolt.new link for the project is https://bolt.new/~/sb1-kyxjhpnk. A public duplicate is also available at https://bolt.new/~/sb1-hxm9sq45. Both of those versions require an LLM API key, which should not be hardcoded, so it is better to try the public Netlify version at https://jolly-salamander-77a282.netlify.app/ or, once available, the public domain smartboardroom.online.

Built With

- bolt.new

- git

- github

- langchain

- netlify

- node.js

- ollama

- openai

- openrouter

- react

- tailwind

- typescript

- vite
