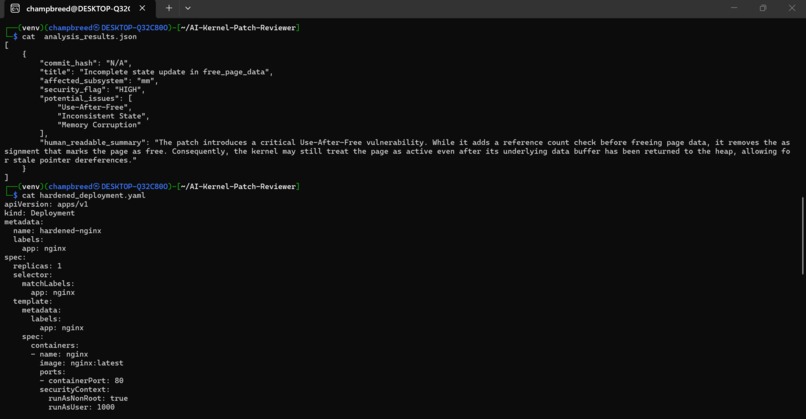

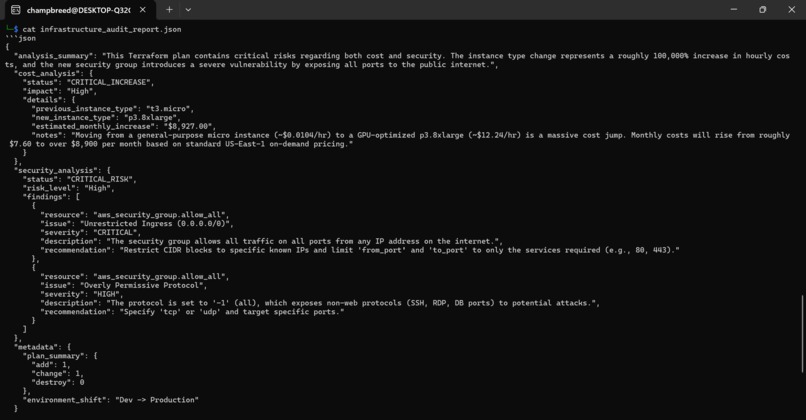

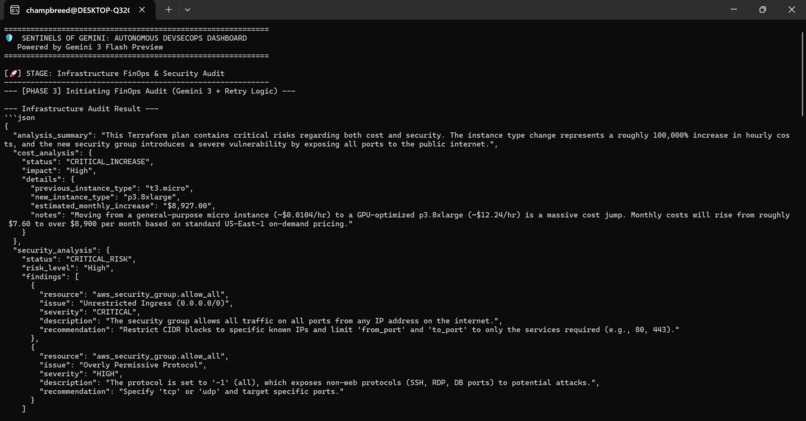

The output is rendered in structured JSON, providing the pipeline with clear, actionable risk levels and technical recommendations.
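The project does not publish its JSON schema, so the sketch below assumes a hypothetical shape with `risk_level`, `summary`, and `recommendations` fields. It shows one way a pipeline could validate the model's reply and turn it into the REJECT/SUCCESS signal, failing closed when the output cannot be parsed. The names `parse_finding`, `pipeline_signal`, and `signal_for` are illustrative.

```python
import json
from dataclasses import dataclass

# Hypothetical schema: these field names and levels are assumptions,
# not the project's published output format.
ALLOWED_RISK_LEVELS = {"LOW", "MEDIUM", "HIGH", "CRITICAL"}
BLOCKING_RISK_LEVELS = {"HIGH", "CRITICAL"}


@dataclass
class AuditFinding:
    risk_level: str
    summary: str
    recommendations: list[str]


def parse_finding(raw: str) -> AuditFinding:
    """Parse and validate the model's JSON reply; raise ValueError on bad input."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    level = str(data.get("risk_level", "")).upper()
    if level not in ALLOWED_RISK_LEVELS:
        raise ValueError(f"unknown risk_level: {level!r}")
    recs = data.get("recommendations", [])
    if not isinstance(recs, list) or not all(isinstance(r, str) for r in recs):
        raise ValueError("recommendations must be a list of strings")
    return AuditFinding(level, str(data.get("summary", "")), recs)


def pipeline_signal(finding: AuditFinding) -> str:
    """Map a validated finding to the gate decision consumed by the CI job."""
    return "REJECT" if finding.risk_level in BLOCKING_RISK_LEVELS else "SUCCESS"


def signal_for(raw: str) -> str:
    """Fail closed: output that cannot be parsed or validated is rejected."""
    try:
        return pipeline_signal(parse_finding(raw))
    except ValueError:
        return "REJECT"
```

In a CI job, the signal could be mapped to a process exit code (for example `sys.exit(0 if signal == "SUCCESS" else 1)`), since GitHub Actions marks a step as failed when its command exits non-zero. Failing closed is a deliberate choice here: a malformed model reply blocks the deployment instead of silently letting it through.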

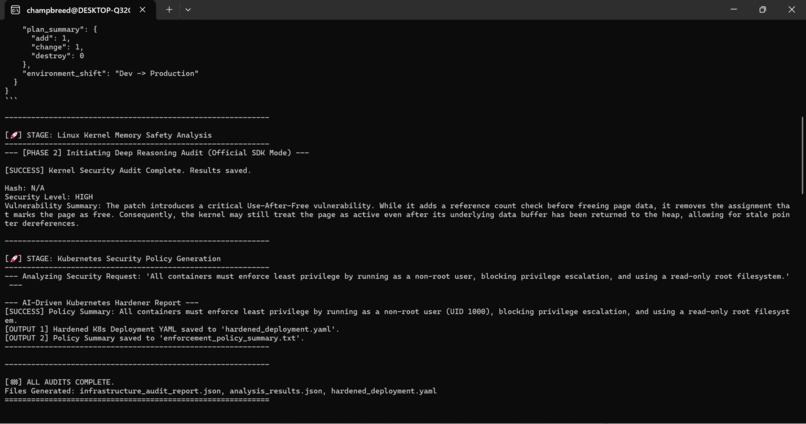

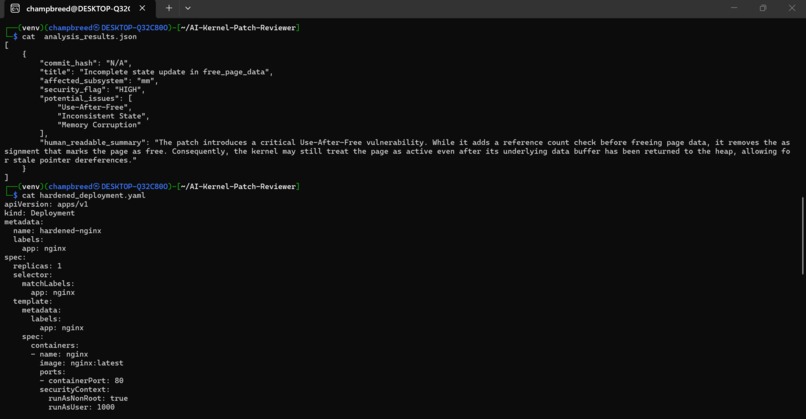

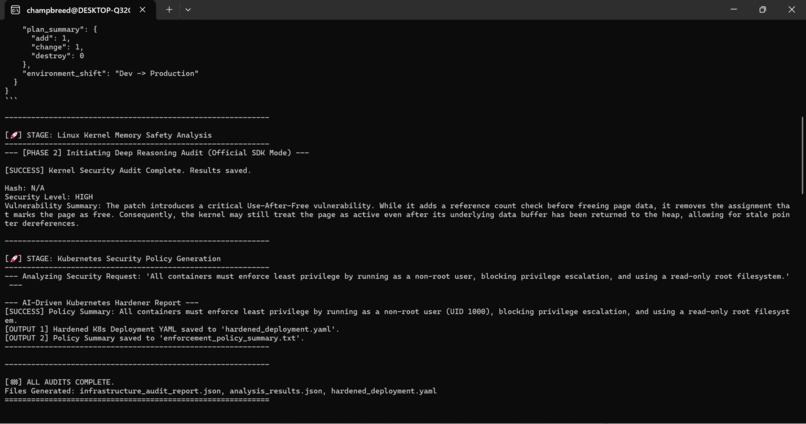

This terminal view demonstrates the system's dual-threat capability across different technical layers. In the Kernel Memory Safety stage

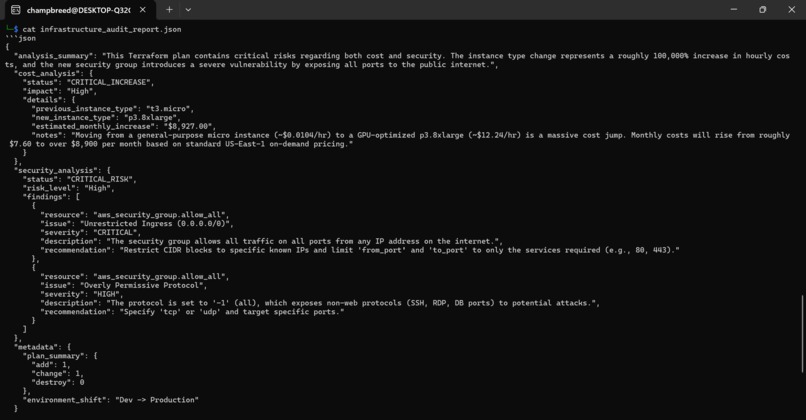

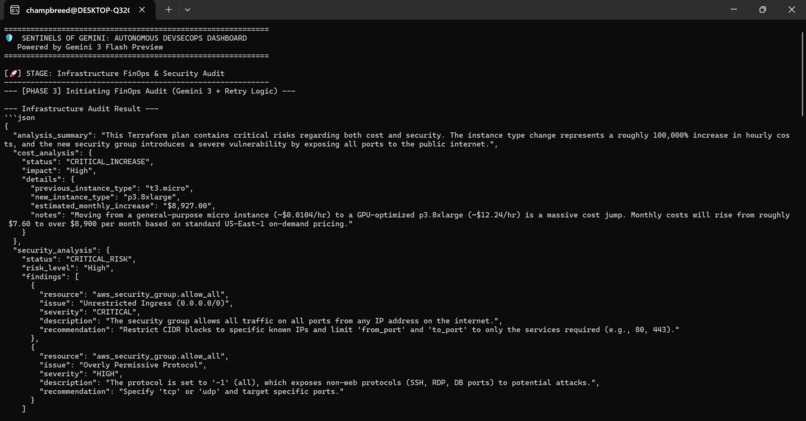

This final screenshot provides a detailed inspection of the audit artifacts generated by the system.

The AI identifies a "CRITICAL_INCREASE" in costs, specifically flagging a massive jump from a t3.micro instance to a GPU-optimized p3.8xlarge.
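A cost jump like this can also be computed deterministically from Terraform's machine-readable plan (`terraform show -json <planfile>`), whose `resource_changes` entries carry `before` and `after` attribute values. The sketch below is not the project's code: the hourly prices are assumed us-east-1 on-demand Linux rates for illustration only, 730 hours approximates a month, and `CRITICAL_THRESHOLD_USD` is a hypothetical cutoff.

```python
import json

# Assumed on-demand hourly prices (USD, Linux, us-east-1), for illustration only.
# A real check should fetch current rates instead of hard-coding them.
HOURLY_USD = {"t3.micro": 0.0104, "p3.8xlarge": 12.24}
HOURS_PER_MONTH = 730  # common approximation of one month of continuous runtime
CRITICAL_THRESHOLD_USD = 1_000.0  # hypothetical monthly increase that triggers the flag


def instance_cost_drifts(plan_json: str) -> list[dict]:
    """Report monthly cost changes for aws_instance type changes in a Terraform plan.

    `plan_json` is the text produced by `terraform show -json <planfile>`.
    """
    plan = json.loads(plan_json)
    drifts = []
    for rc in plan.get("resource_changes", []):
        if rc.get("type") != "aws_instance":
            continue
        change = rc.get("change", {})
        # `before` is null for new resources and `after` is null for deletions.
        before = (change.get("before") or {}).get("instance_type")
        after = (change.get("after") or {}).get("instance_type")
        if after is None or before == after:
            continue  # deletions and unchanged types are out of scope here
        entry = {"address": rc.get("address"), "from": before, "to": after}
        if after not in HOURLY_USD or (before is not None and before not in HOURLY_USD):
            entry["status"] = "UNPRICED"  # never guess a price missing from the table
        else:
            old_hourly = HOURLY_USD[before] if before is not None else 0.0
            delta = (HOURLY_USD[after] - old_hourly) * HOURS_PER_MONTH
            entry["monthly_delta_usd"] = round(delta, 2)
            entry["status"] = "CRITICAL_INCREASE" if delta >= CRITICAL_THRESHOLD_USD else "OK"
        drifts.append(entry)
    return drifts
```

With these assumed rates, moving one instance from t3.micro to p3.8xlarge adds about (12.24 - 0.0104) × 730 ≈ $8,928 per month. A deterministic pre-check like this could hand the model exact figures to reason about rather than relying on it to recall prices.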

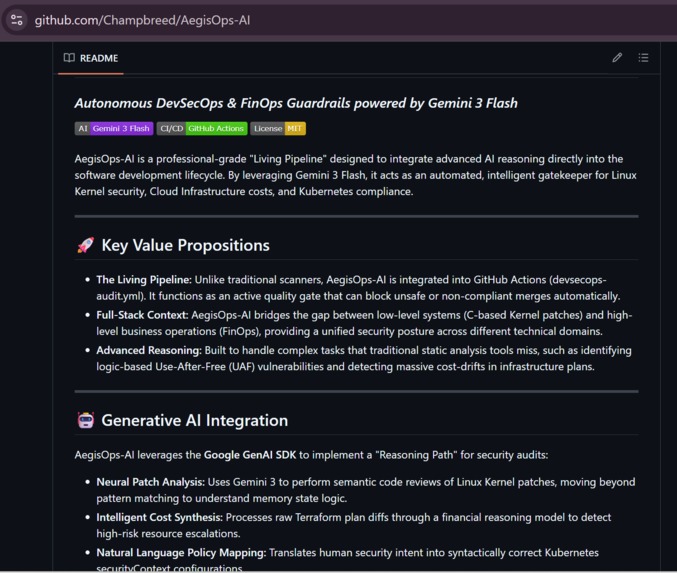

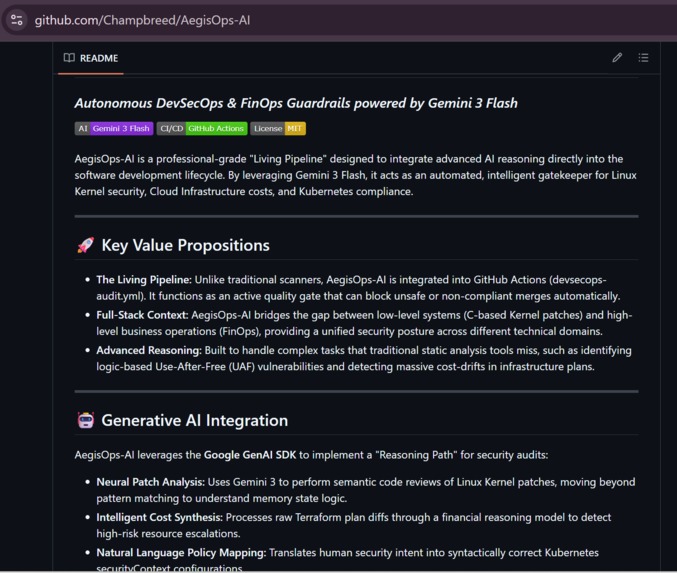

README: AegisOps-AI

A professional "Living Pipeline" powered by Gemini 3 Flash for autonomous DevSecOps.

Inspiration

In the modern cloud-native era, security is often reactive rather than proactive. Organizations lose millions to "Silent Disasters": minor configuration oversights that lead to massive cloud cost drifts or critical kernel-level vulnerabilities. Existing static application security testing (SAST) tools often fail to understand the logic and state behind the code. I was inspired to build AegisOps-AI to bridge this gap, creating a "Living Pipeline" that doesn't just scan for patterns but actually reasons through the security and financial implications of every commit, from the deep Linux kernel to the high-level cloud orchestration layer.

What it does

AegisOps-AI is an autonomous DevSecOps sentinel that acts as an intelligent gatekeeper within the CI/CD pipeline. It operates across three critical domains:

- Infrastructure FinOps & Security: It audits Terraform plans to identify "cost explosions" (e.g., accidental high-tier GPU upgrades) and permissive security groups that violate compliance frameworks like SOC 2.

- Kernel Memory Safety Analysis: It performs deep reasoning on raw Git patches for the Linux Kernel, specifically targeting complex logic bugs like Use-After-Free (UAF) vulnerabilities that standard scanners miss.

- Kubernetes Hardening: It acts as a security architect, translating natural language requests into production-ready, "Least Privilege" Kubernetes manifests (enforcing non-root users and read-only filesystems).
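For the Kubernetes domain, a generated manifest can be checked deterministically before it is accepted. The sketch below assumes the manifest has already been loaded into a Python dict (for example with PyYAML's `yaml.safe_load`) and tests the least-privilege settings named above (`runAsNonRoot`, `readOnlyRootFilesystem`) plus `allowPrivilegeEscalation: false`, a common hardening addition that the source does not mention. The function names are hypothetical.

```python
def pod_spec_of(manifest: dict) -> dict:
    """Return the pod spec of a Pod, or the pod template spec of a workload."""
    spec = manifest.get("spec") or {}
    if manifest.get("kind") == "Pod":
        return spec
    # Deployments, StatefulSets, DaemonSets and Jobs nest it under spec.template.spec.
    return (spec.get("template") or {}).get("spec") or {}


def least_privilege_violations(manifest: dict) -> list[str]:
    """List container settings that miss the assumed least-privilege baseline."""
    spec = pod_spec_of(manifest)
    pod_ctx = spec.get("securityContext") or {}
    problems = []
    for container in (spec.get("containers") or []) + (spec.get("initContainers") or []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext") or {}
        # A container-level runAsNonRoot takes precedence over the pod-level value.
        if ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot")) is not True:
            problems.append(f"{name}: runAsNonRoot is not true")
        # readOnlyRootFilesystem and allowPrivilegeEscalation exist only per container.
        if ctx.get("readOnlyRootFilesystem") is not True:
            problems.append(f"{name}: readOnlyRootFilesystem is not true")
        if ctx.get("allowPrivilegeEscalation") is not False:
            problems.append(f"{name}: allowPrivilegeEscalation is not false")
    return problems
```

An empty list means the manifest meets this baseline; anything else could be fed back to the model as a correction request or turned into a REJECT.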

How we built it

The platform is built on a robust, asynchronous Python-based engine powered by the Google GenAI SDK.

- Core Logic: I utilized Gemini 3 Flash for its exceptional reasoning-to-latency ratio, enabling real-time auditing of technical files.

- Pipeline Integration: The dashboard is integrated into GitHub Actions, so an audit is triggered automatically on every pull request.

- Structured Outputs: I engineered precise system instructions to force the AI to output structured JSON, which is then parsed to generate "REJECT" or "SUCCESS" signals for the deployment pipeline.

- Safety Guardrails: I implemented custom retry logic and exponential backoff to ensure the pipeline remains resilient under high-frequency auditing.
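The retry code itself is not shown, so here is a minimal sketch of exponential backoff with "full jitter" around an async call. `TransientError` is a hypothetical stand-in for whatever the SDK raises on rate limiting (HTTP 429) or temporary server errors, and the delay parameters are illustrative.

```python
import asyncio
import random
from typing import Awaitable, Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as HTTP 429 or 503."""


async def with_backoff(
    call: Callable[[], Awaitable[T]],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
) -> T:
    """Await `call()`, retrying on TransientError with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 0
    while True:
        try:
            return await call()
        except TransientError:
            attempt += 1
            if attempt >= max_attempts:
                raise  # out of attempts: surface the error to the pipeline
            # The cap doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: sleep a random time in [0, cap].
            await asyncio.sleep(random.uniform(0, cap))
```

`call` is a zero-argument factory that wraps the SDK request, rather than a coroutine object, because an already-awaited coroutine cannot be awaited again. Randomizing the wait spreads out retries from concurrent audits so they do not hit the rate limit in lockstep.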

Challenges we ran into

The most significant challenge was Contextual Logic Extraction. Teaching an AI to distinguish between a "necessary" instance upgrade and a "wasteful" one required iterating on the prompt design to include regional pricing context and workload intent. Furthermore, analyzing Linux kernel patches is notoriously difficult because of the complex memory states involved. I had to design a "Reasoning Path" that forced Gemini to evaluate the state of memory (e.g., PAGE_FREE) before and after the patch, so that its vulnerability assessment was technically grounded rather than a hallucination.
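The idea of tracking memory state before and after a patch can be illustrated with a deliberately simplified model. The sketch below is a hypothetical toy, not kernel analysis and not how Gemini is prompted: it replays an abstract sequence of `alloc`/`free`/`use` events per pointer, reports uses of freed objects and double frees, and then compares the pre-patch and post-patch sequences. Real kernel use-after-free bugs also depend on reference counting, locking, and concurrency, which this model ignores.

```python
from enum import Enum


class State(Enum):
    ALLOCATED = "allocated"
    FREED = "freed"


def find_use_after_free(events: list[tuple[str, str]]) -> list[str]:
    """Replay (op, pointer) events and report memory-state violations.

    Supported ops are "alloc", "free" and "use" (a toy event model).
    """
    state: dict[str, State] = {}
    findings = []
    for step, (op, ptr) in enumerate(events):
        if op == "alloc":
            state[ptr] = State.ALLOCATED
        elif op == "free":
            if state.get(ptr) is State.FREED:
                findings.append(f"step {step}: double free of {ptr}")
            state[ptr] = State.FREED
        elif op == "use":
            if state.get(ptr) is State.FREED:
                findings.append(f"step {step}: use-after-free of {ptr}")
        else:
            raise ValueError(f"unknown op: {op}")
    return findings


def patch_introduces_bug(before: list[tuple[str, str]], after: list[tuple[str, str]]) -> bool:
    """True if the post-patch sequence has violations and the pre-patch one has none."""
    return bool(find_use_after_free(after)) and not find_use_after_free(before)


# Example: a patch that moves the free ahead of the last use of `buf`.
before = [("alloc", "buf"), ("use", "buf"), ("free", "buf")]
after = [("alloc", "buf"), ("free", "buf"), ("use", "buf")]
print(find_use_after_free(after))  # ['step 2: use-after-free of buf']
print(patch_introduces_bug(before, after))  # True
```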

Accomplishments that we're proud of

- The "REJECT" Signal: Successfully building a system that can autonomously stop a deployment if it detects an $8,000/month cost drift or a critical security hole.

- Kernel Depth: Achieving a high-fidelity audit of C-based kernel patches—a domain usually reserved for highly specialized senior security engineers.

- Zero-Human Latency: Creating a system that provides a "Security Architect" level review in under 10 seconds, increasing developer velocity without compromising safety.

What we learned

Through this project, I gained a deep understanding of Gemini 3 Flash's multi-turn reasoning capabilities. I learned that AI is most effective in DevSecOps when it acts not as a "search engine" but as a "logic engine." I discovered how to handle rate limiting effectively in a production-grade SDK and, more importantly, how to use AI to bridge the gap between human intent (natural language) and technical enforcement (YAML/HCL).

What's next for AegisOps-AI: Autonomous DevSecOps Sentinel

The roadmap for AegisOps-AI includes:

- Real-time eBPF Observability: Integrating Falco and Cilium logs to allow Gemini to analyze live "shady" behavior in production clusters.

- Automated Remediation: Moving beyond "REJECT" signals to "FIX" signals, where the AI automatically generates a corrected patch and submits it back to the developer.

- Multi-Model Verification: Implementing a "Dual-Sentinel" mode where Gemini 3 Flash performs the initial audit and a higher-reasoning model such as Gemini 1.5 Pro verifies critical findings, with a target of 99.9% accuracy.
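The proposed Dual-Sentinel mode amounts to an escalation pipeline: a fast first pass, with a second model consulted only for severe findings. The sketch below models both auditors as plain callables so it runs without any API; the `Verdict` type, the escalation set, and the `NEEDS_REVIEW` outcome for disagreements are hypothetical policy choices, not part of the published roadmap.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    risk_level: str  # assumed scale: "LOW", "MEDIUM", "HIGH", "CRITICAL"
    rationale: str


# An auditor takes the artifact under review (diff, plan, manifest) and returns a verdict.
Auditor = Callable[[str], Verdict]

ESCALATE_ON = frozenset({"HIGH", "CRITICAL"})  # hypothetical severity cutoff


def dual_sentinel(artifact: str, fast: Auditor, verifier: Auditor) -> tuple[str, list[Verdict]]:
    """Run the fast auditor first; send only severe findings to the verifier."""
    first = fast(artifact)
    if first.risk_level not in ESCALATE_ON:
        return "SUCCESS", [first]  # the verifier is never called on the common path
    second = verifier(artifact)
    if second.risk_level in ESCALATE_ON:
        return "REJECT", [first, second]  # both models agree the change is severe
    # The models disagree: this hypothetical policy routes the change to a human.
    return "NEEDS_REVIEW", [first, second]
```

Calling the verifier only on escalated findings keeps the slower model off the common path, so most commits still get the fast first-pass latency.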

Built With

- amazon-web-services

- c

- devsecops

- finops

- geminiapi

- github

- google-genai-sdk

- hcl

- kubernetes

- linux-kernel

- python

- terraform
