Try it out yourself!

Inspiration

One of the biggest challenges, and one that we are more acutely aware of now than ever, is that it is really hard to deliver the same connected experience remotely. Students have suffered unevenly across the country and the world, because access to remote learning tools varies greatly. When it comes to Computer Science, we can and should do better. Many students get exposure to areas of CS through workshops like the ones run by ADSA on campus, which hundreds attend each year. Those workshops give younger students an opportunity to interact and work with their peers, follow along with the presentation, and see their code run before their own eyes.

Unfortunately, the tools on the market for collaborating on code and running workshops are either built for only a handful of users or cost exorbitant amounts of money. Only the wealthiest can afford to pay, which creates yet another barrier to learning for lower-income individuals and for organizations wishing to offer workshops without a budget. To make matters worse, most of these tools are restricted to on-premise servers behind private networks and organizational accounts, making them unavailable to students who don't attend universities that pay for them.

Inspired by our experiences working directly with professors, hearing their frustrations with what's available to them today, and our experiences running workshops through ADSA, we aimed to build something better and to make it completely Open Source and free for all. We want students anywhere in the world to be able to join workshops with hundreds or thousands of their peers and learn in real time. We want students to be able to follow along with the teacher during class and see for themselves how the code works. So we set out to make that a reality.

What it does

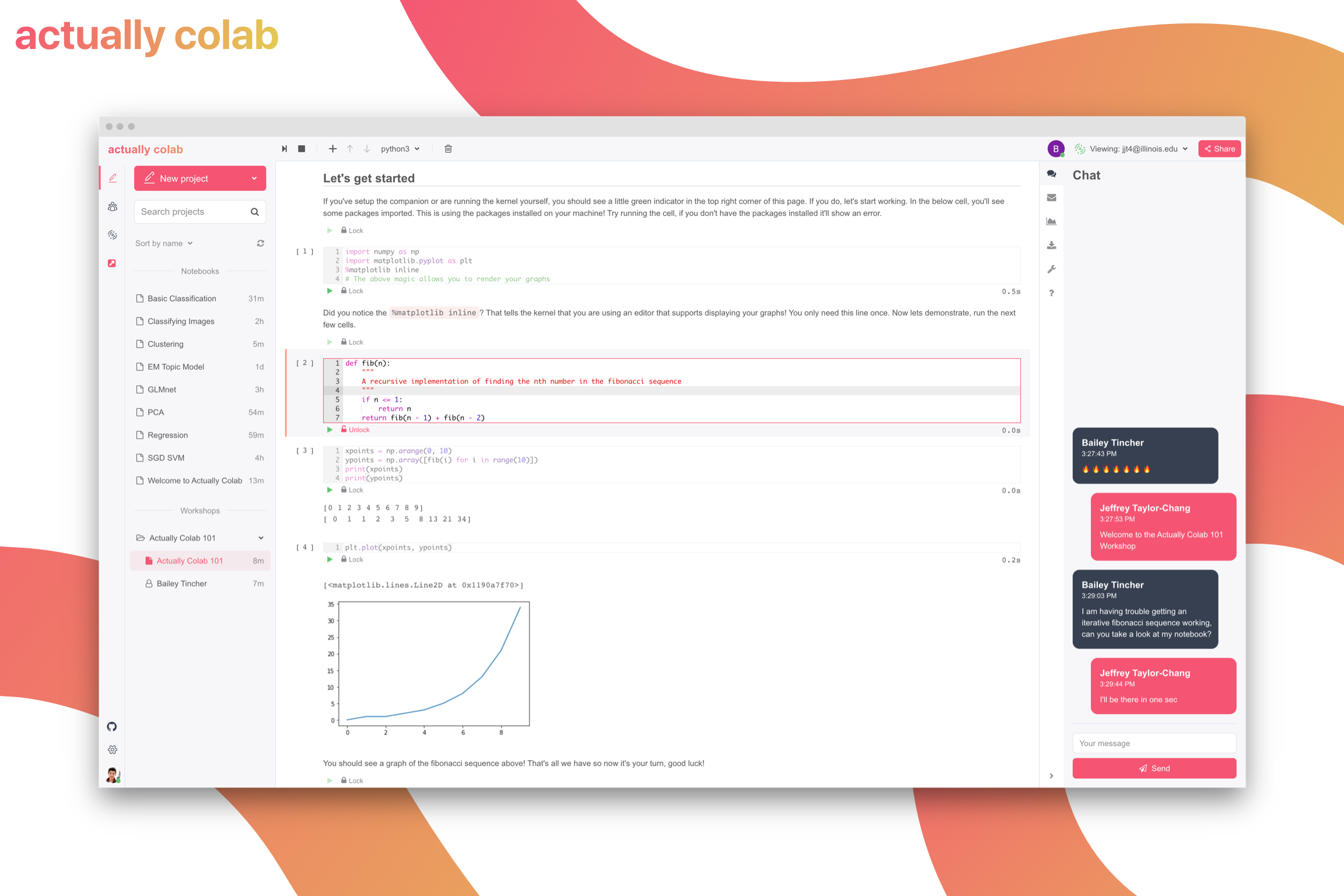

Actually Colab is a collaborative code editor based in the cloud. Our name is a playful jab at Google's Colab, which isn't actually collaborative (and suffers from the same centralization problems as products like CoCalc, but more on that later). It is a cloud notebook editor, built from the ground up, that lets users create or import the ipynb notebooks they would normally write in JupyterLab and have them saved in the cloud. Users can share these notebooks with their team and edit together at the same time (or separately), with features like showing collaborators' cursors and edits. When you share a notebook or workshop, we send an email notifying the recipient.

One of the most distinctive parts of our design is our distributed network of kernels, which allows each student in the same notebook to execute code independently. Users can switch their view to see the outputs of any other user on the notebook, so if you don't want to run the code yourself or you're following along with a team member, no problem.

We also built a feature called Workshops, which is targeted at classes and (as you might've figured) workshops. Instructors can create a primary notebook and configure the attendees (and even co-instructors). Once they release the workshop, the attendees are emailed that it is starting and can access it in a read-only capacity, so they can follow along with the presentation and see the code run for themselves. Have a question for the instructors? No problem, we have a built-in chat that lets you send messages back and forth.

We also understand that sometimes you really do need a notebook file on your machine, be it for grading purposes or for committing to version control, and we've thought of that too! Users can export their notebook back to an ipynb file, or as a Python or Markdown file, at a moment's notice.
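As a rough illustration of the export path, here is a minimal sketch that flattens a notebook into a Python script. It assumes the standard ipynb layout (a `cells` array whose entries have a `cell_type` and a `source` that is either a string or a list of strings). The names `cellSource` and `exportToPython` are hypothetical and are not taken from the Actually Colab codebase, and turning markdown cells into comments is just one reasonable choice.

```typescript
// Minimal subset of the ipynb (nbformat 4) structure needed for export.
interface NotebookCell {
  cell_type: "code" | "markdown" | "raw";
  // nbformat allows either a single string or a list of line strings.
  source: string | string[];
}

interface Notebook {
  cells: NotebookCell[];
}

// Join a cell's source into one string regardless of which form it uses.
function cellSource(cell: NotebookCell): string {
  return Array.isArray(cell.source) ? cell.source.join("") : cell.source;
}

// Hypothetical exporter: code cells are kept verbatim, markdown cells are
// turned into comments, and raw cells are skipped.
function exportToPython(notebook: Notebook): string {
  const chunks: string[] = [];
  for (const cell of notebook.cells) {
    const text = cellSource(cell);
    if (cell.cell_type === "code") {
      chunks.push(text);
    } else if (cell.cell_type === "markdown") {
      const commented = text
        .split("\n")
        .map((line) => (line.length > 0 ? `# ${line}` : "#"))
        .join("\n");
      chunks.push(commented);
    }
  }
  // Separate cells with a blank line and end the file with a newline.
  return chunks.join("\n\n") + "\n";
}

// Example notebook with one markdown cell and one code cell.
const exampleNotebook: Notebook = {
  cells: [
    { cell_type: "markdown", source: ["# Demo\n", "Add two numbers."] },
    { cell_type: "code", source: "x = 1 + 2\nprint(x)" },
  ],
};
const exportedScript = exportToPython(exampleNotebook);
```

A Markdown export could follow the same shape, keeping markdown cells verbatim and wrapping code cells in fenced blocks instead.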

How we built it

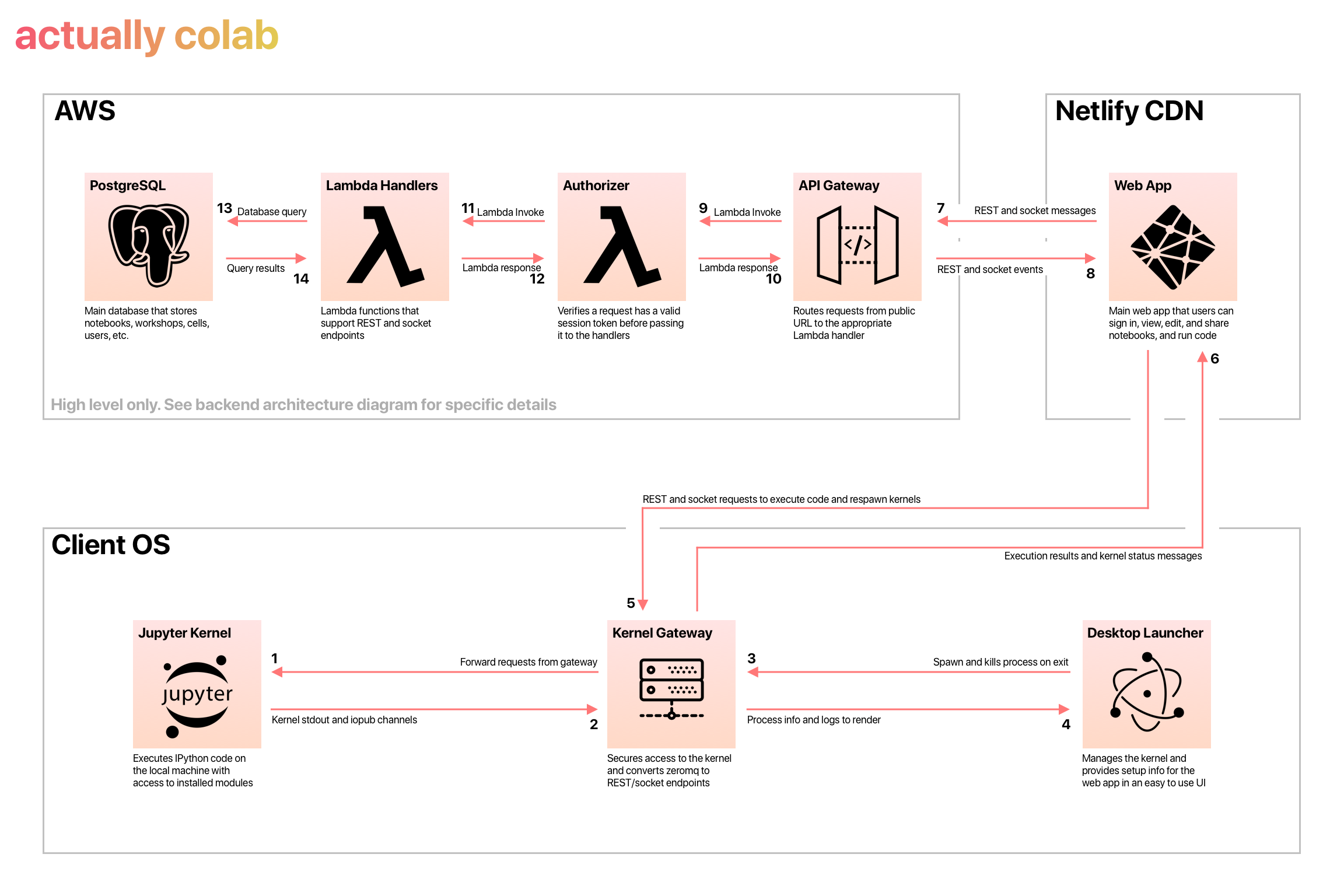

Our project consists of three primary components:

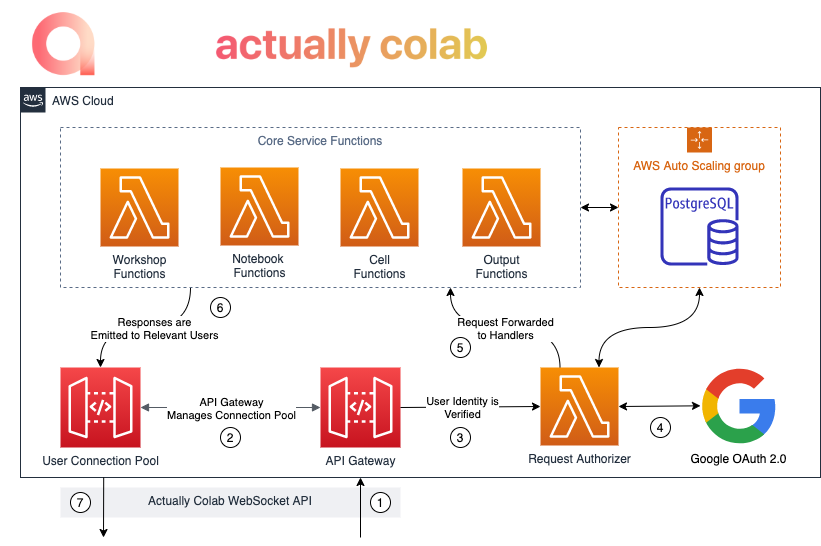

@actually-colab/editor: This monorepo houses our Serverless Lambda functions as well as our frontend-agnostic REST/socket client package, which abstracts away the networking details for the caller. This component was built by Bailey Tincher using Serverless.js to manage our CloudFormation, Shallot.js for the middleware (a publicly available NPM package by our very own Bailey), and LZUTF8 for compressing kernel outputs. It is written entirely in TypeScript.

@actually-colab/desktop: This is the frontend for our cloud editor. This component was built by Jeff Taylor-Chang in React and relies heavily on Redux for state management, with several custom middlewares that allow it to interact with our editor client as well as the Jupyter Kernel Gateway. It is also written in TypeScript.

@actually-colab/desktop-launcher: This is the Electron native app that manages the kernel process for the user. This component was built by Jeff in React with Redux as well. It takes advantage of hidden renderers to spawn a primary UI that shows diagnostic info and logs, as well as a dedicated process that manages the Kernel Gateway process. These processes communicate over IPC. The desktop-launcher was also built in TypeScript.

Challenges we ran into

The first question is why the existing tools are so expensive. According to professors we talked to, CoCalc costs the University roughly $12 per student each semester. That is an incredibly high cost when you want to run a class or a workshop that has hundreds or thousands of attendees. The main option on the market is so expensive because of its architecture. By hosting the notebook server on-premise and providing each student with gigs of RAM, CPU, and their own Kernel instance, they've created a model that simply cannot scale. Their server requirements for the kernel alone scale as O(n) with the number of attendees, which makes it impossible to provide the service cheaply.

So when designing our system, we asked whether it was possible to flip the script and create a distributed network of kernels where each student brings the compute resources they already have sitting in front of them, and we succeeded. This created a few problems to solve: 1) we had to learn how the Jupyter Kernel works at a low level so that we could control the process and execute code on the user's machine in a safe and controlled environment, 2) we had to figure out how to make the entire process as seamless as possible, 3) we had to build a custom cloud editor capable of interacting directly with the Kernel on the user's machine, and 4) we had to learn how to build real-time collaboration, something neither of us had any experience with, and do it in an extremely efficient way.

To deliver the best experience, we opted to build an Electron app that acts as a companion to our cloud editor. That native app has access to the user's machine, so it can install required software like the Kernel Gateway and manage the kernel process automatically, without requiring the user to execute complicated commands in the terminal. We were able to restrict the Kernel Gateway to interact only with our website and to clean itself up after being closed. An alpha version of the Native Companion (called desktop-launcher) is available for macOS, Windows, and Ubuntu (support on Windows and Ubuntu is still experimental because we both have Macs). The native app will automatically attempt to install the Kernel Gateway if it detects that it is not installed.

Building the cloud editor required a significant amount of time and effort, but the hardest part was interacting with the Kernel on the user's machine in a way that was seamless for the user. The Kernel Gateway spawns a REST and WebSocket server that converts requests into ZeroMQ messages to communicate with the Kernel instance. We designed the editor to automatically check for a running instance of the Kernel Gateway and establish a socket connection. From there we can manage the lifecycle of the kernel based on events that happen in our editor, for instance when a user opens a new notebook or runs a cell.
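To make the editor-to-kernel exchange more concrete, here is a minimal sketch of the message an editor could send over the Kernel Gateway's WebSocket to run a cell. It follows the general shape of a Jupyter `execute_request` message and the `/api/kernels/{id}/channels` endpoint. The helper names `channelsUrl` and `buildExecuteRequest`, the `actually-colab` username, the example origin, and the hard-coded IDs are assumptions made for illustration, not our actual client code.

```typescript
// Shape of a Jupyter message as sent over the Kernel Gateway WebSocket.
interface KernelMessage {
  header: {
    msg_id: string;
    username: string;
    session: string;
    msg_type: string;
    version: string;
  };
  parent_header: Record<string, unknown>;
  metadata: Record<string, unknown>;
  content: Record<string, unknown>;
  channel: "shell";
}

// Build the WebSocket URL for a kernel's channels from the gateway's HTTP origin.
// Replacing the leading "http" maps http -> ws and https -> wss.
function channelsUrl(gatewayOrigin: string, kernelId: string): string {
  const wsOrigin = gatewayOrigin.replace(/^http/, "ws");
  return `${wsOrigin}/api/kernels/${encodeURIComponent(kernelId)}/channels`;
}

// Hypothetical helper: wrap the code of one cell in an execute_request message.
function buildExecuteRequest(code: string, sessionId: string, msgId: string): KernelMessage {
  return {
    header: {
      msg_id: msgId,
      username: "actually-colab", // assumed value, any string is accepted
      session: sessionId,
      msg_type: "execute_request",
      version: "5.3",
    },
    parent_header: {},
    metadata: {},
    content: {
      code,
      silent: false,
      store_history: true,
      user_expressions: {},
      allow_stdin: false,
      stop_on_error: true,
    },
    channel: "shell",
  };
}

// Example: the payload an editor would serialize and send when a cell is run.
const kernelSocketUrl = channelsUrl("http://localhost:8888", "abc-123");
const runCellMessage = buildExecuteRequest("print(1 + 1)", "session-1", "msg-1");
const runCellPayload = JSON.stringify(runCellMessage);
```

In a browser, the payload would be sent with `new WebSocket(kernelSocketUrl)` followed by `socket.send(runCellPayload)`, and replies that carry the same `msg_id` in their `parent_header` can be matched back to the cell that was run.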

Finally, real-time collaboration was tricky. To autoscale with usage and deliver a lower-cost service than what's on the market, we designed a cloud architecture that revolves around AWS Lambda functions, a lot of them (almost 30, to be more precise). The vast majority of our server interactions occur over WebSockets. After you sign in with your Google account (we chose third-party auth to avoid account security issues), your information and profile picture are imported into our database. We keep track of the active running sessions and automatically broadcast events to the clients who are affected. So when you open a notebook, edit a cell, and so on, all the other users on the notebook are notified of your action so their editors can respond accordingly.

To avoid flooding the server with a request on each keystroke, we "debounce" the user's edits and send them in batches. The interval between these batches keeps the round trip under half a second, which preserves the real-time feel.

For the sake of time, we created a custom system we call cell-level locking, similar to mutex locks in parallel programming. When a user clicks on a cell, we automatically request exclusive access to that cell, which prevents other users from editing the same cell at once. Because Jupyter Notebooks decompose naturally into many cells, this gives us real-time collaboration without implementing an OT or CRDT algorithm (the common methods of applying changes to reach a consensus result). In the future, we would like to remove that constraint and allow anyone to edit the same cell at the same time, but for now it does the trick.
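The idea behind cell-level locking can be sketched in a few lines. This is a simplified, in-memory illustration rather than our actual implementation, which lives behind Lambda functions and a database. The class `CellLockTable` and its method names are hypothetical.

```typescript
// In-memory table mapping each cell ID to the user currently holding its lock.
class CellLockTable {
  private owners = new Map<string, string>();

  // Grant the lock if the cell is free or already held by the same user.
  tryLock(cellId: string, userId: string): boolean {
    const owner = this.owners.get(cellId);
    if (owner !== undefined && owner !== userId) {
      return false; // someone else is editing this cell
    }
    this.owners.set(cellId, userId);
    return true;
  }

  // Only the current owner may release a lock.
  unlock(cellId: string, userId: string): boolean {
    if (this.owners.get(cellId) !== userId) {
      return false;
    }
    this.owners.delete(cellId);
    return true;
  }

  // Edits are accepted only from the user who holds the lock.
  canEdit(cellId: string, userId: string): boolean {
    return this.owners.get(cellId) === userId;
  }

  // Release every lock a user holds, e.g. when their socket disconnects.
  // Returns the IDs of the cells that were released.
  releaseAll(userId: string): string[] {
    const released: string[] = [];
    this.owners.forEach((owner, cellId) => {
      if (owner === userId) {
        released.push(cellId);
      }
    });
    released.forEach((cellId) => this.owners.delete(cellId));
    return released;
  }
}

// Example: Alice clicks a cell first, so Bob's request for it is refused.
const demoLocks = new CellLockTable();
const aliceGotLock = demoLocks.tryLock("cell-1", "alice"); // true
const bobGotLock = demoLocks.tryLock("cell-1", "bob"); // false
```

Because each lock covers a single cell, two users can still edit different cells of the same notebook at the same time, which is what makes this a workable substitute for OT or CRDT in the short term.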

Accomplishments that we're proud of

Before HackIllinois we had the very basics of the editor working, but most of it was hardcoded and it didn't support collaborating yet (which was a bit of a crucial feature). We spent the weekend flying through our hardest issues, and now users can create and share notebooks with their peers, edit together, view each other's cell outputs, use the built-in chat, import existing notebooks, and use our biggest feature: dedicated workshops with instructors and attendees. We took our service from a single-user cloud editor to a truly collaborative experience. It was rewarding to open a notebook for the first time at around 2am Saturday, share it with each other, and see our project work.

What we learned

We had used Jupyter Notebooks before but neither of us really understood how the Kernel worked under the hood. It was a really interesting experience to learn how it worked and to control it programmatically. It gives us a newfound respect for the team at JupyterLab. While they might not do collaboration, they do kernels really well. We also learned that building collaboration tools requires a lot of moving pieces. We each wrote several thousand lines of code this weekend to make our project come to life, working late into the night and throughout the day. Hopefully, others who want to learn how collaborative software works can benefit from our experiences by reading our code and seeing what challenges we had to solve.

What's next for Actually Colab - Real-Time Collaborative Jupyter Editor

The journey is far from over. On the horizon, we have University classes and workshops planning to use our software, and there are still plenty of features left to build before it is truly production-ready. Our notebooks and workshops work, but we want them to be even better. Down the road, we envision a way for users to browse workshops that are open to the public and join them from anywhere, creating a new kind of developer community built around sharing knowledge and learning directly from peers. We will let workshop instructors schedule a time for a workshop to open automatically instead of opening it manually. We want to add comments so that instructors can read a student's code and leave notes on areas that need work, and we want to add tools that allow professors to grade their students' code automatically.

There is also a whole host of usability improvements to make, like built-in auto-scrolling and virtualized notebooks for even better performance. And we want to allow users to configure their editor to use a remote kernel. This would allow power users who need hardware their local machine can't offer (for instance, more RAM, faster CPUs, or graphics cards) to plug and play with VMs they can spawn on platforms like AWS. These are just a handful of the open issues and tasks that we plan to work on in the coming months as we take what we built for HackIllinois and put it in the hands of real people, pushing the envelope on remote learning.

Built With

- amazon-web-services

- aurora

- electron

- immutable

- jupyter

- kernel

- lambda

- postgresql

- react

- serverless

- typescript

- websockets
