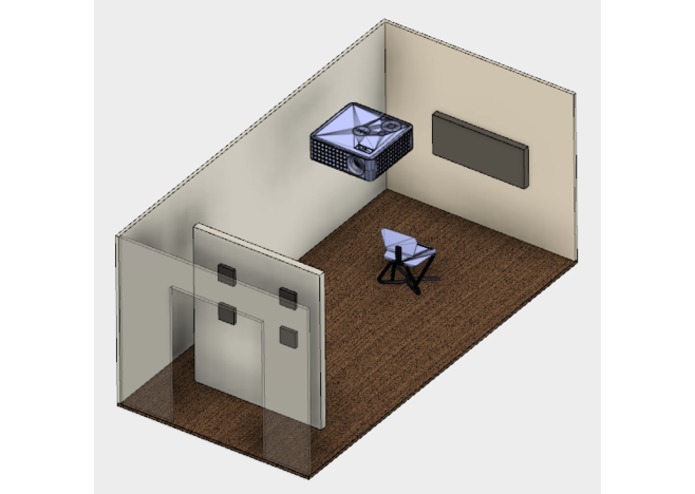

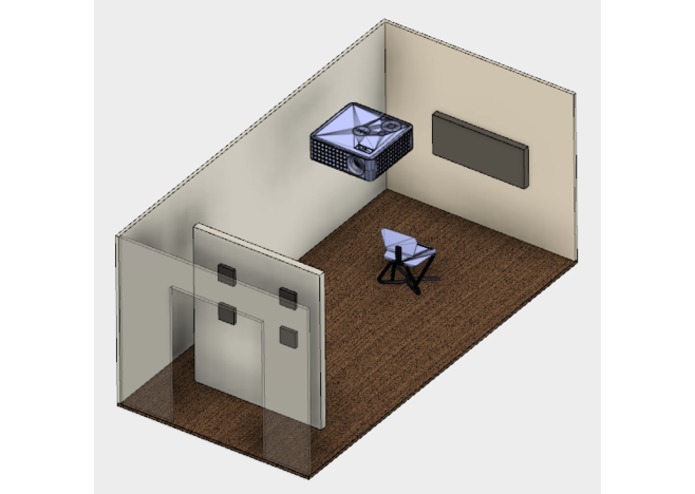

3D Model of the exhibit space made with Fusion 360

-

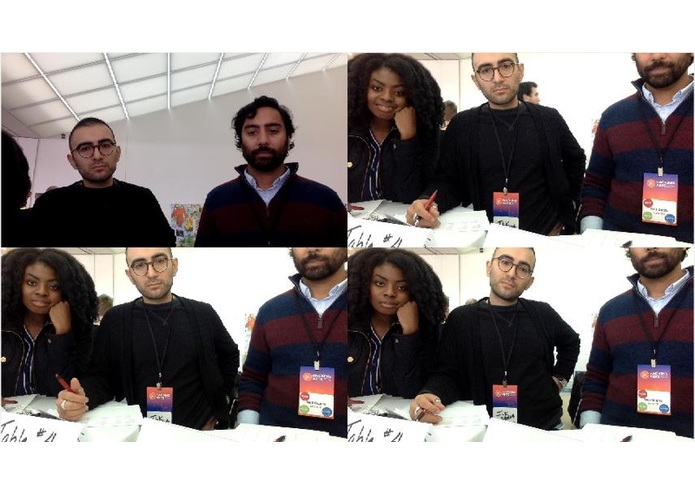

Sample image of FaceSwap technology

-

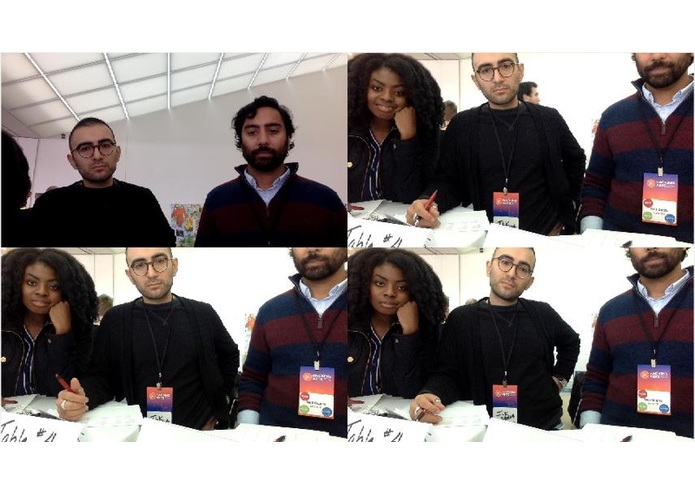

Series of images that capture participant's changing emotions during the video

-

Awesome About Face team members! :)

Inspiration

Now more than ever, we live in a country divided. We can’t understand our neighbors, and we cling to those who reflect our views. We’ve forgotten to allow ourselves to understand the feelings of others, and in forgetting how to empathize we are hastening the path to the hate we all fear. Our project sets out to foster empathy, in place of our default habit of looking at controversial topics only from our own point of view. We want to help others in this time of isolation learn that we all have experiences that shape our stories, and that the stories of others can shape us. We were able to take on this problem because Morris had previously created a face swap technology for commercial use. He had a vision that this technology could be art and could make people feel. With this idea at the center of our work, we were able to reach toward a place of greater understanding.

What it does

The demo of our product is a video in which the actor's face has been automatically swapped into a predetermined story. We ask the actor to let us take a series of photos, which are then placed into a progressive storyline that shows them a different perspective. Lastly, we take pictures of the actor as they watch the video, and at the end, the pictures are reflected back onto the actor, confronting them with a literal “in your face” representation of how they felt. A crucial element of this piece is that the narrative isn’t the point. We could easily have put the actor's face on someone facing domestic violence, someone facing deportation, or someone in a war zone. We chose to address the domestic epidemic of homelessness because we wanted a clear-cut story that everyone here today can relate to. We all see homeless people in our daily lives, and many of us don’t even think twice. We hope our piece will ask you to think again, and to see these people as people and not as inconveniences, sob stories, or something to be avoided.

How we built it

The program is based on OpenCV, an open source computer vision library. We built it on macOS using C++, Python, MATLAB for image presentation, and shell scripts that run different scripts depending on the inputs. The project continues a previous successful implementation of the FaceSwap algorithm with 5Wits adventure, an escape-the-room-style challenge where participants unknowingly had their pictures taken and then swapped with the murderer in the crime. This project builds on that repository by adding support for face mood input, a guided process for obtaining images, and the automated assembly of a group of images into a GIF or movie that tells a story.
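
The mood input and the automated assembly described above can be illustrated with a minimal sketch. It is not the project's actual code: the `StoryFrame` structure, the `plan_swaps` function, the mood labels, and the file paths are all hypothetical, and the face swap itself is left out. The sketch only shows one plausible way to pair each story image with the participant photo of the matching mood, falling back to a neutral photo when a mood was not captured.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class StoryFrame:
    """One image in the predetermined storyline (hypothetical structure)."""
    path: str  # story image that contains the face to be replaced
    mood: str  # mood the swapped-in face should show in this frame


def plan_swaps(story: List[StoryFrame],
               captures: Dict[str, str],
               fallback_mood: str = "neutral") -> List[Tuple[str, str]]:
    """Pair each story frame with the participant photo of the matching mood.

    `captures` maps a mood label to the participant photo taken for it.
    Frames whose mood was not captured use the `fallback_mood` photo.
    """
    if fallback_mood not in captures:
        raise ValueError(f"missing fallback capture: {fallback_mood!r}")
    plan = []
    for frame in story:
        face = captures.get(frame.mood, captures[fallback_mood])
        plan.append((frame.path, face))
    return plan


# Assumed example data: three story frames, two captured moods.
story = [
    StoryFrame("story/01_morning.png", "neutral"),
    StoryFrame("story/02_street.png", "sad"),
    StoryFrame("story/03_shelter.png", "hopeful"),
]
captures = {"neutral": "faces/neutral.png", "sad": "faces/sad.png"}

for frame_path, face_path in plan_swaps(story, captures):
    # Each pair would be handed to the face swap step (not shown here),
    # and the swapped frames would then be joined into a GIF or movie.
    print(frame_path, "<-", face_path)
```

Keeping the plan as an ordered list of pairs means the order of the story is fixed before any image processing starts, so a missing mood changes only which face is used and never the sequence of frames.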

The images chosen for this particular story were based on group discussions of which topics (frats, drugs, global warming, mental health) would elicit different reactions, even within a homogeneous group like MIT.

The CAD model was built using Autodesk Fusion 360. With the help of the Autodesk members, we were able to design an exhibit space (similar to the one we will have in February thanks to MIT’s grant) to better convey the impact of our project. Models were taken from GrabCAD, McMaster-Carr, and other open source 3D model repositories.

Finally, the image capture (not the face detection) was done in MATLAB.

Challenges we ran into

We spent a considerable amount of time choosing our storyline, since the exhibition will be hosted in February at MIT and we wanted a topic that would evoke strong emotions from audience members on both ends of the spectrum. We ultimately chose “homelessness” because we felt that it was a topic we could all relate to, as everyone has come into contact with someone who was homeless at some point.

The lesson we learned is that creating our own content can sometimes be easier than researching online. To create our storyline, we needed various images to illustrate the narrative. Our initial approach was to search for images using specific keywords relevant to our story. However, this process took an enormously long time because most of the images felt too “staged” and couldn’t provide the realistic, relatable experience we wanted. In fact, we ended up taking one of the pictures ourselves to save time.

Additionally, deciding on the format of the exhibition (individual experience or group experience) was equally challenging because our opinions were evenly divided. We decided to get a third-party opinion from the mentors and reached a consensus based on popular vote.

Accomplishments that we're proud of

We were able to produce a strong pilot of an exhibit in just under 24 hours. The process required a lot of work defining the story, our end goals, and what empathy means to different people. We were also able to get over certain hurdles as a team, including not having a single person trained as a software engineer, learning that a visual model would be beneficial one hour before the deadline, and fighting back the urge to sleep.

What we learned

We learned from small focus groups and our own hackathon brainstorm that the ideal conditions for empathy vary widely from person to person. We learned that empathy is not just a function of how relatable the character is, but also of how long you allow someone to “get to know” the character. The biggest lesson is that we should generate our own content for better stories. We searched the web for appropriate images, and unfortunately, when we couldn’t find what we envisioned, we let the images we did find determine the next step of the story. Not only that, but the face swap algorithm does not handle obstructed faces very well. If instead we are the photographers taking pictures of scenes to tell a story, we have total control of the narrative as well as more control over how well the FaceSwap app will function.

What's next for About Face

What you see before you today is only the beginning. We expect the piece to premiere in the MIT Student Center in February, where responses to the piece will be recorded. The recordings will be placed online, along with a virtual version of the piece, giving the whole world access to this conversation on empathy.

We view this About Face exhibit at MIT in February 2017 as a pilot to gather data on people’s genuine reactions to various stories and how those reactions differ. We will use this pilot data to create more emotionally impactful stories. About Face can be used to personalize any story and capture genuine emotional reactions to that story:

- As an edtech tool to personalize and teach history to young students: for example, teaching the US Civil War to high school students using their own faces, so that they relate to and empathize with historic stories, which could increase engagement, retention, and learning.

- Once you start personalizing history lessons, why not personalize interactive stories for children, or comic books?

- Use About Face to imagine yourself in someone else’s Snapchat or Instagram stories.
