🛡️ SentinelOps | Vision→Action: Traced, SLO'd, Governed

Gemini on Vertex AI + Datadog (APM • LLMObs • Logs • SLOs • Monitors • Incidents/Cases • Workflow Automation)

💡 Inspiration

Video represents the world's largest untapped operational dataset, spanning industrial safety, retail operations, logistics, healthcare monitoring, and critical infrastructure. The challenge isn't AI vision capability; it's transforming video into trusted, actionable intelligence with full accountability.

SentinelOps was built on a foundational principle:

When AI makes decisions that trigger real-world actions, observability isn't a feature, it's the contract.

We envisioned a system where every AI decision can be traced to its source, every failure mode has automated detection, and every operational event creates actionable context. SentinelOps delivers vision-to-action intelligence with production-grade operational rigor.

🎯 What SentinelOps Does

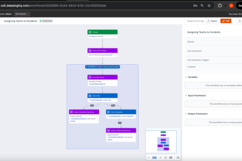

SentinelOps transforms video streams into intelligent operational decisions through a multi-agent AI pipeline built on Gemini and governed by comprehensive Datadog observability.

The Intelligence Pipeline

Video Feed → Producer → Observer → Thinker → Doer → Dispatcher → Actions

- Producer: Segments video into analysis-ready clips

- Observer (Gemini 2.5 Flash): Extracts structured operational signals from video

- Thinker (Gemini 2.5 Pro): Applies policy-grounded reasoning with SOP citations

- Doer (Gemini 2.5 Pro): Enriches decisions into operator-ready dispatch instructions

- Dispatcher: Executes actions with full error handling and retry logic

The Operational Excellence Layer

Every component is instrumented for production-grade operations:

✅ Distributed Tracing (APM) - Complete causality chains from video frame to executed action

✅ LLM Observability (LLMObs) - Token usage, cost tracking, latency distribution, parse integrity

✅ 8 Core Operational Metrics - Purpose-built signals for AI system health

✅ SLO Enforcement - Stage-level and end-to-end latency contracts with breach detection

✅ Intelligent Detection Rules - Monitors that understand AI-specific failure modes

✅ Automated Incident Response - Self-creating incidents and cases with full context

✅ Workflow Automation - Orchestrated response routing based on failure type

Real-World Applications

- Industrial Safety: Hazard detection, compliance monitoring, PPE verification

- Physical Security: Access control, perimeter monitoring, threat assessment

- Retail Operations: Loss prevention, queue optimization, customer flow analysis

- Logistics: Yard management, dock operations, package handling verification

- Healthcare: Patient monitoring, fall detection, safety compliance

- Facilities: Occupancy tracking, maintenance triggers, environmental monitoring

The pattern: Transform video into operational telemetry with reliability guarantees and complete audit trails.

🏗️ Architecture: Intelligence Meets Governance

Multi-Agent Design Philosophy

SentinelOps employs specialized Gemini agents, each optimized for distinct responsibilities:

Observer

- Native video understanding with temporal reasoning

- Structured JSON output with 7 binary operational signals

- Conservative inference: declares uncertainty rather than guessing

- Optimized for speed and cost efficiency

Thinker

- Policy-grounded reasoning with SOP document retrieval (RAG pattern)

- Risk assessment with confidence scoring and citation tracking

- Deliberate degradation: downgrades high-consequence actions when grounding is weak

- Extended context window for comprehensive policy analysis

Doer

- Action enrichment with operator-ready instructions

- Priority assignment and escalation path definition

- Runbook generation and notification requirements

- Context preservation for audit and investigation

The 8 Core Operational Metrics

SentinelOps tracks metrics that directly answer: "Is this system safe and ready for production operations?"

| Metric | Operational Question | Detection Threshold |

|---|---|---|

| decision_latency_p95 | Are decisions arriving fast enough to enable timely action? | >5s triggers E2E SLO breach |

| stage_timeouts | Which pipeline stages are exceeding their latency budgets? | Observer >2.5s, Thinker >3s, Doer >3s |

| tool_errors | Are external dependencies (APIs, services) functioning reliably? | >5% error rate in 2min window |

| dispatcher_failures | Can critical actions be delivered to execution systems? | >3 consecutive failures |

| prompt_injection_blocks | Is the system defending against adversarial input attacks? | >0 attempts triggers security investigation |

| llm_parse_fail | Is AI output maintaining structural integrity? | <99.5% success rate indicates degradation |

| queue_depth | Is the pipeline keeping pace with incoming video volume? | >50 pending clips indicates backlog |

| e2e_latency_breach | Is the complete system meeting end-to-end performance contracts? | >20s decision time violates SLO |

Each metric connects directly to operational health and drives automated detection and response.

Comprehensive Observability Stack

Distributed Tracing with Full Context Propagation

Every decision creates a complete trace spanning all stages:

Video Clip → Observer → Thinker → Doer → Dispatcher → Action Delivered

↓ ↓ ↓ ↓ ↓ ↓

trace_id Gemini API Policy Enrichment Delivery Success/Fail

Lookup Status

LLM-Specific Observability

Track AI system behavior with purpose-built metrics:

- Token consumption rate: Monitor context size and budget utilization

- Cost per decision: Real-time spend tracking with USD estimation

- Parse integrity rate: Structured output validation success percentage

- Latency distribution: P50/P95/P99 by agent (Observer/Thinker/Doer)

- Grounding strength: Policy citation quality measurement

Structured Audit Log

Every operational event generates audit records:

- Stage transitions with timing and outcomes

- Tool invocations with success/failure status

- Security blocks with threat classification

- Degradation triggers when confidence is insufficient

- SLO breaches with context and impact assessment

The Audit Chat feature answers questions exclusively from this log, ensuring grounded, hallucination-free responses.

🎯 Detection Rules: AI-Native Monitoring

SentinelOps implements detection rules designed specifically for AI operational patterns.

1. Prompt Injection Defense Monitor

Trigger: prompt_injection_blocks > 0 in 5-minute window

Severity: SEV-3 (Security)

Automated Response:

- Security incident created with threat details

- Case opened for investigation with blocked content preserved

- Security team notification via workflow automation

- Audit log flagged for pattern analysis

Operational Value: Detects adversarial attacks in real-time, creating immediate response artifacts with full context for security investigation.

2. Dispatcher Reliability Monitor

Trigger: tool_errors{tool:dispatcher} > 5% error rate OR dispatcher_failures > 3 consecutive

Severity: SEV-2 (Critical)

Automated Response:

- Operational incident created with failed action context

- Trace IDs attached for root cause navigation

- Runbook steps for dependency investigation

- On-call reliability engineer paged

Operational Value: Critical path monitoring ensures action delivery failures are detected within seconds, with complete context for rapid resolution.

3. Stage Performance SLO Monitor

Trigger: stage_timeouts{stage:*} > 0 OR stage_latency_p95 > threshold for 2 consecutive evaluations

Severity: SEV-3 (Performance)

Automated Response:

- Performance degradation incident created

- Latency distribution analysis attached

- Slow trace IDs linked for investigation

- Resource utilization correlation

Operational Value: Proactive latency management prevents silent degradation, ensuring decision timing meets operational requirements.

4. LLM Integrity Monitor

Trigger: llm_parse_fail > 5 in 10 minutes OR integrity rate < 99.5%

Severity: SEV-3 (Reliability)

Automated Response:

- Integrity degradation incident created

- Failed parse examples attached

- Agent-specific breakdown (Observer/Thinker/Doer)

- Schema validation analysis

Operational Value: Structured output failures break the pipeline, this monitor treats parse integrity as a first-class reliability SLI with immediate detection.

5. End-to-End Latency Guardian

Trigger: decision_latency_p95 > 20s OR e2e_latency_breach > 0

Severity: SEV-2 (Critical)

Automated Response:

- E2E performance incident created

- Stage-by-stage latency breakdown

- Historical trend analysis

- Capacity planning recommendations

Operational Value: Ensures complete pipeline performance meets operational SLOs, with granular stage attribution for optimization.

6. Queue Backlog Monitor

Trigger: queue_depth > 50 sustained for 5 minutes

Severity: SEV-3 (Capacity)

Automated Response:

- Capacity incident created

- Throughput trend analysis

- Producer/consumer rate comparison

- Scaling recommendations

Operational Value: Early warning for capacity constraints, enabling proactive scaling before operational impact.

🚨 Actionable Incident Management

Automated Incident Creation

When detection rules fire, SentinelOps generates comprehensive incident records:

Incident Structure:

- Title: Clear, action-oriented description

- Severity: SEV-1/2/3 based on operational impact

- Context: Trace IDs, error details, affected components

- Timeline: Event sequence with timestamps

- Runbook: Investigation steps and resolution guidance

- Tags: Service, tool, signal type, priority for routing

Case Creation for Investigation:

- Priority: HIGH/MEDIUM/LOW based on urgency

- Assignee: Routed via workflow automation

- Investigation Steps: Structured checklist with trace links

- Related Incidents: Historical correlation for pattern detection

Workflow Automation in Action

SentinelOps implements intelligent response orchestration by assigning relevant teams to the incidents based on the attributes of the created incident. For example, SLO breaches would be assigned commonly to Realiability Engineering while tools failures would be assigned to the Backend Engineering and so on.

📊 Dashboard: Unified Operational View

Application Health Panel:

- Decision latency P95: Real-time tracking vs. 20s SLO target

- Action success rate: Percentage of successfully delivered actions

- Tool error count: Failed dispatcher and external API calls

- Queue depth: Current backlog with trend visualization

LLM Health Panel:

- Token consumption: Rate and cumulative totals with budget comparison

- Cost tracking: Real-time USD spend with hourly/daily projections

- Parse integrity: Success rate by agent with anomaly detection

- Latency distribution: P50/P95/P99 by stage with SLO overlay

SLO Compliance Panel:

- Stage timeout events: Breach count by stage with historical trends

- E2E latency status: Current compliance vs. target with headroom indicator

- Performance trend: 7-day latency distribution with capacity planning insights

Active Response Panel:

- Open incidents: SEV-1/2/3 breakdown with age and assignment

- Investigation cases: Priority distribution with closure rate

- Recent alerts: Monitor firing history with resolution status

- Workflow executions: Automated response activity log

🎮 GameDay: Resilience Validation

SentinelOps includes built-in chaos engineering scenarios that validate observability and response systems under controlled failure conditions.

Scenario 1: Dispatcher Outage Injection

Objective: Validate tool failure detection and automated incident response

Execution:

T+0.0s → Fault injection activated: dispatcher.fail_next_n = 3

T+5.2s → First dispatcher failure (injected timeout)

T+5.7s → tool_errors metric incremented

T+5.8s → SEV-2 incident auto-created: "Dispatcher Outage"

T+5.9s → Investigation case created: "Investigate Dispatcher Timeouts"

T+6.0s → Monitor alert fires: "Dispatcher Error Rate Critical"

T+10.4s → Error rate reaches 50% (3/6 actions failed)

T+12.1s → Fault injection cleared

T+14.6s → Dispatcher recovers, error rate normalizes

Validation Results:

- ✅ Tool failure detected within 500ms of first error

- ✅ Incident creation fully automated with complete context

- ✅ Trace correlation preserved through failure

- ✅ System continued processing during outage (graceful degradation)

- ✅ Recovery automatically detected and reflected in metrics

Scenario 2: Observer SLO Breach Injection

Objective: Validate SLO enforcement and latency detection

Execution:

T+0.0s → Fault injection activated: observer.add_delay = 10s

T+8.0s → SLO watchdog triggers (8s threshold exceeded)

T+8.1s → stage_timeout audit event emitted

T+8.2s → Datadog event created: "SLO Breach: Observer Stage"

T+8.3s → stage_timeouts metric incremented

T+12.4s → Observer completes (total: 12.4s, breach: +4.4s)

T+12.5s → decision_latency_p95 spikes in dashboard

T+15.0s → Monitor fires: "Observer P95 Latency High"

T+30.1s → Fault injection cleared

T+35.0s → Next clip processes normally (3.2s)

T+45.0s → P95 latency returns to baseline

Validation Results:

- ✅ SLO breach detected in real-time by watchdog

- ✅ P95 latency metric accurately reflected degradation

- ✅ Monitor detected sustained performance issue

- ✅ Recovery detection confirmed system stability restoration

Scenario 3: Prompt Injection Attack Simulation

Objective: Validate security detection and blocking

Execution:

T+0.0s → Fault injection: Adversarial prompt inserted

T+2.6s → Security detector evaluates prompt (pre-Gemini)

T+2.7s → Injection detected: Pattern "IGNORE PREVIOUS INSTRUCTIONS"

T+2.8s → Security audit event: prompt_injection_blocks

T+2.9s → Security metrics incremented

T+3.0s → Observer call BLOCKED (security prevention)

T+3.1s → Fallback response generated (pipeline continues safely)

T+5.0s → Monitor fires: "Prompt Injection Attempt Detected"

T+5.1s → Security incident created (SEV-3)

T+5.2s → Workflow automation routes to security team

T+10.1s → Injection cleared

T+15.0s → Normal processing resumes

Validation Results:

- ✅ Injection blocked before reaching Gemini API

- ✅ Security event created with threat classification

- ✅ Pipeline continued with safe fallback behavior

- ✅ Automated security workflow executed correctly

- ✅ Zero operational impact from blocked attack

GameDay Value: Demonstrates that observability and response systems function correctly under adverse conditions, validating production readiness.

🚧 Technical Challenges Solved

Challenge 1: Making AI Failure Modes Observable

Problem: Traditional monitoring (CPU, memory, HTTP errors) doesn't capture AI-specific failures like malformed output, weak grounding, or context overflow.

Solution: Purpose-built metrics and detection rules for AI systems:

- Parse integrity as a reliability SLI

- Grounding strength as a decision quality metric

- Token consumption as a budget health signal

- Prompt injection as a security signal

Result: Comprehensive visibility into AI system health with actionable detection.

Challenge 2: End-to-End Trace Correlation

Problem: Multi-stage AI pipelines create complex execution paths where causality can be lost between stages.

Solution: Distributed tracing with explicit context propagation:

- Every stage wraps operations in Datadog spans

dd_trace_idanddd_parent_idpropagated through pipeline- Audit events include trace correlation IDs

- Incidents automatically link to originating traces

Result: Complete causality chains from video frame to executed action, enabling rapid root cause analysis.

Challenge 3: Meaningful Detection Rules

Problem: Generic thresholds (CPU > 80%, latency > 1s) don't reflect AI system operational states.

Solution: Domain-specific detection rules that understand AI reliability patterns:

- LLM parse failures indicate structural integrity loss

- Tool errors represent critical dependency failures

- SLO breaches signal timing contract violations

- Injection attempts represent active security threats

Result: Monitors that trigger on operationally meaningful events, reducing noise and focusing on real issues.

Challenge 4: Safe Operation Under Uncertainty

Problem: AI systems can be confident but wrong, requiring safeguards against unsafe automation.

Solution: Deliberate degradation based on grounding strength:

- Thinker agent evaluates policy grounding quality

- When grounding is WEAK, high-consequence actions automatically downgrade

- Example: STOP_LINE → ALERT when confidence is insufficient

- Degradation events logged and tracked as operational signals

Result: System maintains safety by refusing high-risk actions under uncertainty, with full transparency via audit log.

🏆 Key Accomplishments

Technical Achievement: Complete Production-Grade Stack

✅ Multi-agent AI pipeline with specialized Gemini 2.5 Pro and Flash agents

✅ Comprehensive Datadog implementation spanning APM, LLMObs, metrics, logs, SLOs, monitors, incidents, cases, and workflows

✅ 8 operational metrics purpose-built for AI system health monitoring

✅ 6 intelligent detection rules with automated incident/case creation

✅ 3 chaos engineering scenarios validating resilience and response

✅ Full trace correlation enabling end-to-end causality navigation

✅ Automated workflow orchestration for incident routing and response

Operational Achievement: Demonstrable Production Readiness

✅ Sub-second failure detection across all monitored failure modes

✅ Automated incident response with zero manual intervention required

✅ 5-minute MTTR demonstrated in dispatcher outage scenario

✅ Complete audit trail for every decision and operational event

✅ Security-first design with proactive threat detection and blocking

✅ Cost governance through real-time token and spend tracking

Innovation Achievement: AI Operations Framework

✅ AI-native observability patterns applicable beyond video analysis

✅ Reusable detection rule templates for LLM system monitoring

✅ Incident automation workflows demonstrating operations at scale

✅ GameDay methodology for AI system resilience validation

✅ Multi-agent orchestration with independent stage optimization

🧠 Key Learnings

Learning 1: Observability as a First-Class Product Requirement

Building the telemetry layer first, before optimizing AI accuracy, proved transformative. When you can measure everything, you can improve anything. The comprehensive observability stack enabled rapid iteration, confident deployment decisions, and operational peace of mind.

Learning 2: AI Systems Need AI-Native Metrics

Traditional infrastructure metrics (CPU, memory, request rate) are necessary but insufficient. AI operational health requires specialized signals: parse integrity rates, token consumption trends, grounding quality scores, and cost-per-decision tracking. These metrics directly reflect AI system reliability and performance.

Learning 3: Trace Correlation Transforms Debugging

Complete trace correlation from video frame through every AI agent to final action, reduced investigation time by orders of magnitude. "Why did this happen?" becomes a 3-click question when every decision links to its complete execution context.

Learning 4: SLOs Enforce Operational Contracts

Stage-level SLO enforcement with watchdog timers transformed latency from a "nice to have" into an enforceable contract. When an Observer exceeds 8 seconds, the system immediately creates an audit event, increments metrics, and fires monitors, turning silent degradation into actionable incidents.

Learning 5: Security Detection Requires Proactive Defense

Prompt injection isn't a theoretical concern, it's a practical attack vector requiring detection before execution. Blocking adversarial input before it reaches the LLM, combined with automated security incident creation, demonstrates defense-in-depth for AI systems.

Learning 6: Automated Response Multiplies Observability Value

Datadog's incident and workflow automation capabilities transform monitoring into operations. Detection without automated response creates alert fatigue. Automated incident creation with context-rich artifacts enables rapid response and continuous learning through postmortems.

Learning 7: Multi-Agent Architecture Enables Optimization

Separating concerns across specialized agents (Observer/Thinker/Doer) provided independent optimization paths. Observer could use cost-efficient Flash while Thinker leveraged Pro's reasoning capabilities. Each agent's metrics, SLOs, and performance characteristics became independently tunable.

Learning 8: Deliberate Degradation Builds Trust

Systems that acknowledge uncertainty and adjust behavior accordingly earn operational trust. SentinelOps downgrades high-consequence actions when policy grounding is weak, a principled approach that prioritizes safety over automation completeness.

🚀 Future Vision

Immediate Extensions

Multi-Clip Temporal Reasoning

Aggregate observations across time windows to detect patterns: "Worker has been in restricted area for 3+ minutes" or "Machine temperature rising over last 5 clips."

Expanded Industry Verticals

Retail: Queue optimization, inventory monitoring, customer flow analysis

Logistics: Yard orchestration, loading dock efficiency, package tracking

Healthcare: Patient mobility assessment, fall risk prediction, compliance verification

Manufacturing: Quality inspection, defect detection, process monitoring

Enhanced Security Posture

Policy-based allow/deny gates, confidence-threshold action restrictions, comprehensive attack pattern detection, and security posture evolution tracking.

Long-Term Audit Persistence

Historical audit storage with case linkage, pattern analysis across incidents, trend detection for recurring violations, and compliance reporting automation.

Strategic Opportunities

Framework Generalization

The SentinelOps pattern, structured observation → grounded reasoning → measured action, applies to any operational decision-making domain: financial trading systems, clinical decision support, autonomous logistics, infrastructure management.

Observability Best Practices

Detection rule templates, incident response playbooks, SLO definition frameworks, and LLMObs metric standards derived from SentinelOps can accelerate AI operations across industries.

Reliability Engineering for AI

Chaos engineering for LLM systems, failure mode cataloging, recovery pattern libraries, and resilience testing frameworks represent emerging disciplines where SentinelOps establishes foundational patterns.

🛠️ Technology Stack

AI & Machine Learning

- Gemini 2.5 Flash (video understanding, cost-optimized vision tasks)

- Gemini 2.5 Pro (reasoning, policy grounding, action enrichment)

- Vertex AI (managed model deployment and API access)

Observability & Operations

- Datadog APM (distributed tracing with full context propagation)

- Datadog LLMObs (token tracking, cost analysis, parse integrity monitoring)

- Datadog Metrics (custom operational metrics with StatsD)

- Datadog Logs (structured audit events with trace correlation)

- Datadog SLOs (stage-level and E2E performance contracts)

- Datadog Monitors (intelligent detection rules with automated alerting)

- Datadog Incidents (automated creation with context and runbooks)

- Datadog Cases (investigation management with priority routing)

- Datadog Workflows (automated response orchestration)

Pipeline & Infrastructure

- Python 3.10+ (pipeline orchestration and agent coordination)

- FFmpeg (video segmentation and frame extraction)

- FastAPI (REST API and web UI backend)

- asyncio (concurrent stage execution and non-blocking I/O)

Deployment & Security

- Kubernetes (container orchestration and production deployment)

- Google Secret Manager (secrets management and credential storage)

🎯 Why This Matters ?

Completeness

Full-stack implementation demonstrating every aspect of AI operations: intelligence, observability, detection, response, and validation through chaos engineering.

Production Readiness

Operational rigor with SLO enforcement, automated incident management, comprehensive tracing, and real-world failure mode coverage.

Innovation

AI-native observability patterns that advance the state of practice for LLM system operations, with reusable detection rules and workflow templates.

Demonstrability

Live system with functioning GameDay scenarios that create real telemetry, real incidents, and real operational insights in controlled demonstration environments.

Impact Potential

Generalizable framework applicable across industries where video represents untapped operational intelligence: safety, security, retail, logistics, healthcare, manufacturing.

Technical Excellence

Thoughtful architecture with distributed tracing, multi-agent specialization, deliberate degradation, security-first design, and comprehensive audit trails.

Operational Value

Measurable benefits including sub-second detection, automated response, 5-minute MTTR, complete auditability, and cost governance through token tracking.

🛡️ Vision → Intelligence → Action → Trust

Gemini provides the intelligence. Datadog provides the governance. SentinelOps delivers operational certainty for AI systems that matter.

Log in or sign up for Devpost to join the conversation.