
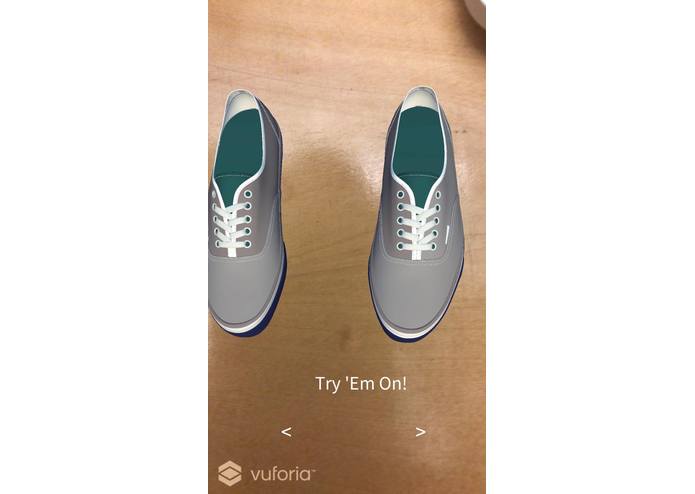

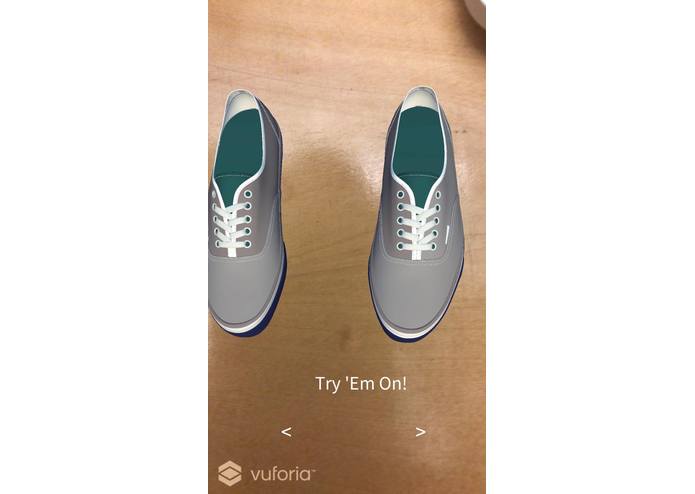

Prototype: with cylindrical tracking, it appears as though the user is actually wearing the shoes.


The application offers a variety of colors and shoe styles to try on!


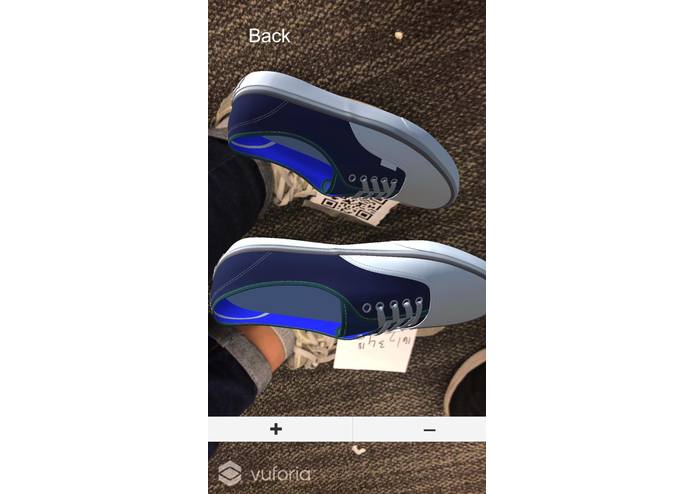

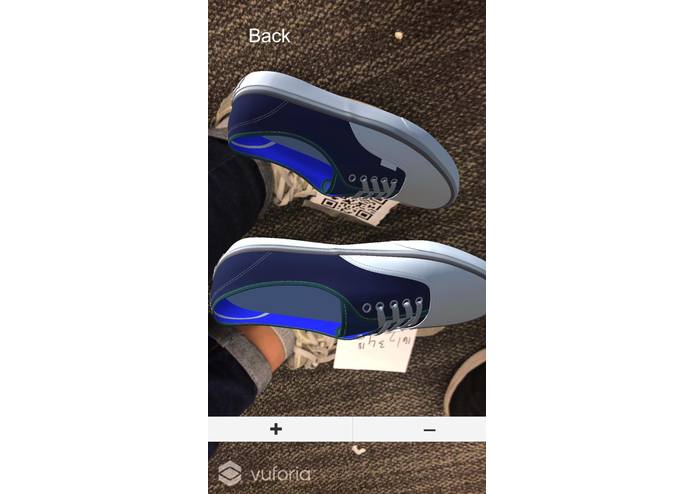

Another shoe style model...


Example of how flat image tracking prevents the user from truly "wearing" the shoes. Cylindrical tracking looked more realistic.


The model is very responsive and can register side views of the feet, so it looks like the user is actually wearing the shoes.


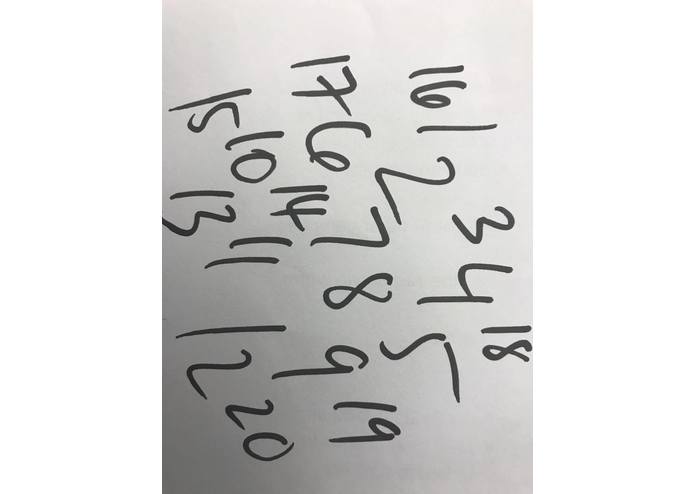

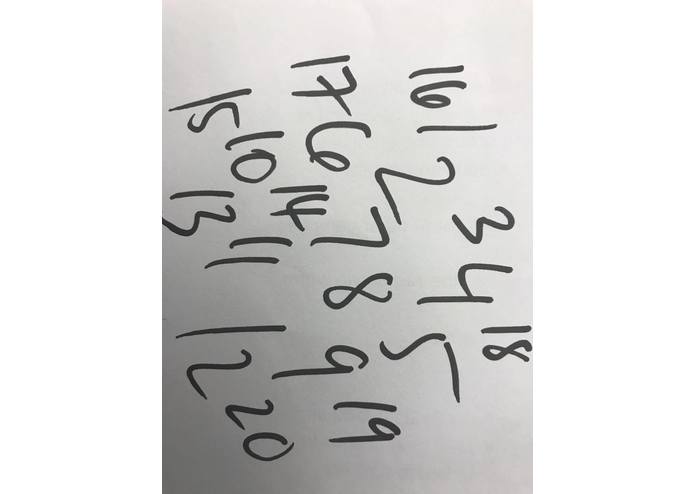

This was an image target used for tracking and model rendering.


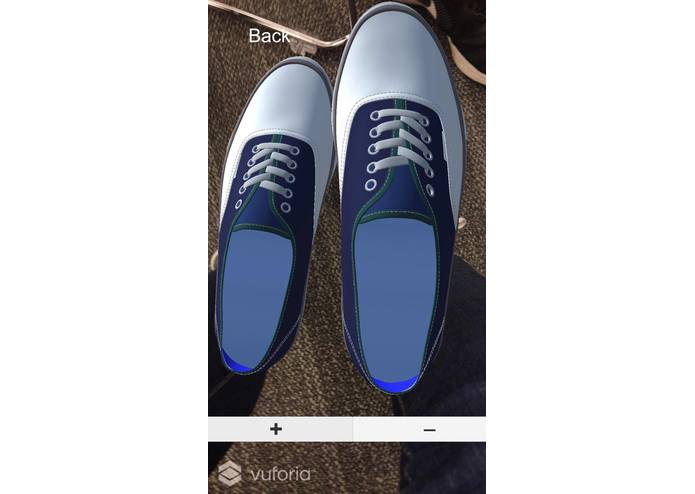

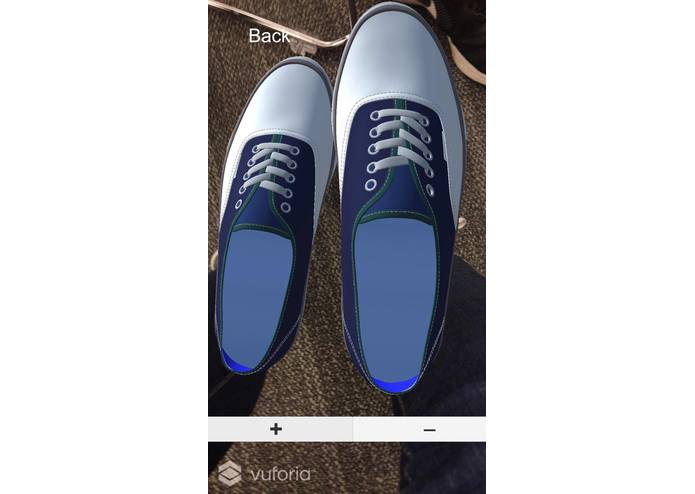

Example of scaling the shoe size up!


Example of scaling the shoe size down. These scaling features will be used to determine the user's real-life shoe size.

Inspiration

The process of buying new footwear is complicated by hassles such as identifying the right size across different brands and the amount of time each consumer must spend choosing the perfect style. This application aims to help users save time, and we believe the concept could extend beyond shoes to accessories (e.g. watches, necklaces, headwear). We cater to a wide demographic, including people who love to casually browse for shoes and, more importantly, those who struggle to physically try on shoes, such as seniors and people with disabilities or physical impairments.

What it does

The application is elegantly simple. On launch, it displays a database of shoe styles. Once the user chooses a pair to try on, the application loads an augmented reality model of the shoes in real time and tracks the user's feet to simulate trying them on. It also includes a scaling function that resizes the model to fit the user's feet. In the future, each scale setting will be mapped to the official shoe size of the specific brand so that the user knows exactly what size to purchase.
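To illustrate the planned scale-to-size mapping, here is a minimal Python sketch (the app itself was built in Unity, so this is not our project code). It assumes the unscaled model represents a hypothetical 26 cm reference foot length and uses made-up size charts for two hypothetical brands; real brand charts would replace them.

```python
from bisect import bisect_left

# Hypothetical: foot length (cm) that the unscaled model (scale == 1.0) represents.
REFERENCE_LENGTH_CM = 26.0

# Hypothetical per-brand charts: (largest foot length in cm that fits, size label),
# sorted by length. Placeholder values, not real brand data.
BRAND_SIZE_CHARTS = {
    "BrandA": [(24.0, "6"), (25.0, "7"), (26.0, "8"), (27.0, "9"), (28.0, "10")],
    "BrandB": [(24.5, "6"), (25.5, "7"), (26.5, "8"), (27.5, "9"), (28.5, "10")],
}


def foot_length_cm(scale: float) -> float:
    """Estimate foot length from the scale the user settled on."""
    if scale <= 0:
        raise ValueError("scale must be positive")
    return REFERENCE_LENGTH_CM * scale


def size_for_scale(brand: str, scale: float) -> str:
    """Return the smallest size in the brand's chart that fits the estimated length."""
    chart = BRAND_SIZE_CHARTS[brand]
    max_lengths = [max_len for max_len, _ in chart]
    # bisect_left finds the first entry whose max length is >= the foot length.
    idx = bisect_left(max_lengths, foot_length_cm(scale))
    if idx == len(chart):
        raise ValueError("estimated foot length is larger than the chart covers")
    return chart[idx][1]
```

With these placeholder charts, a scale of 0.98 (about 25.5 cm) maps to size 8 for BrandA but size 7 for BrandB, which is the kind of brand-to-brand inconsistency this feature is meant to hide from the user.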

How we built it

Using Vuforia, we created tracking images that served as image targets for rendering the augmented reality models. We organized these targets in Unity so that each model is attached to the correct foot. We added controls to each scene for switching between the shoes in the database and for scaling the model. We made the tracking images by drawing high-contrast patterns on sheets of paper so that they work in a similar manner to QR codes.
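The per-scene controls can be thought of as a small piece of state: cycle through the catalog, clamp the scale to a range, and choose the left or right model when an image target is detected. This is a hypothetical Python sketch, not our Unity code; the target names, step size, and scale limits are all assumptions.

```python
from dataclasses import dataclass

# Hypothetical image-target names: one printed tracking image per foot.
TARGET_TO_FOOT = {"target_left": "L", "target_right": "R"}


@dataclass
class ShoeViewer:
    """Hypothetical stand-in for the scene controls: choose a shoe and scale it."""
    catalog: list[str]
    index: int = 0
    scale: float = 1.0
    step: float = 0.05       # assumed scale increment per button press
    min_scale: float = 0.8   # assumed lower bound
    max_scale: float = 1.2   # assumed upper bound

    def __post_init__(self) -> None:
        if not self.catalog:
            raise ValueError("catalog must not be empty")

    @property
    def current(self) -> str:
        return self.catalog[self.index]

    def next_shoe(self) -> str:
        # Wrap around to the first shoe after the last one.
        self.index = (self.index + 1) % len(self.catalog)
        return self.current

    def previous_shoe(self) -> str:
        # Python's % keeps the index non-negative, so this wraps to the end.
        self.index = (self.index - 1) % len(self.catalog)
        return self.current

    def scale_up(self) -> float:
        # Round to avoid floating-point drift, then clamp to the allowed range.
        self.scale = min(self.max_scale, round(self.scale + self.step, 4))
        return self.scale

    def scale_down(self) -> float:
        self.scale = max(self.min_scale, round(self.scale - self.step, 4))
        return self.scale

    def model_for_target(self, target_name: str) -> tuple[str, float]:
        """Return (model id, scale) to render on the foot matching a detected target."""
        foot = TARGET_TO_FOOT[target_name]
        return f"{self.current}_{foot}", self.scale
```

Wrapping the catalog index means the same next/previous buttons work regardless of how many shoes are in the database, and applying one shared scale to both feet keeps the pair consistent.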

Challenges we ran into

So many. It was difficult to create the perfect tracking image. Rendering multiple augmented reality models was taxing on the iPhone, so in the future we will need an efficient way to store a large database of shoes.

One of our original ideas was to use machine learning, similar to Snapchat's face-tracking feature. It was supposed to identify the shape of a foot from its outline and unique five-toenail signature, but there was not enough time in the few hours we had to train a program to do this. The Google Intelligence API was of interest, but its significant delay made it difficult to keep the AR model rendering in real time. Furthermore, the application is currently limited to flat shoes because we have not yet designed a way to incorporate high-heeled shoes in an AR setting.

For this initial demo, we opted for the more stable 2D flat image targets instead of 3D cylindrical targets, which would provide depth and environment interaction to truly make the user look as though they are wearing the shoes. The cylindrical target looked very convincing but tracked intermittently, hence the demonstration of flat image tracking. The cylindrical code and files are included for reference.
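One possible way to address the memory problem is a least-recently-used (LRU) cache that keeps only a few shoe models loaded and loads the rest on demand. This is a swapped-in technique we did not implement; the `loader` callback and the capacity are hypothetical, and the sketch is in Python for illustration only.

```python
from collections import OrderedDict
from typing import Any, Callable


class ModelCache:
    """Keep at most `capacity` shoe models in memory, evicting the least recently used."""

    def __init__(self, loader: Callable[[str], Any], capacity: int = 3) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self._loader = loader        # hypothetical: loads one model from disk or network
        self._capacity = capacity
        self._models: "OrderedDict[str, Any]" = OrderedDict()

    def get(self, shoe_id: str) -> Any:
        if shoe_id in self._models:
            self._models.move_to_end(shoe_id)   # mark as most recently used
            return self._models[shoe_id]
        model = self._loader(shoe_id)           # cache miss: load on demand
        self._models[shoe_id] = model
        if len(self._models) > self._capacity:
            self._models.popitem(last=False)    # evict the least recently used
        return model

    def loaded(self) -> list[str]:
        return list(self._models)
```

With `capacity=2`, browsing shoes a, b, a, c keeps a and c loaded and evicts b, so memory use stays bounded no matter how large the catalog grows.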

Accomplishments that we're proud of

It tracks feet very nicely, and the shoes look awesome. We also added controls, a feature that many AR modeling applications do not offer. We emphasized user friendliness and built a UI that is complex in design yet simple to use.

What we learned

None of us had any experience with Unity or Vuforia going into this hack, so it was a learning experience the entire night. We also had no idea how to build an iPhone app. Somehow, we pulled it off.

What's next for Shoes AR

We're going to create a machine learning model to track bare feet, and we will train the neural network so that it can correctly identify the user's shoe size. Even though feet may swell or change in appearance, it would be cool if it could work the way Face ID, the iPhone X face recognition security feature, does. If we hit a wall with that, we think the product could be marketed with an add-on package of socks with unique designs that serve as tracking images. The designs on the socks would work much like a QR code to help align the AR models, and they would give us a business model for selling a tangible product as well as demographic data from users. We also hope to create more shoe models representing different brands. This application has endless possibilities to empower consumers and revolutionize how we shop from home.
