Board with camera mounted (Front Angle)

Board with camera mounted (Aerial Angle)

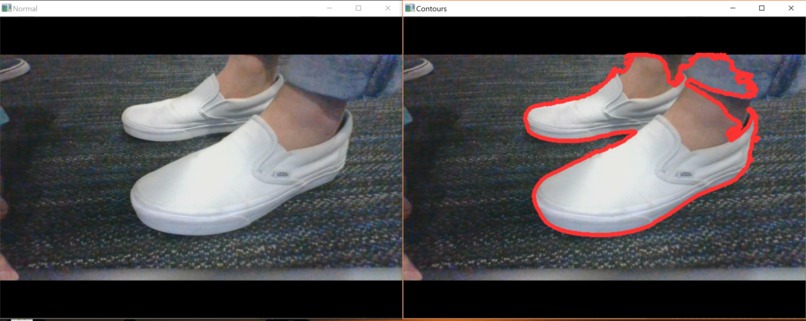

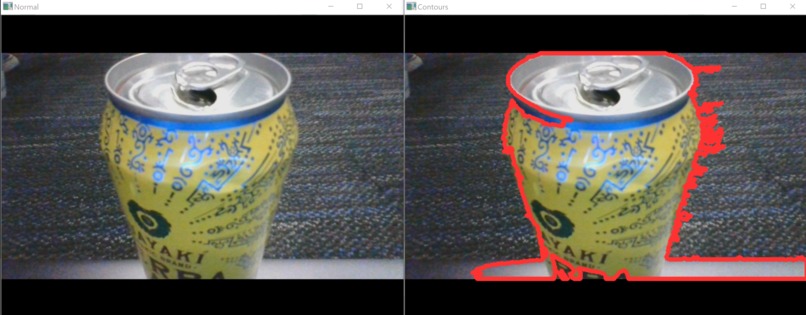

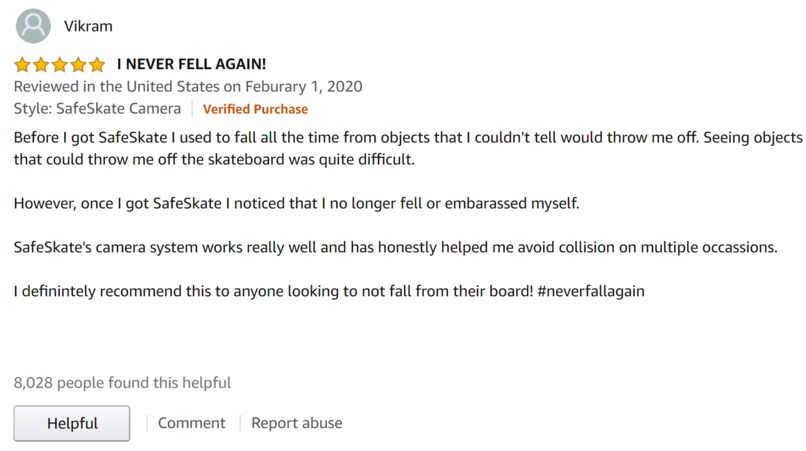

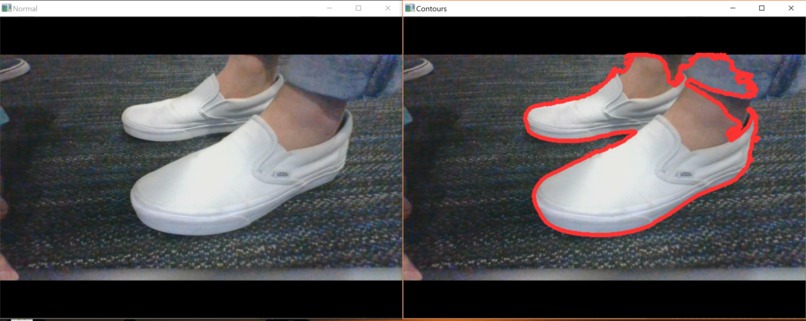

This image shows what the camera sees. Normal (Left) vs. Contour Detection (Right)

Normal (Left) vs. Contour Detection (Right)

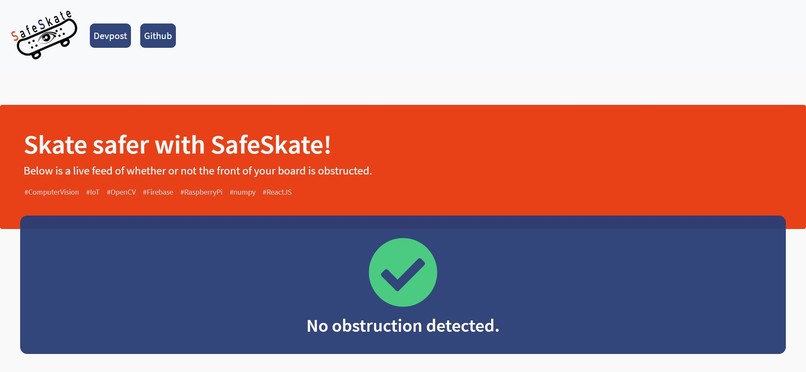

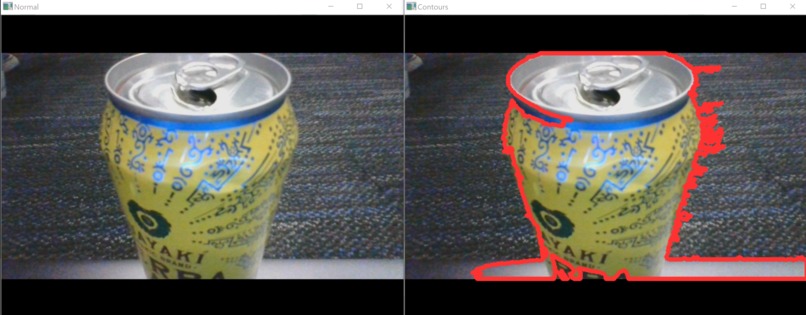

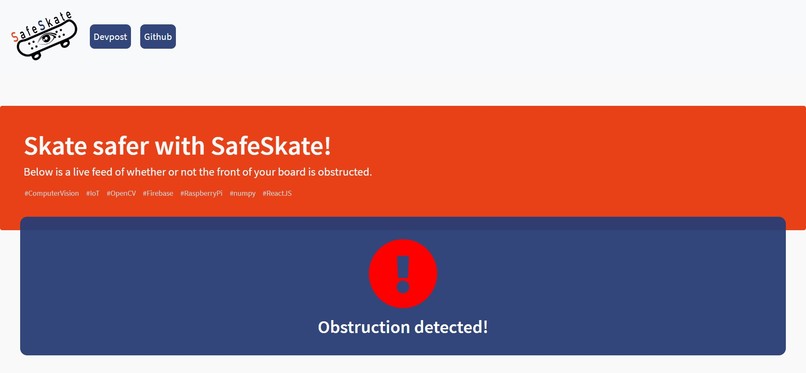

Front end when no obstruction detected (Default)

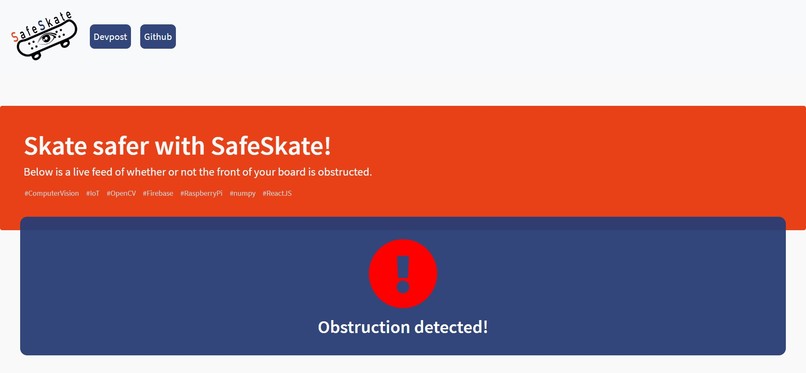

Front end when an obstruction is detected (beeping simultaneously)

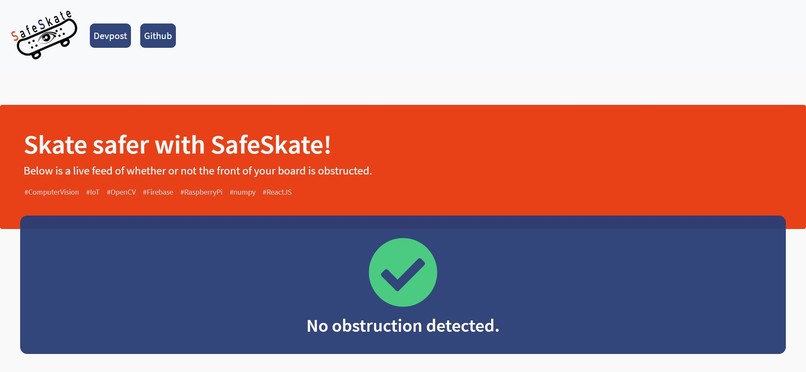

Testimony from a real, satisfied "customer"

SafeSkate

Computer Vision/IoT project for HackUCI 2020

Skate safer with SafeSkate.

What Does it Do?

The camera attaches to the front of your skateboard and constantly scans the area immediately ahead.

Using computer vision, the software detects obstructions in real time and warns skaters with a series of loud beeps about any objects ahead that may cause a fall!

Description

Are you a skater?

If so, chances are you have fallen because of an obstruction on the road. In other words, you've eaten s#!t.

What if something could warn you of objects in your path and save you from a potential fall and embarrassing moment?

That's where SafeSkate comes in.

Bringing in revolutionary algorithms for rider safety, we introduce an attachment on your board that will analyze hazards in the road and warn you in real time.

SafeSkate uses a camera attachment to capture live footage and processes it with computer vision via OpenCV.

If SafeSkate's algorithm detects a hazardous object, the back-end program quickly notifies the rider so they can react and avoid a potentially embarrassing moment.

For now, the program runs on your phone, but we hope to integrate it into remotes for a better experience.

How Does it Work?

Using a camera attached to a Raspberry Pi, we stream video of what is in front of the skateboard and feed it to our video algorithm.

With the Python library OpenCV, we detect objects in each frame, and if they meet a certain threshold for our algorithm (for example, if the area of an object exceeds a certain size), we update our Firebase database in real time.

At the same time, our web app continuously checks the database for changes and updates the site dynamically to show whether an obstruction is detected, providing our riders with a safe and pleasant riding experience.
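As a rough illustration of the back-end side of this flow, the sketch below forwards the obstruction flag only when it changes, so the database is not rewritten on every video frame. The class name `ObstructionPublisher` and the `write` callback are hypothetical and not taken from the project; in a real setup the callback might wrap a Firebase client call, and writing only on changes is an assumption about how the updates could be throttled.

```python
from typing import Callable, Optional


class ObstructionPublisher:
    """Forward the obstruction flag to a writer only when it changes."""

    def __init__(self, write: Callable[[bool], None]) -> None:
        # `write` is a hypothetical callback; in a real setup it might wrap
        # a Firebase client call that stores the flag for the web app.
        self._write = write
        self._last: Optional[bool] = None

    def update(self, obstructed: bool) -> bool:
        """Record the latest detection result; return True if it was written."""
        if obstructed == self._last:
            return False  # unchanged, so skip the network round trip
        self._write(obstructed)
        self._last = obstructed
        return True


# Dry run: a plain list stands in for the database.
writes = []
publisher = ObstructionPublisher(writes.append)
for flag in [False, False, True, True, False]:
    publisher.update(flag)
print(writes)  # [False, True, False]
```

Five per-frame results collapse into three writes here, which is all the web app needs in order to switch between its default and alert views.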

Object Detection

Object detection is done by recognizing contours. A contour is simply a curve joining all the continuous points along a boundary that share the same color or intensity.

Our backend script processes the video footage in real time, looking for contours to detect objects in the way.

Refer to the gallery to see our object detection in action.

Inspiration

This project was born from personal experience: one night just like any other, I decided to fly up a hill at my board's top speed of about 20 mph.

The unlit sidewalk hid a large rise in the next slab of concrete, which sent me tumbling off. If I had only seen what was ahead, I could have slowed down and prevented an injury.

Thus... SafeSkate was born!

Potential Updates

- Android and iOS App

- Add a live feed

- Take a picture of every obstruction

- Improve image detection

- Sync with smart watches

Challenges We Faced

One major challenge was developing a backend script quick enough to process live video and detect objects in real time. Computer vision and object detection are computationally heavy and slow, so many of the powerful vision APIs (such as Google's Vision API) were not feasible because they were simply too slow for real-time detection.

After a long search, we settled on OpenCV because it let us write our own object detection algorithm, one fast enough to run in real time.

Since most tools provided by OpenCV are designed for a setting where the camera sits still and objects from the environment move around, we had to come up with a unique approach (in our case, the camera moves and the environment stays still). We experimented with several algorithms, but after painstaking trial and error, we settled on one.

Once we figured out how to draw contours around objects, we had to determine how to filter out what we wanted from each frame. After we found the image processing steps and threshold values that picked out the important things in our video feed, we quickly built a web app for it.

The seemingly minor detail of playing a sound when an obstruction was detected took a lot of time to figure out, but it was the last piece needed to get the front end fully functional.

What We Learned

We learned how computer vision works with OpenCV and how to use it.

We learned how to connect the front end and back end through Firebase.

We learned how to refine working software to optimize it.

We learned how to set up the Raspberry Pi and use it to power the camera and process images.

Technologies Used

Backend:

- Python

- OpenCV

- NumPy

- Firebase

- Raspberry Pi

Frontend:

- React.js

- HTML/CSS
