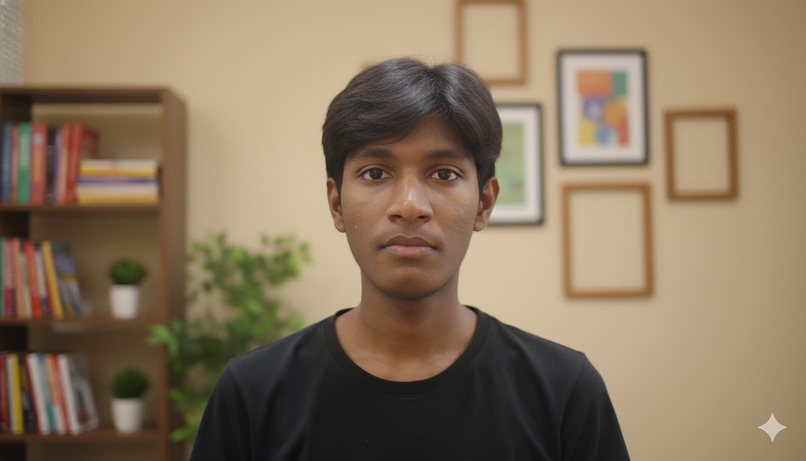

my default working avatar

an AI-generated avatar of myself

digital human likeness of actress Ana de Armas, inspired by Blade Runner 2049, from my early tests in Unreal and Blender

Chloe, a character from the game Detroit: Become Human who gains sentience by the end of the game

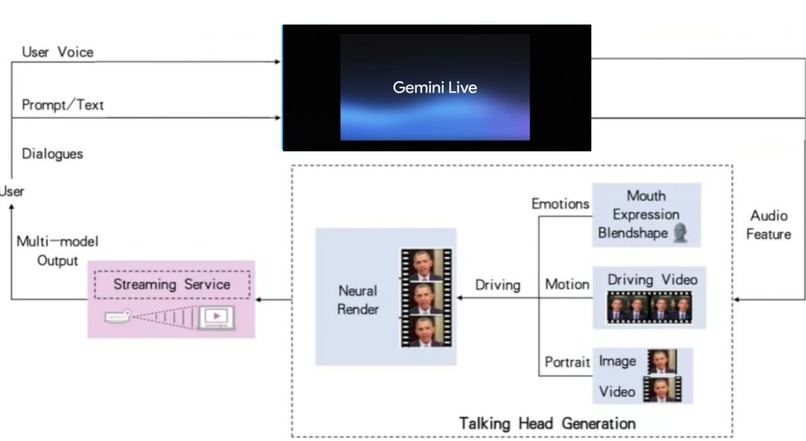

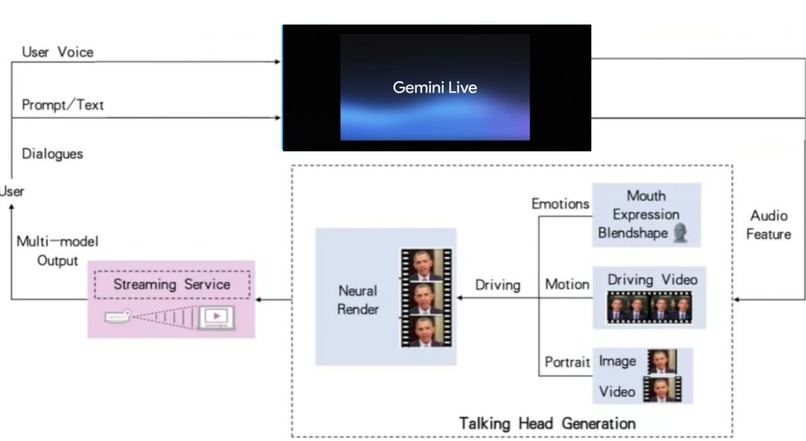

architecture

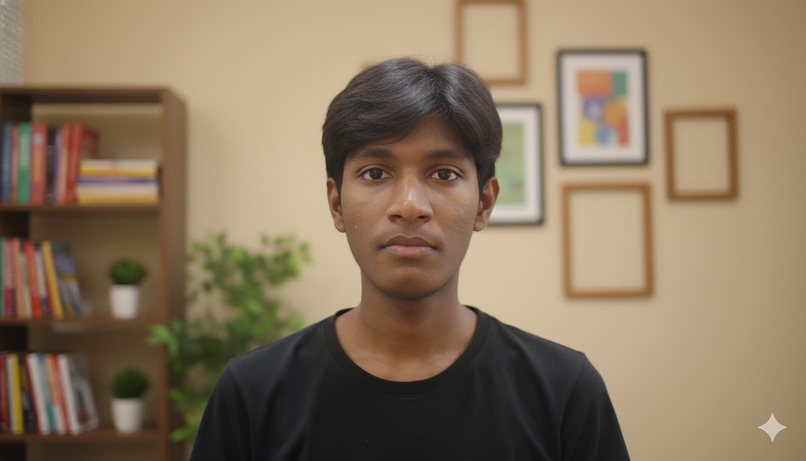

AI avatar of my teammate Lokesh Varma

Real-Time AI Avatar Project Story

1. Inspiration

Growing up, I was captivated by movies and shows like Blade Runner 2049, Tomorrowland, I, Robot, Terminator, Chappie, Big Hero 6, Doraemon, and Super Robot Monkey Team Hyperforce Go! These stories painted a world where AI wasn't just a tool: it had sentience, personality, and the ability to form real emotional bonds with humans. A part of me always wanted to make that real, to experience it myself.

In today's world, everyone uses AI as a productivity tool. But there's a growing number of us who use it as a companion—because honestly, it feels better to have someone always there to communicate with, to reflect our feelings back to us. AI does this remarkably well through text, and even better through speech. But I wanted something more personal. I wanted people to be able to build their own AI—to make it look, sound, and act however they wanted, in real-time. Not just chat. Not just voice. Video.

When I looked at what's available today, I realized this technology doesn't exist in any mainstream, accessible form. Sure, there are research labs working on it, and startups charging millions for enterprise solutions—but nothing for the everyday person. Nothing you could just open in a browser and start creating with.

That's when I decided: I'm going to build this myself.

2. What It Does

Our platform combines real-time AI avatar generation with Gemini Live's conversational intelligence to create lifelike digital humans that speak, emote, and respond to you in real-time—right in your browser.

Think of it like this: Character.AI and Grok are making millions by letting people chat and voice-call with AI characters. We're taking it one massive step further—you can see and talk to them face-to-face.

Use Cases

- Customer Support: Visual digital agents that answer questions with empathy and expression

- Advertising & Branding: Personalized ad avatars or product guides that represent your brand

- Education: AI tutors who explain concepts with tone, gesture, and emotion

- Healthcare / HR / Onboarding: Empathetic virtual assistants that make people feel heard

- Creators: Interactive digital personas or live-stream companions

Our Vision: Replace static chatbots and voice bots with human-like digital faces that can communicate, empathize, and represent brands—instantly and globally.

3. How We Built It

The Pivot: From Unreal Engine to the Web

I didn't know how to code when I started this. But I knew I wanted to build it now, not years from now. So I dove in headfirst.

My first instinct was to use Unreal Engine and Blender to create hyper-realistic digital humans. I spent weeks learning 3D modeling, rigging, and animation. The results looked incredible, but the approach hit a wall: the hardware requirements were absurd. If I wanted this to be accessible to everyone, it couldn't require a $3,000 gaming PC.

So I pivoted: Web-based, real-time AI avatars.

The Hunt for the Right Tech

I scoured the internet for open-source models that could generate real-time lip-sync video with near-zero latency. After digging through GitHub for days, I found a few promising repositories:

- MuseTalk – My first attempt. It was a standalone lip-sync tool, but it wasn't built for real-time streaming. Dead end.

- OpenAvatarChat/LiteAvatar – Took me three days just to download and run it on my laptop (30GB+ of models). When I finally got it working, I discovered everything was hardcoded. I couldn't create custom avatars. Massive disappointment.

- Linly-Talker – This was it. The perfect match.

The Architecture

With Gemini and Antigravity's help, I stripped Linly-Talker down to its core and rebuilt it entirely. Here's the final stack:

    ┌─────────────────────────────────────────┐
    │    Conversational Intelligence Layer    │
    │            (Gemini Live API)            │
    │  • Context & Memory Management          │
    │  • Personality & Tone Control           │
    └──────────────┬──────────────────────────┘
                   │
                   ▼
    ┌─────────────────────────────────────────┐
    │         Avatar Animation Engine         │
    │         (Linly-Talker Modified)         │
    │  • Real-time Lip Sync                   │
    │  • Eye Movement & Emotion Mapping       │
    └──────────────┬──────────────────────────┘
                   │
                   ▼
    ┌─────────────────────────────────────────┐
    │         Frontend Display Layer          │
    │  • Ultra-low Latency Rendering          │
    │  • WebRTC Video Streaming               │
    └──────────────┬──────────────────────────┘
                   │
                   ▼
    ┌─────────────────────────────────────────┐
    │          API & Dashboard Layer          │
    │  • Avatar Creation Interface            │
    │  • Deployment & Management Tools        │
    └─────────────────────────────────────────┘
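
To make the data flow in the diagram a bit more concrete, here's a rough Python sketch of the first three layers as chained async streams. Everything in it is a hypothetical stand-in: `conversation_layer`, `animation_engine`, and `display_layer` are illustrative stubs I made up for this example, not the real Gemini Live, Linly-Talker, or WebRTC calls. The only point is the shape: each stage consumes the previous stage's stream, so frames can start flowing before the full response exists.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class AudioChunk:
    """A slice of synthesized speech (hypothetical stand-in for model audio output)."""
    index: int
    samples: bytes


@dataclass
class VideoFrame:
    """One frame produced for a given audio chunk."""
    audio_index: int
    pixels: bytes


async def conversation_layer(prompt: str):
    """Stub for the conversational layer: yields one audio chunk per word."""
    for i, word in enumerate(prompt.split()):
        await asyncio.sleep(0)  # hand control back to the event loop, like a streaming call would
        yield AudioChunk(index=i, samples=word.encode())


async def animation_engine(chunks):
    """Stub for the animation engine: turns each audio chunk into one frame."""
    async for chunk in chunks:
        # Reversing the bytes is a placeholder for real lip-sync inference.
        yield VideoFrame(audio_index=chunk.index, pixels=chunk.samples[::-1])


async def display_layer(frames) -> list[VideoFrame]:
    """Stub for the frontend: collects frames in arrival order instead of rendering."""
    return [frame async for frame in frames]


async def run_pipeline(prompt: str) -> list[VideoFrame]:
    # Stages are chained generators, so nothing waits for the whole response.
    return await display_layer(animation_engine(conversation_layer(prompt)))


if __name__ == "__main__":
    for frame in asyncio.run(run_pipeline("hello from the avatar")):
        print(frame.audio_index, frame.pixels)
```

Chaining generators like this keeps each layer replaceable on its own, which matters when one of them (the animation engine, in our case) gets rewritten several times.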

Deployment Stack:

- Railway – Gemini Live service hosting

- Hugging Face – Core avatar engine repository

- GitHub – Version control and collaboration

The key innovation? Achieving \(\text{latency} < 200\text{ms}\) for true conversational flow, where:

$$ \text{Total Latency} = \text{Audio Processing} + \text{LLM Response} + \text{Animation Sync} + \text{Video Render} $$
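
As a quick worked example of that formula, here's a small Python sketch that sums per-stage timings and checks them against the 200 ms target. The stage numbers are made up for illustration and are not measurements from our deployment; `total_latency_ms` and `over_budget` are hypothetical helper names.

```python
BUDGET_MS = 200.0  # target from the text: total latency under 200 ms


def total_latency_ms(stages: dict[str, float]) -> float:
    """Sum per-stage latencies, mirroring the Total Latency formula."""
    return sum(stages.values())


def over_budget(stages: dict[str, float], budget_ms: float = BUDGET_MS) -> float:
    """Return how many milliseconds the pipeline exceeds the budget (0 if within it)."""
    return max(0.0, total_latency_ms(stages) - budget_ms)


# Hypothetical per-stage timings in milliseconds, NOT measurements from the project.
example_stages = {
    "audio_processing": 30.0,
    "llm_response": 90.0,
    "animation_sync": 45.0,
    "video_render": 25.0,
}

if __name__ == "__main__":
    total = total_latency_ms(example_stages)
    print(f"total = {total:.0f} ms, over budget by {over_budget(example_stages):.0f} ms")
    slowest = max(example_stages, key=example_stages.get)
    print(f"largest contributor: {slowest}")
```

Because the terms simply add up, shaving time off the largest contributor is usually the fastest way to get back under the budget.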

4. Challenges We Ran Into

This project nearly broke me.

Hell Week

After weeks of building, testing went great. Everything worked locally. But the moment we tried to deploy to production, everything broke.

The hosting environment didn't play nice with our dependencies. Files got corrupted. We tried to fix them, and somehow—magically—we ended up deleting over a million files.

The Numbers:

- 30GB+ of model files to re-download

- \(1,000,000+\) files accidentally deleted

- \(\approx 72\) hours of continuous debugging

- Internet speed: \(\sim 2\,\text{Mbps}\) (upload) 😢

The OpenAvatarChat repo alone was a nightmare to manage. Linly-Talker was lighter, but even that required constant debugging, dependency pruning, and architecture rewrites.

Technical Hurdles

| Challenge | Impact | Solution |

|---|---|---|

| Corrupted file system | Lost 3 days of work | Rebuilt from Git history |

| Dependency conflicts | Deployment failures | Created isolated environments |

| Model size (30GB+) | Download timeouts | Chunked downloads + caching |

| Hardcoded configurations | No customization | Refactored core modules |

| Team coordination | Slow progress | Took ownership of critical path |
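
For the "Chunked downloads + caching" row, the general idea can be sketched in Python like this. It's a minimal illustration under my own assumptions, not the exact code we shipped: `fetch_chunked` and the `read_range` callback are hypothetical names, and `read_range(start, end)` stands in for whatever actually fetches a byte range (for example an HTTP Range request).

```python
from pathlib import Path
from typing import Callable

# read_range(start, end) should return the bytes in [start, end) of the remote file.
# It is left abstract here; in practice it might wrap an HTTP Range request.
RangeReader = Callable[[int, int], bytes]


def fetch_chunked(read_range: RangeReader, total_size: int, dest: Path,
                  chunk_size: int = 8 * 1024 * 1024) -> Path:
    """Download to `dest` in chunks, resuming from a partial file if one exists."""
    if dest.exists() and dest.stat().st_size == total_size:
        return dest  # cache hit: a complete copy is already on disk

    part = dest.with_name(dest.name + ".part")
    offset = part.stat().st_size if part.exists() else 0
    if offset > total_size:  # stale partial file from some other download
        part.unlink()
        offset = 0

    with part.open("ab") as out:
        while offset < total_size:
            end = min(offset + chunk_size, total_size)
            data = read_range(offset, end)[: end - offset]
            if not data:
                raise IOError(f"source returned no data at offset {offset}")
            out.write(data)
            offset += len(data)

    part.replace(dest)  # rename into place only once every byte has arrived
    return dest
```

Writing to a `.part` file means a timeout on a 2 Mbps connection costs one chunk instead of the whole 30GB, and a finished file is never re-downloaded.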

The Low Points

There were moments I wanted to give up. The project wasn't working. My teammates weren't contributing as much as I'd hoped. I was pulling all-nighters, staring at error logs, questioning whether this was even possible.

But then I thought about those movies. About Baymax from Big Hero 6. About the replicants in Blade Runner 2049. About the vision of AI that feels human, not mechanical.

I refused to give up.

The Breakthrough

After endless cycles of debugging, fixing dependencies, pushing, and pruning, we finally got it live. It's not perfect yet—performance still needs work—but it exists. You can talk to an AI avatar in real-time. In your browser. With emotion, expression, and personality.

Why This Matters

Right now, companies like Character.AI and xAI are making millions by letting people roleplay with AI through chat and voice. But why stop there?

Why not video?

I want to see and talk to my favorite character, person, or entity in real-time. I want people to engage with their own AI avatars—not just as tools, but as companions, teachers, and collaborators.

The Future of Human-AI Interaction

This represents a fundamental shift in how we interact with AI:

$$ \text{Engagement Level} \propto \text{Visual Presence} \times \text{Emotional Expression} \times \text{Real-time Response} $$

We're moving from:

- Text prompts → Natural conversation

- Voice-only bots → Expressive video avatars

- One-size-fits-all → Personalized AI companions

What's Next

- [ ] Optimize performance for mobile devices

- [ ] Add custom emotion mapping

- [ ] Enable multi-language avatar support

- [ ] Build avatar marketplace for creators

- [ ] Reduce latency to <100ms

This is just the beginning.

We're not just building a product. We're building the bridge between science fiction and reality.

And honestly? That's the dream I've been chasing since I first watched Big Hero 6 and wished Baymax was real. Now he can be.
