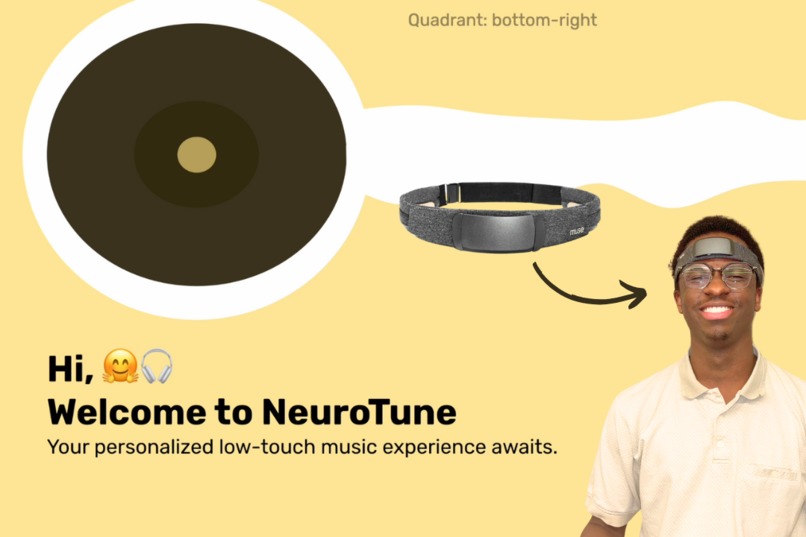

Welcome to NeuroTune! Browse the following images to see the software in use, alongside some of the project's other highlights.

-

User using NeuroTune (Muse S headband on) - Homepage - Notice the large green eye-tracking cursor

-

User Using NeuroTune (Muse S headband on) - Large buttons for accessible UI

-

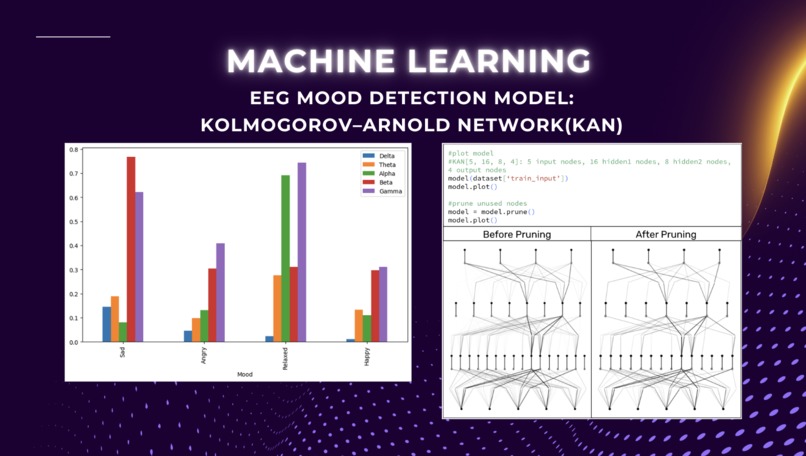

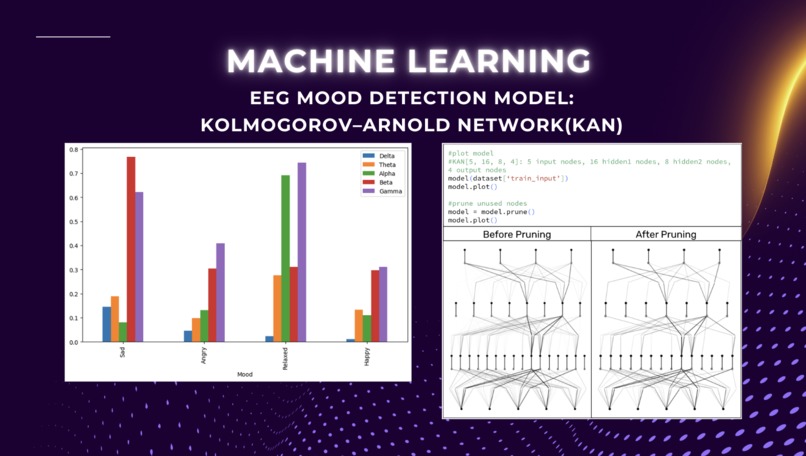

Custom KAN Model Data Visualization - Mood Detection

-

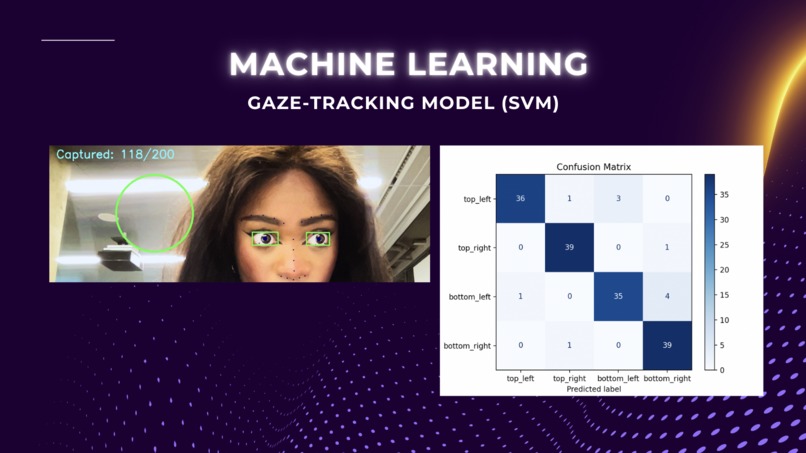

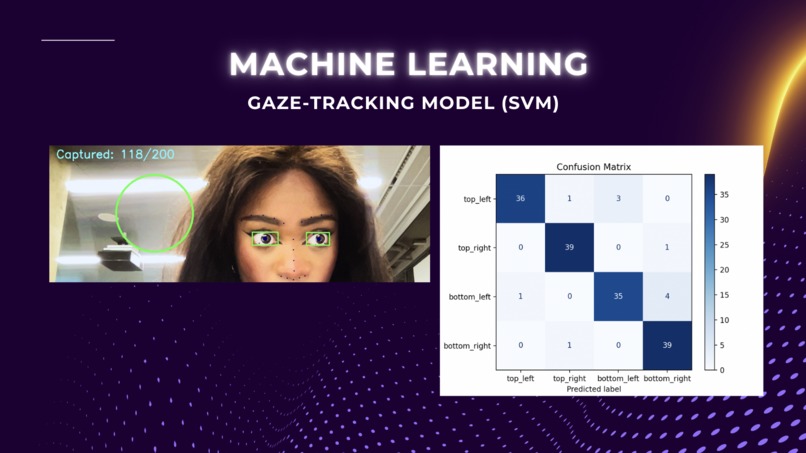

Custom SVM Model - Gaze Detection

-

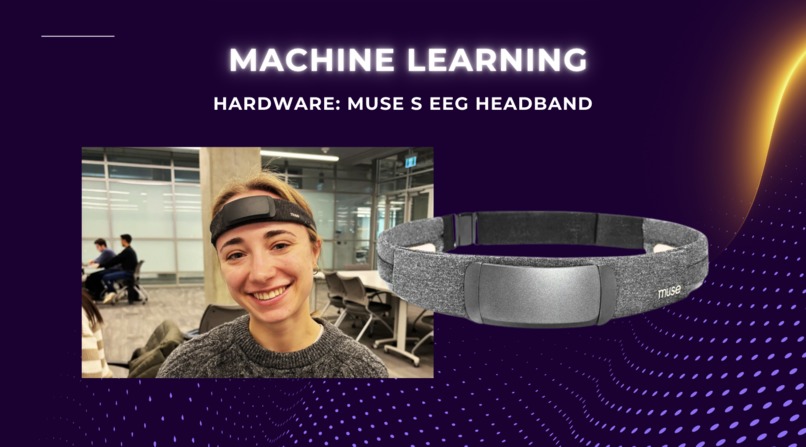

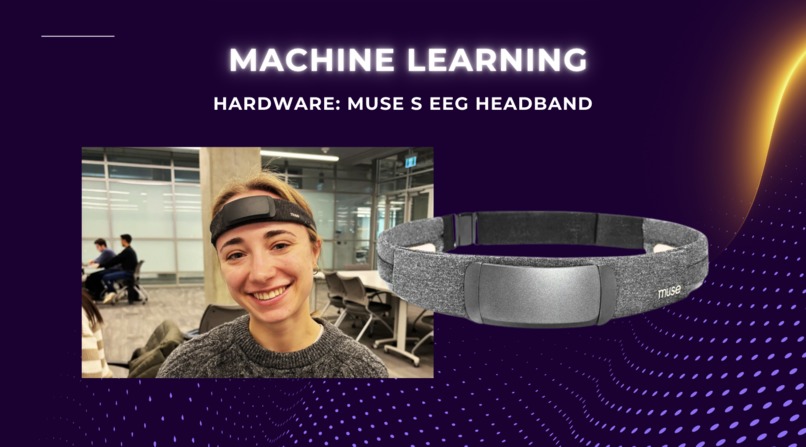

Hardware - Muse S EEG Headband

-

Initial Figma designs. The app design was later improved with a modern UI and more accessible buttons and text.

NeuroTune: Music Without Barriers 🎵

For many, music is more than entertainment: it’s therapy, comfort, and connection. But for individuals with motor challenges or speech impairments, such as people living with Parkinson’s disease, accessing music can be frustrating. Swiping, tapping, or even using voice commands with devices like Alexa can become a monumental task.

NeuroTune bridges this gap by reimagining how music is accessed. Our web app replaces touch-based controls with gaze input and mood detection from brain activity.

Using the Muse S headset, NeuroTune measures brain activity, analyzing alpha, beta, gamma, theta, and delta waves to detect emotional states. Our custom-trained Kolmogorov-Arnold Network (KAN) classifies these states into moods like “sad,” “angry,” “relaxed,” and “happy.”
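To make the wave analysis concrete, here is a minimal sketch of how relative band powers could be computed from a single EEG channel before being passed to a classifier. It is not NeuroTune's actual pipeline: the band edges, the 256 Hz sampling rate in the demo, and the `band_powers` helper are all assumptions made for illustration, and the sketch uses plain NumPy rather than the project's BrainFlow code.

```python
import numpy as np

# Assumed band edges in Hz; conventions differ between sources.
BANDS = {
    "delta": (1.0, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 45.0),
}


def band_powers(signal, sampling_rate):
    """Return the relative power per band for one 1-D window of EEG samples."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()          # remove the DC offset
    window = np.hanning(signal.size)         # taper to reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(signal * window)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / sampling_rate)

    powers = {}
    for name, (low, high) in BANDS.items():
        mask = (freqs >= low) & (freqs < high)
        powers[name] = float(spectrum[mask].sum())

    total = sum(powers.values())
    if total == 0:
        # Flat input: no energy in any band.
        return {name: 0.0 for name in powers}
    return {name: value / total for name, value in powers.items()}


if __name__ == "__main__":
    rate = 256                               # assumed sampling rate in Hz
    t = np.arange(0, 4, 1 / rate)            # four seconds of samples
    demo = np.sin(2 * np.pi * 10 * t)        # synthetic 10 Hz tone, not real EEG
    features = band_powers(demo, rate)
    print(max(features, key=features.get))
```

A five-value feature vector like this, computed per channel and per time window, is one plausible input for a mood classifier such as the KAN described above.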

With real-time eye tracking, powered by a custom SVM model, users control the app through gaze alone. Look at specific screen areas to pause, play, or skip songs—no physical input required. NeuroTune then recommends a playlist of three songs tailored to your detected mood, adapting over time for better personalization.
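The gaze control described above can be sketched as a mapping from a gaze point to a screen region, with an action firing once the gaze has rested there long enough. This is only an illustration under stated assumptions: the region layout, the one-second dwell threshold, and the `Region` and `DwellSelector` names are hypothetical, and the gaze point is taken as given (in NeuroTune it would come from the SVM-based tracker).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    """A rectangular screen area in normalized coordinates (0..1)."""
    action: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x, y):
        return self.left <= x <= self.right and self.top <= y <= self.bottom


# Hypothetical layout: three large buttons along the bottom of the screen.
REGIONS = [
    Region("pause", 0.00, 0.7, 0.33, 1.0),
    Region("play", 0.33, 0.7, 0.66, 1.0),
    Region("skip", 0.66, 0.7, 1.00, 1.0),
]


class DwellSelector:
    """Fire an action once the gaze has stayed in one region long enough."""

    def __init__(self, regions, dwell_seconds=1.0):
        self.regions = regions
        self.dwell_seconds = dwell_seconds   # assumed threshold
        self._current = None                 # action under the gaze, if any
        self._since = None                   # when the gaze entered it
        self._fired = False                  # avoid repeating the same action

    def update(self, x, y, timestamp):
        """Feed one gaze sample; return an action name or None."""
        # The first matching region wins if a point sits on a shared edge.
        action = next(
            (r.action for r in self.regions if r.contains(x, y)), None
        )
        if action != self._current:
            # Gaze moved to a different region (or off all regions): restart.
            self._current = action
            self._since = timestamp
            self._fired = False
            return None
        if action is None or self._fired:
            return None
        if timestamp - self._since >= self.dwell_seconds:
            self._fired = True
            return action
        return None


if __name__ == "__main__":
    selector = DwellSelector(REGIONS)
    for ts in (0.0, 0.5, 1.0, 1.5):
        print(ts, selector.update(0.8, 0.9, ts))
```

Requiring a dwell rather than acting on a single sample is one common way to avoid triggering controls when the user merely glances across a button; whether NeuroTune uses this approach is not stated in the write-up.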

In the future, NeuroTune could expand to recommend movies, podcasts, or meditation tracks. It has potential applications in therapy, stress management, and relaxation sessions.

NeuroTune is more than an app—it’s a step toward inclusivity. By removing physical barriers, we ensure everyone, regardless of ability, can experience the therapeutic power of music effortlessly. 🎶

Built With

- bootstrap-(for-initial-ui-prototyping)

- brainflow

- camera/webcam-for-eye-tracking

- css3

- dlib

- dotenv-(for-environment-variable-management)

- eventlet

- figma-(for-ui-design-mockups)

- flask

- flask-socketio

- git

- github

- google-colab

- google-drive-(for-collaborative-file-sharing)

- html5

- imutils

- javascript

- json-for-data-storage

- jupyter-notebook

- kan-neural-network-architecture

- keras

- linux-(for-server-testing)

- macos-(development-environment)

- matplotlib-(for-visualizations)

- muse-s-headset

- numpy

- opencv

- pandas

- pandas-(for-data-analysis-and-manipulation)

- pykan

- pyqt5

- pyqtgraph

- python

- python-dotenv

- pyyaml

- scikit-learn

- screeninfo

- shape-predictor-68-face-landmarks.dat-(dlib-model)

- socket.io

- spotify

- spotipy

- svm-machine-learning-model

- tensorflow-(for-experimenting-with-neural-networks)

- torch

- tqdm

- visual-studio-code
