Our main character visible in the bird's eye AR representation of the world

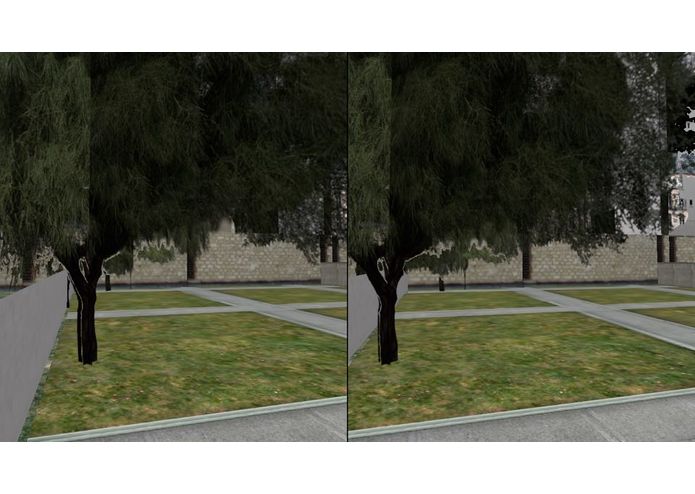

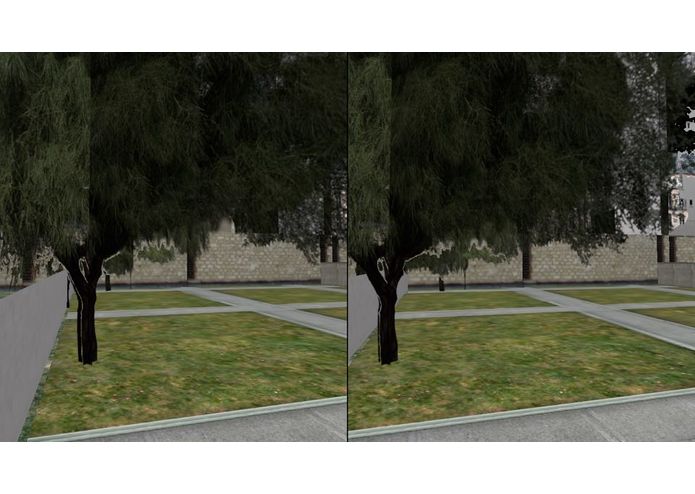

A sample of the VR user's view

A 3D rendering of Simmons Hall

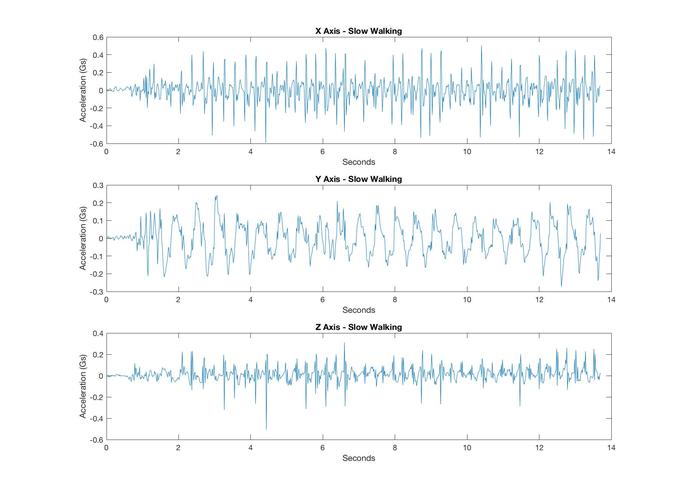

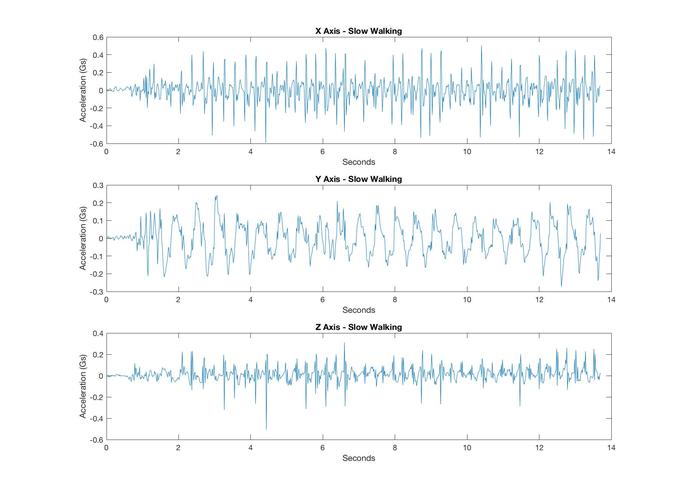

CoreMotion data giving us insight on walking mechanics

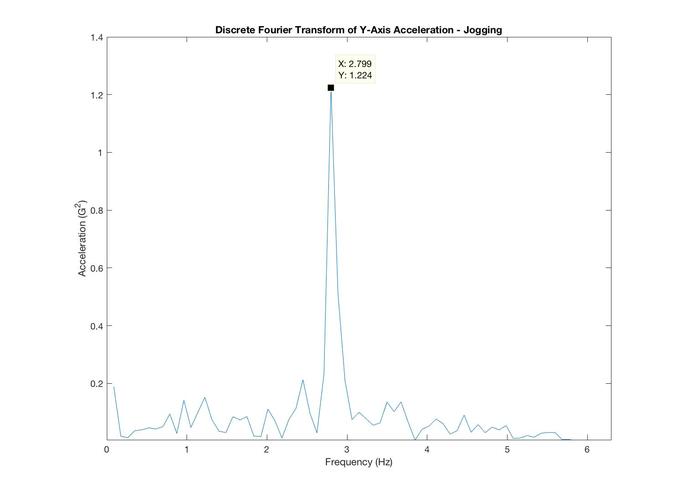

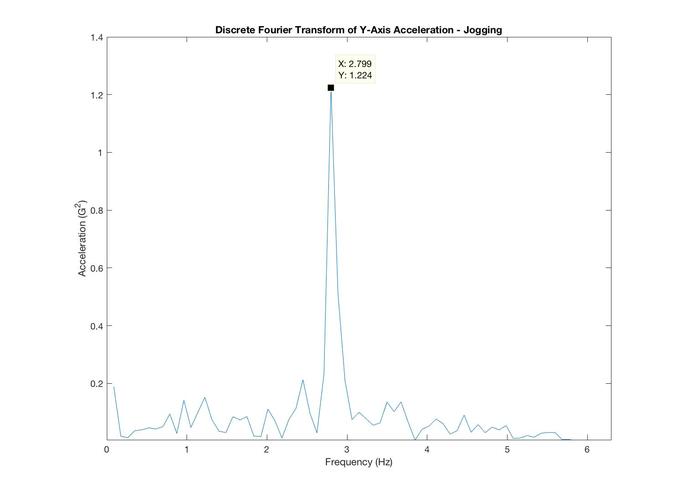

A Discrete Fourier Transform of our acceleration data which pinpoints the exact speed of jogging in place

Longfellow bridge in our point cloud meshes

Inspiration

We wanted to make VR even more immersive by building its 3D maps from the real world. We also wanted to demonstrate the power of AR as a medium for interactive, collaborative gaming. Finally, we wanted to connect the physical world with the virtual one, so a player moves through the game by jogging in place.

What it does

Our game lets 4 players, divided into teams of 2, run through the streets of Paris in search of a goal destination. On each team, one player is immersed at street level in VR, while their teammate, the navigator, views the entire world map as an AR overlay on a surface. The navigator must guide their teammate through the streets of an unfamiliar city and get them to the destination before the other team. The faster the VR player jogs in place, the faster they move through the VR world.
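The link between jogging and in-game movement can be sketched as a simple mapping from step rate to forward speed. This is only an illustration: the function name `game_speed`, the stride length, and the speed cap are assumptions, not values from our build.

```python
def game_speed(steps_per_second, stride_m=1.0, max_speed=6.0):
    """Map jogging cadence to forward speed in the VR world (metres per second).

    stride_m and max_speed are assumed tuning values, not measured ones.
    """
    if steps_per_second <= 0:
        return 0.0  # standing still in the real world means standing still in VR
    # Speed grows linearly with cadence, capped so a noisy reading cannot launch the player.
    return min(steps_per_second * stride_m, max_speed)
```

A cap like this is one way to keep a spurious accelerometer spike from sending the runner flying down the street.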

How we built it

- We developed and rendered real-world meshes from the Google Street View API. We used openFrameworks for visual rendering and scene reconstruction, and MeshLab and Blender to generate the 3D scenes. We ran SLAM algorithms to create 3D scenes from 2D panoramas.

- One member explored hardware options for connecting the physical and virtual worlds. He used Apple's CoreMotion framework and applied signal processing techniques to turn accelerometer data from the iPhone in the Google Cardboard into accurate estimates of jogging speed.

- One member developed the AR/VR world and synchronized it across the 4 players. He used ARKit and SceneKit to create the VR world and the tabletop AR overlay, and Firebase to synchronize the VR user's location with the player icon in the AR user's bird's-eye view.
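To show the player icon in the right place, the VR user's position in the street-level world has to be scaled down onto the tabletop overlay. Below is a minimal sketch of that coordinate mapping, assuming a square playable area and a square overlay centred on the table. The `TabletopMap` class and its fields are hypothetical names for illustration; the actual app does this inside ARKit/SceneKit, with the position arriving through Firebase.

```python
from dataclasses import dataclass


@dataclass
class TabletopMap:
    """Hypothetical description of how the city model sits on the AR surface."""
    world_min_x: float  # west edge of the playable area, in world metres
    world_min_z: float  # south edge of the playable area, in world metres
    world_size: float   # side length of the (square) playable area, in metres
    table_size: float   # side length of the AR overlay on the table, in metres

    def to_table(self, world_x, world_z):
        """Scale a VR-world position into overlay coordinates centred on the table."""
        scale = self.table_size / self.world_size
        u = (world_x - self.world_min_x) * scale - self.table_size / 2
        v = (world_z - self.world_min_z) * scale - self.table_size / 2
        return (u, v)


if __name__ == "__main__":
    city = TabletopMap(world_min_x=0.0, world_min_z=0.0, world_size=1000.0, table_size=0.5)
    # A runner in the middle of the map should land at the centre of the overlay.
    print(city.to_table(500.0, 500.0))
```

Only the runner's two world coordinates need to be shared between devices; each AR client can apply its own mapping locally.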

Challenges we ran into

A substantial amount of our time was spent trying to stitch together our own 3D renderings from Google Street View panoramas. Ultimately, we had to download and import existing object files from the internet. Another huge challenge was synchronizing all four players in real time in a shared AR/VR world.

Further, we were able to use Fast Fourier Transforms to get extremely accurate estimates of our jogging speed when processing the CoreMotion data in MATLAB, but implementing this in Swift proved much more difficult. Instead, we built a simpler (but still fairly accurate) estimation script that does not transform the data to the frequency domain.
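The difference between the two approaches can be illustrated on a synthetic signal. The sketch below is in Python rather than MATLAB or Swift and is not our actual code: the frequency-domain estimate picks the strongest DFT bin in an assumed 1 to 5 Hz step band, and the time-domain estimate counts upward crossings of the mean, which is just one plausible way to avoid the transform. All function names, the band limits, and the test signal are assumptions.

```python
import math


def synthetic_jog(step_hz, sample_hz, seconds, phase=1.0):
    """Fake vertical acceleration in g: gravity offset plus one sine cycle per step."""
    n = int(sample_hz * seconds)
    return [1.0 + 0.5 * math.sin(2 * math.pi * step_hz * i / sample_hz + phase)
            for i in range(n)]


def cadence_dft(samples, sample_hz, lo_hz=1.0, hi_hz=5.0):
    """Frequency-domain estimate: strongest DFT bin inside an assumed step band."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the gravity (DC) component
    best_hz, best_power = 0.0, 0.0
    for k in range(1, n // 2):
        hz = k * sample_hz / n  # centre frequency of bin k
        if not lo_hz <= hz <= hi_hz:
            continue
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_hz, best_power = hz, power
    return best_hz


def cadence_crossings(samples, sample_hz):
    """Time-domain estimate: count upward crossings of the mean, one per step."""
    mean = sum(samples) / len(samples)
    ups = sum(1 for a, b in zip(samples, samples[1:]) if a < mean <= b)
    return ups / (len(samples) / sample_hz)  # crossings per second


if __name__ == "__main__":
    signal = synthetic_jog(step_hz=2.5, sample_hz=50, seconds=4)
    print("DFT estimate (steps/s):      ", cadence_dft(signal, 50))
    print("Crossing estimate (steps/s): ", cadence_crossings(signal, 50))
```

On clean data the two agree closely. On real accelerometer data the crossing count is more sensitive to noise and usually needs smoothing first, which is the accuracy trade-off described above.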

Accomplishments that we're proud of

We made a game that we would play ourselves and that we think others would too. It creates an immersive experience for four users, and the players get some exercise while they play!

What we learned

We could have avoided some of the dead ends we got stuck in over the course of the hackathon by checking out more APIs beforehand.

What's next for MazerunnerAR

World dominance. We want to extend our game to work anywhere in the world that has Google Street View data. The game would have unlimited maps: players would just pick an area from Google Earth and play with their friends in AR/VR.
